A Semantic-Enhanced Cybersecurity Named Entity Recognition Approach Oriented to Lightweight Adaptation of Large Language Models


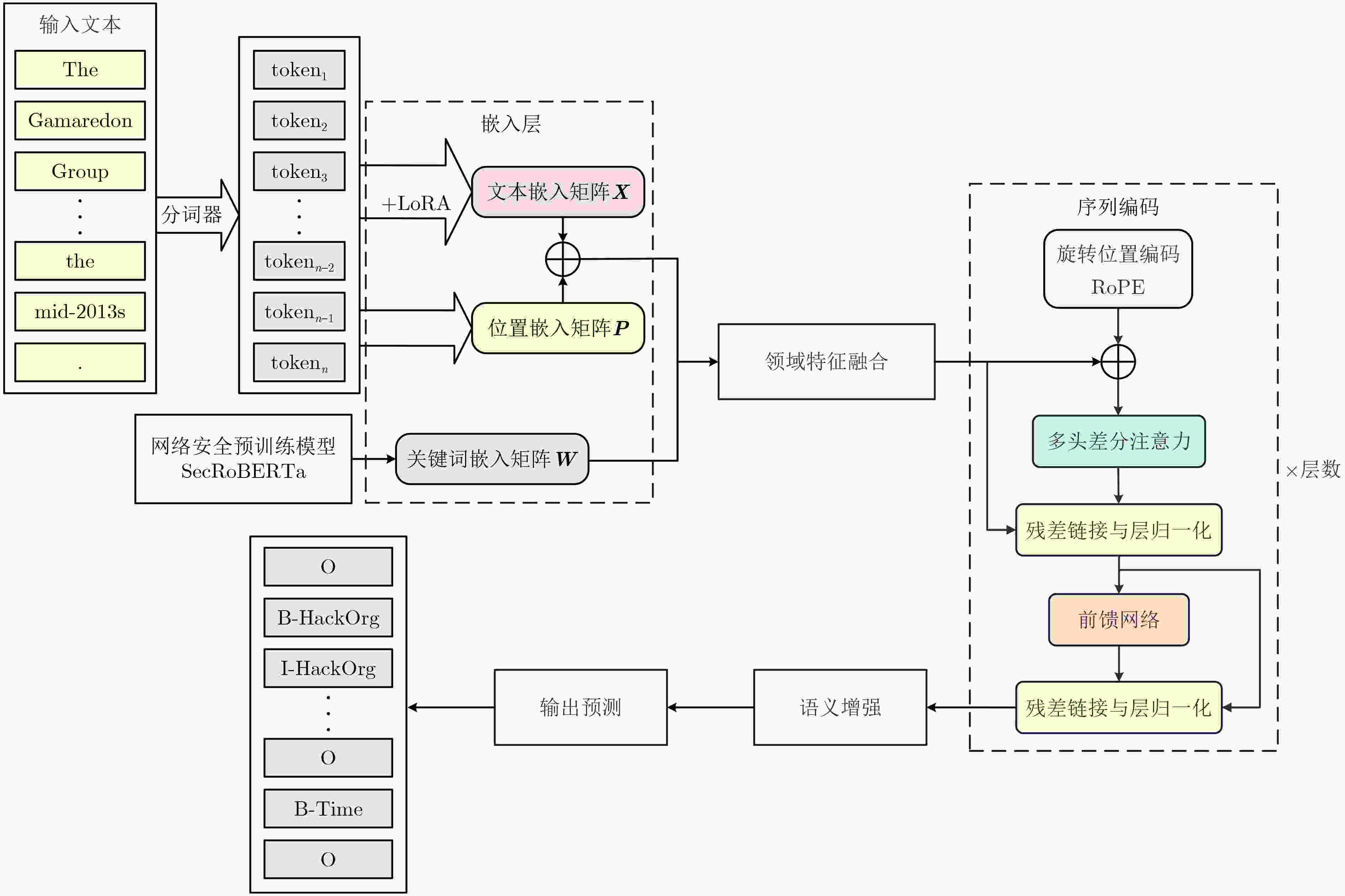

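Summary: Named entity recognition (NER) in the cybersecurity domain is a core technology underpinning threat intelligence analysis, security incident response, and vulnerability management, yet it faces severe challenges including scarce annotated data, dense technical terminology, and insufficient semantic fusion. Existing large language model (LLM) methods additionally suffer from insufficient domain semantic fusion and low recall on rare entities. To address these challenges, this paper proposes a semantic-enhanced cybersecurity NER method oriented to lightweight adaptation of LLMs. The method integrates a lightweight adaptation strategy that combines LLM2Vec with low-rank adaptation to preserve deep semantic encoding while reducing training cost, designs a sparse gated attention mechanism to strengthen the fusion of domain keywords, and introduces a SecRoBERTa-based semantic enhancement component to improve feature robustness in few-shot scenarios. Finally, a masked conditional random field constrains label paths to be valid. Experiments on two public datasets, DNRTI and APTNER, show that the proposed method outperforms existing mainstream methods in precision, recall, and F1 score. On DNRTI the F1 score reaches 91.91%, 2.14 percentage points above the current best model, which verifies its effectiveness for cybersecurity entity recognition. The method offers an efficient, lightweight solution for cybersecurity NER in low-resource scenarios and has practical significance for advancing automated threat intelligence analysis and intelligent security defense systems.

Abstract: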

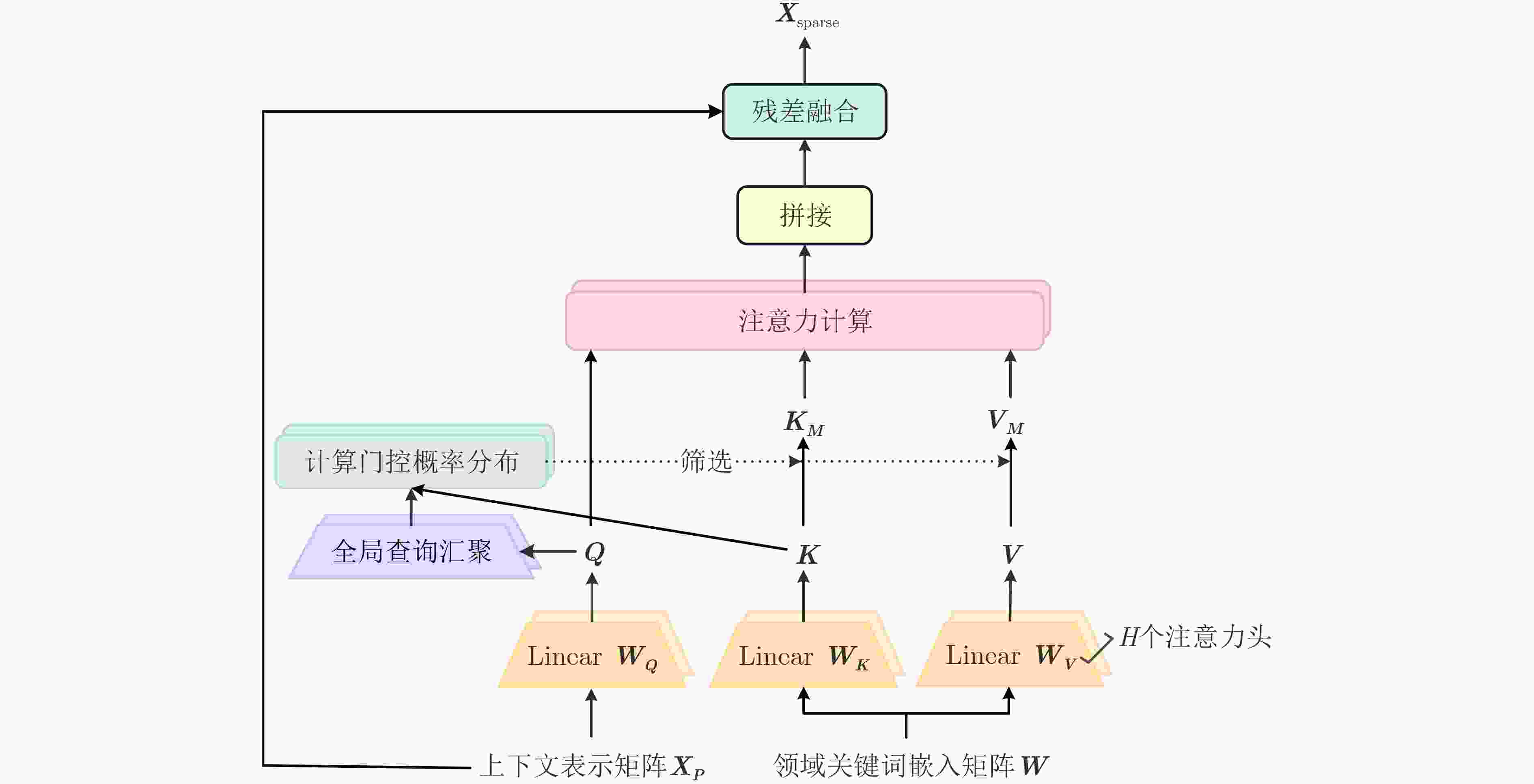

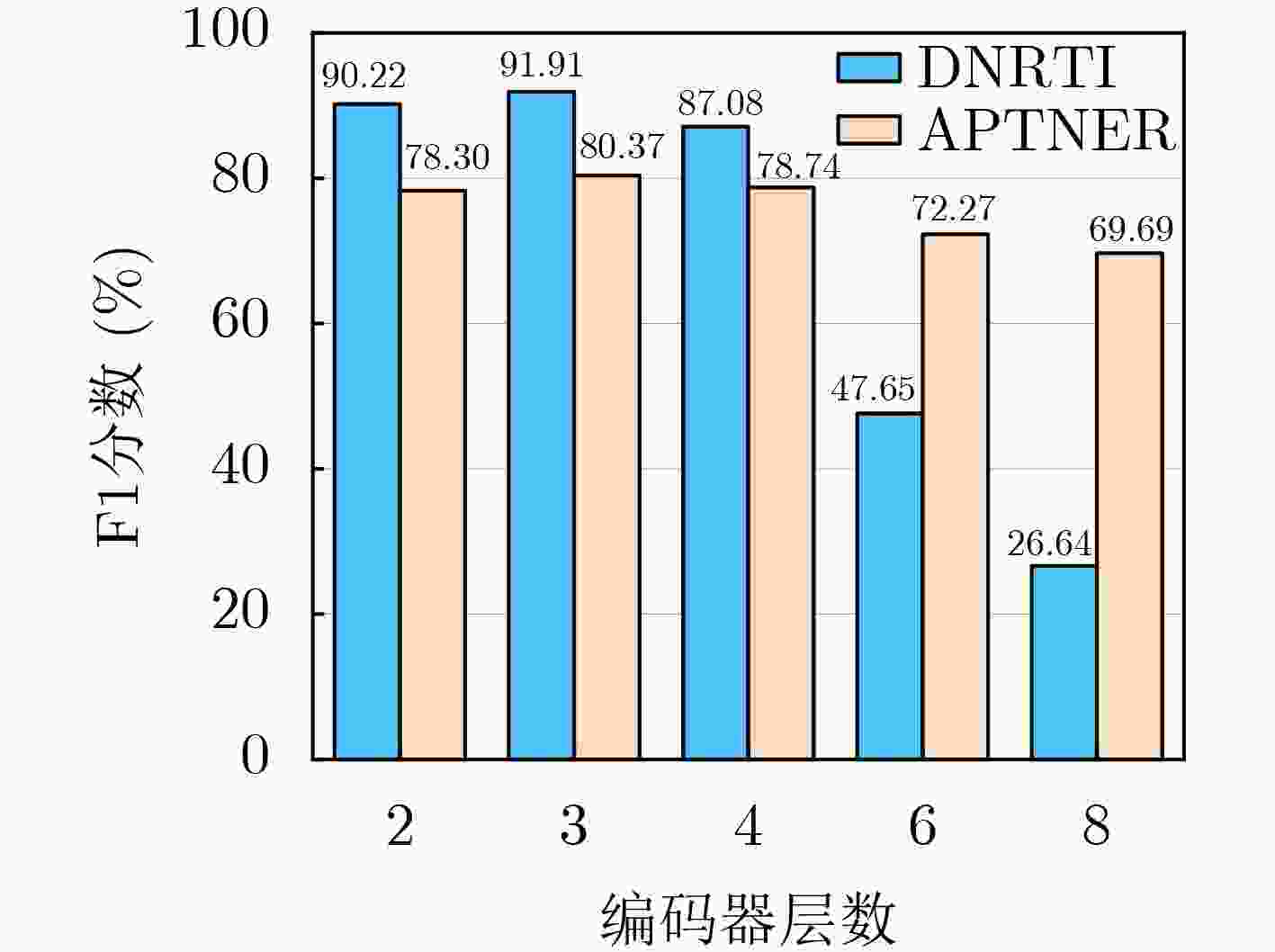

Objective: Named Entity Recognition (NER) in cybersecurity is a fundamental technology supporting threat intelligence analysis, vulnerability management, and security incident response. The field faces dense technical terminology, scarce labeled data, shifting entity categories, and highly complex semantic features, which leave traditional deep learning models and existing Large Language Models (LLMs) inadequate in domain adaptability and semantic fusion. To address these issues while meeting the need for lightweight deployment, this paper constructs a cybersecurity NER approach that enhances domain semantic representation, improves the recognition of rare entities, and suits low-resource environments, providing a reliable technical path for intelligent threat analysis in cybersecurity scenarios.

Methods: To handle the complex semantic features of cybersecurity texts, this paper proposes a semantically enhanced, lightweight, LLM-adaptable cybersecurity NER approach. The approach uses LLM2Vec to convert the decoder of a large model into a bidirectional semantic encoder and combines it with Low-Rank Adaptation (LoRA) fine-tuning, preserving deep semantic encoding capability while significantly reducing the number of parameters updated. To cope with sparse keywords and severe noise in cybersecurity texts, a sparse gated attention mechanism strengthens keyword-focused feature extraction by dynamically selecting high-contribution cybersecurity terms through global gating and sparse inference. A SecRoBERTa-based semantic enhancement component uses a domain-pre-trained model to generate similar-word embeddings, which improves feature robustness in small-sample scenarios and eases the recognition of out-of-vocabulary words and low-frequency terms. Finally, a Masked Conditional Random Field (MCRF) constrains label transitions so that output sequences are BIO-compliant, yielding robust and consistent entity boundary prediction.

Results and Discussions: Extensive experiments were conducted on two public cybersecurity datasets, DNRTI and APTNER. The proposed approach achieved an F1 score of 91.91% on DNRTI, surpassing the previous state-of-the-art model by 2.14 percentage points. On APTNER it reached an F1 score of 80.37%, outperforming the best baseline by 2.97 percentage points. Ablation studies confirmed the contribution of each key component: on DNRTI, the sparse gated attention mechanism improved F1 by 3.57 percentage points over standard multi-head attention, the semantic enhancement module contributed a 2.32-point F1 gain, and the MCRF layer provided a 10.63-point F1 improvement over a traditional Conditional Random Field (CRF). The model also demonstrated efficient training and inference, in line with its lightweight design goals.

Conclusions: This paper proposes a lightweight LLM adaptation approach for NER in the cybersecurity domain that addresses the limitations of existing LLM-based NER methods in domain adaptation and rare entity recognition. By integrating LLM2Vec and LoRA for lightweight fine-tuning, a sparse gated attention mechanism for domain feature fusion, and a SecRoBERTa-based semantic enhancement component for similar-word precomputation, the approach achieves high performance on the DNRTI and APTNER datasets. The research provides an efficient technical path for NER in low-resource cybersecurity scenarios and offers strong support for downstream tasks such as automated threat intelligence analysis.
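The masked CRF step described in the Methods can be illustrated with a minimal sketch. It assumes the standard BIO rule that an `I-X` tag may only follow `B-X` or `I-X` of the same type. The label set, the penalty value `NEG`, and the helper names `is_legal` and `mask_transitions` are hypothetical choices made for illustration and are not taken from the paper.

```python
# Illustrative sketch only: labels, NEG, and function names are assumptions,
# not the paper's implementation.
NEG = -1e4  # large negative score that effectively forbids a transition


def is_legal(prev: str, curr: str) -> bool:
    """Return True if the transition prev -> curr is valid under BIO tagging."""
    if curr.startswith("I-"):
        entity_type = curr[2:]
        # I-X may only continue an entity of the same type X.
        return prev in ("B-" + entity_type, "I-" + entity_type)
    # "O" and any "B-*" tag may follow every label.
    return True


def mask_transitions(labels, scores):
    """Copy a transition score matrix, replacing illegal entries with NEG."""
    return [
        [scores[i][j] if is_legal(prev, curr) else NEG
         for j, curr in enumerate(labels)]
        for i, prev in enumerate(labels)
    ]


labels = ["O", "B-Tool", "I-Tool", "B-Org", "I-Org"]
raw = [[0.0] * len(labels) for _ in labels]  # placeholder learned scores
masked = mask_transitions(labels, raw)
```

With the mask applied, decoding can no longer pick a path such as `O -> I-Tool` or `B-Org -> I-Tool`, because those transitions carry a prohibitive score. A complete version would also forbid sequences that start with an `I-` tag.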

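Table 1 Core parameter settings

| Parameter | DNRTI | APTNER |
| --- | --- | --- |
| Maximum learning rate | 1e-4 | 2e-5 |
| Batch size | 32 | 16 |
| Optimizer | AdamW | AdamW |
| Maximum token sequence length | 200 | 200 |
| Hidden dimension | 256 | 256 |
| Number of encoder layers | 3 | 3 |
| Attention heads in the sparse gated attention mechanism | 4 | 6 |
| Attention heads in the encoder | 4 | 8 |

Table 2 Comparison of the proposed method with other NER methods (%)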

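| Dataset | Method | Precision | Recall | F1 score |
| --- | --- | --- | --- | --- |
| DNRTI | BNER[24] (2025) | 80.16 | 81.16 | 80.66 |
| DNRTI | TISCG[25] (2024) | 86.16 | 86.86 | 86.51 |
| DNRTI | CTERMRFRAT[26] (2024) | - | - | 88.31 |
| DNRTI | UTERMMF[27] (2023) | 90.50 | 88.34 | 89.41 |
| DNRTI | DCR-CharNet-TBDN[11] (SOTA, 2025) | 90.03 | 89.51 | 89.77 |
| DNRTI | Proposed method | 93.19 | 90.66 | 91.91 |
| APTNER | SecRoberta[28] (2025) | 54.69 | 61.87 | 58.06 |
| APTNER | SecureBERT[28] (2025) | 60.76 | 67.79 | 64.08 |
| APTNER | GLM + contrastive learning[29] (2025) | - | - | 65.52 |
| APTNER | BERT+BiLSTM+CRF[30] (SOTA, 2024) | 80.20 | 74.80 | 77.40 |
| APTNER | Proposed method | 80.35 | 80.40 | 80.37 |

Table 3 Prediction results per entity category on the DNRTI dataset (%)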

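| Label | Precision | Recall | F1 score |
| --- | --- | --- | --- |
| HackOrg | 95.04 | 92.94 | 93.98 |
| OffAct | 70.77 | 100.00 | 82.88 |
| SamFile | 89.86 | 100.00 | 94.66 |
| SecTeam | 92.49 | 97.92 | 95.13 |
| Tool | 85.16 | 100.00 | 91.99 |
| Time | 100.00 | 96.72 | 98.33 |
| Purp | 84.70 | 94.61 | 89.38 |
| Area | 99.06 | 76.64 | 86.42 |
| Idus | 100.00 | 100.00 | 100.00 |
| Org | 100.00 | 98.00 | 98.99 |
| Way | 30.67 | 95.83 | 46.47 |
| Exp | 100.00 | 87.17 | 93.15 |
| Features | 85.84 | 95.02 | 90.20 |

Table 4 Prediction results per entity category on the APTNER dataset (%)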

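| Label | Precision | Recall | F1 score |
| --- | --- | --- | --- |
| TOOL | 69.60 | 73.54 | 71.52 |
| MAL | 81.48 | 72.78 | 76.88 |
| APT | 85.69 | 82.73 | 84.18 |
| TIME | 78.48 | 84.09 | 81.19 |
| LOC | 83.27 | 88.58 | 85.84 |
| SECTEAM | 76.55 | 81.99 | 79.18 |
| IDTY | 60.52 | 72.71 | 66.06 |
| FILE | 82.49 | 74.91 | 78.52 |
| PROT | 72.22 | 82.98 | 77.23 |
| ACT | 70.54 | 63.20 | 66.67 |
| OS | 75.00 | 84.00 | 79.25 |
| DOM | 82.61 | 88.37 | 85.39 |
| VULID | 97.06 | 100.00 | 98.51 |
| SHA1 | 100.00 | 100.00 | 100.00 |
| SHA2 | 100.00 | 96.97 | 98.46 |
| EMAIL | 100.00 | 100.00 | 100.00 |
| IP | 95.65 | 91.67 | 93.62 |
| ENCR | 75.00 | 90.00 | 81.82 |
| VULNAME | 74.32 | 76.39 | 75.34 |
| MD5 | 94.74 | 100.00 | 97.30 |
| URL | 63.64 | 70.00 | 66.67 |

Table 5 Ablation results for positional embedding (%)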

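| Dataset | Method | Precision | Recall | F1 score |
| --- | --- | --- | --- | --- |
| DNRTI | Proposed method | 93.19 | 90.66 | 91.91 |
| DNRTI | w/o positional embedding | 88.07 | 88.08 | 88.07 |
| APTNER | Proposed method | 80.35 | 80.40 | 80.37 |
| APTNER | w/o positional embedding | 76.09 | 78.27 | 77.16 |

Table 6 Ablation results for keyword embedding (%)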

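| Dataset | Method | Precision | Recall | F1 score |
| --- | --- | --- | --- | --- |
| DNRTI | Keyword embedding with sparse gated attention | 93.19 | 90.66 | 91.91 |
| DNRTI | Keyword embedding with multi-head self-attention | 88.79 | 87.90 | 88.34 |
| DNRTI | No keyword embedding | 88.62 | 86.24 | 87.41 |
| APTNER | Keyword embedding with sparse gated attention | 80.35 | 80.40 | 80.37 |
| APTNER | Keyword embedding with multi-head self-attention | 77.28 | 79.08 | 78.17 |
| APTNER | No keyword embedding | 75.44 | 77.01 | 76.22 |

Table 7 Ablation results for the semantic enhancement component (%)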

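| Dataset | Method | Precision | Recall | F1 score |
| --- | --- | --- | --- | --- |
| DNRTI | Proposed method | 93.19 | 90.66 | 91.91 |
| DNRTI | w/o semantic enhancement | 91.58 | 87.68 | 89.59 |
| APTNER | Proposed method | 80.35 | 80.40 | 80.37 |
| APTNER | w/o semantic enhancement | 78.78 | 78.31 | 78.54 |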
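The F1 columns in the tables can be sanity-checked against their precision and recall columns. The sketch below assumes each reported F1 is the harmonic mean of the precision and recall in the same row, and allows a tolerance of 0.01 for rounding. The helper `f1_score` and the dictionary names are illustrative, and the three rows are copied from Tables 2 and 3.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (both in percent)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) taken from the tables in this section.
reported = {
    "DNRTI, proposed method": (93.19, 90.66, 91.91),
    "APTNER, proposed method": (80.35, 80.40, 80.37),
    "DNRTI, label Way": (30.67, 95.83, 46.47),
}

# True where the recomputed F1 matches the reported value within rounding.
checks = {
    name: abs(f1_score(p, r) - f1) < 0.01
    for name, (p, r, f1) in reported.items()
}
```

The `Way` row shows why F1 is more informative than recall alone: recall of 95.83% paired with precision of 30.67% still yields an F1 below 50%.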

[1] CHEN Shudong and OUYANG Xiaoye. Overview of named entity recognition technology[J]. Radio Communications Technology, 2020, 46(3): 251–260. doi: 10.3969/j.issn.1003-3114.2020.03.001. (in Chinese)
[2] SATVAT K, GJOMEMO R, and VENKATAKRISHNAN V N. Extractor: Extracting attack behavior from threat reports[C]. Proceedings of 2021 IEEE European Symposium on Security and Privacy (EuroS&P), Vienna, Austria, 2021: 598–615. doi: 10.1109/EuroSP51992.2021.00046.
[3] GAO Chen, ZHANG Xuan, HAN Mengting, et al. A review on cyber security named entity recognition[J]. Frontiers of Information Technology & Electronic Engineering, 2021, 22(9): 1153–1168. doi: 10.1631/FITEE.2000286.
[4] LI Yongbin, LIU Lian, and ZHENG Jie. A method for named entity recognition in military intelligence domain using large language models[J]. Journal of Electronics & Information Technology, 2026, 48(2): 662–672. doi: 10.11999/JEIT250764. (in Chinese)
[5] HU Chenxi, WU Tao, LIU Chunsheng, et al. Joint contrastive learning and belief rule base for named entity recognition in cybersecurity[J]. Cybersecurity, 2024, 7(1): 19. doi: 10.1186/s42400-024-00206-y.
[6] BEHNAMGHADER P, ADLAKHA V, MOSBACH M, et al. LLM2Vec: Large language models are secretly powerful text encoders[J]. arXiv preprint arXiv:2404.05961, 2024. doi: 10.48550/arXiv.2404.05961.
[7] HU E J, SHEN Yelong, WALLIS P, et al. LoRA: Low-rank adaptation of large language models[C]. Proceedings of the 10th International Conference on Learning Representations, 2022.
[8] YI Feng, JIANG Bo, WANG Lu, et al. Cybersecurity named entity recognition using multi-modal ensemble learning[J]. IEEE Access, 2020, 8: 63214–63224. doi: 10.1109/ACCESS.2020.2984582.
[9] MA Pingchuan, JIANG Bo, LU Zhigang, et al. Cybersecurity named entity recognition using bidirectional long short-term memory with conditional random fields[J]. Tsinghua Science and Technology, 2021, 26(3): 259–265. doi: 10.26599/TST.2019.9010033.
[10] YI Junkai, LIU Yuan, JIANG Zhongbai, et al. Text command intelligent understanding for cybersecurity testing[J]. Electronics, 2024, 13(21): 4330. doi: 10.3390/electronics13214330.
[11] HU Ze, LI Wenjun, and YANG Hongyu. A cybersecurity entity recognition approach based on character representation learning and temporal boundary diffusion[J]. Journal of Electronics & Information Technology, 2025, 47(5): 1554–1568. doi: 10.11999/JEIT240953. (in Chinese)
[12] ZHANG Yunlong, LIU Jingju, ZHONG Xiaofeng, et al. SecLMNER: A framework for enhanced named entity recognition in multi-source cybersecurity data using large language models[J]. Expert Systems with Applications, 2025, 271: 126651. doi: 10.1016/j.eswa.2025.126651.
[13] ZHANG Hao, WU Tingmin, ZHU Tianqing, et al. CyberLLaMA: A fine-tuned large language model for cybersecurity named entity recognition[J]. Knowledge-Based Systems, 2025, 328: 114183. doi: 10.1016/j.knosys.2025.114183.
[14] VASWANI A, SHAZEER N, PARMAR N, et al. Attention is all you need[C]. Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, USA, 2017: 6000–6010.
[15] Hugging Face. jackaduma/SecRoBERTa[EB/OL]. https://huggingface.co/jackaduma/SecRoBERTa, 2024.
[16] CAI Hua, RAN Yue, FU Qiang, et al. Multi-granularity text perception and hierarchical feature interaction method for visual grounding[J]. Journal of Electronics & Information Technology, 2025, 47(11): 4594–4605. doi: 10.11999/JEIT250387. (in Chinese)
[17] JIANG Xiaobo, DENG Hanke, MO Zhijie, et al. Design of transformer accelerator with regular compression model and flexible architecture[J]. Journal of Electronics & Information Technology, 2024, 46(3): 1079–1088. doi: 10.11999/JEIT230188. (in Chinese)
[18] YE Tianzhu, DONG Li, XIA Yuqing, et al. Differential transformer[J]. arXiv preprint arXiv:2410.05258, 2024. doi: 10.48550/arXiv.2410.05258.
[19] SU Jianlin, AHMED M, LU Yu, et al. RoFormer: Enhanced transformer with rotary position embedding[J]. Neurocomputing, 2024, 568: 127063. doi: 10.1016/j.neucom.2023.127063.
[20] LIU Peipei, LI Hong, WANG Zuoguang, et al. Multi-features based semantic augmentation networks for named entity recognition in threat intelligence[C]. Proceedings of the 26th International Conference on Pattern Recognition (ICPR), Montreal, Canada, 2022: 1557–1563. doi: 10.1109/ICPR56361.2022.9956373.
[21] WEI Tianwen, QI Jianwei, HE Shenghuan, et al. Masked conditional random fields for sequence labeling[C]. Proceedings of 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021: 2024–2035. doi: 10.18653/v1/2021.naacl-main.163.
[22] WANG Xuren, LIU Xinpei, AO Shengqin, et al. DNRTI: A large-scale dataset for named entity recognition in threat intelligence[C]. Proceedings of 2020 IEEE 19th International Conference on Trust, Security and Privacy in Computing and Communications (TrustCom), Guangzhou, China, 2020: 1842–1848. doi: 10.1109/TrustCom50675.2020.00252.
[23] WANG Xuren, HE Songheng, XIONG Zihan, et al. APTNER: A specific dataset for NER missions in cyber threat intelligence field[C]. Proceedings of 2022 IEEE 25th International Conference on Computer Supported Cooperative Work in Design (CSCWD), Hangzhou, China, 2022: 1233–1238. doi: 10.1109/CSCWD54268.2022.9776031.
[24] CAI Yongxin, KANG Lei, LENG Tao, et al. BNER: A broad learning system-based named entity recognition method for cyber threat intelligence[C]. Proceedings of 2025 11th IEEE International Conference on Privacy Computing and Data Security (PCDS), Hakodate, Japan, 2025: 397–404. doi: 10.1109/PCDS65695.2025.00060.
[25] DU Chao, LIU Xuhong, MIAO Lin, et al. Threat intelligence named entity recognition based on global gated feature fusion[C]. Proceedings of 2024 6th International Conference on Internet of Things, Automation and Artificial Intelligence (IoTAAI), Guangzhou, China, 2024: 618–622. doi: 10.1109/IoTAAI62601.2024.10692655.
[26] WANG Peng and LIU Jingju. A cyber threat entity recognition method based on robust feature representation and adversarial training[C]. Proceedings of 2023 12th International Conference on Computing and Pattern Recognition, Qingdao, China, 2024: 255–259. doi: 10.1145/3633637.3633677.
[27] CHANG Yu, WANG Gang, ZHU Peng, et al. Research on unified cyber threat intelligence entity recognition method based on multiple features[C]. Proceedings of 2023 4th International Conference on Computers and Artificial Intelligence Technology (CAIT), Macau, China, 2023: 233–240. doi: 10.1109/CAIT59945.2023.10469250.
[28] ZHANG Yunlong, LIU Jingju, ZHONG Xiaofeng, et al. SecLMNER: A framework for enhanced named entity recognition in multi-source cybersecurity data using large language models[J]. Expert Systems with Applications, 2025, 271: 126651. doi: 10.1016/j.eswa.2025.126651.
[29] SUN Yuchen. Research and implemention of threat intelligence information extraction based on large language model[D]. [Master dissertation], Beijing University of Posts and Telecommunications, 2025. doi: 10.26969/d.cnki.gbydu.2025.002794. (in Chinese)
[30] WANG Yilei, SUN Xin, HAN Jiajia, et al. Techniques and implementation of high-quality threat intelligence acquisition from the dark web[J/OL]. https://doi.org/10.19678/j.issn.1000-3428.0068805, 2024. (in Chinese)
