A High-Performance Eye Tracking Method Based on Event Camera and Dual-Channel Differential Illumination


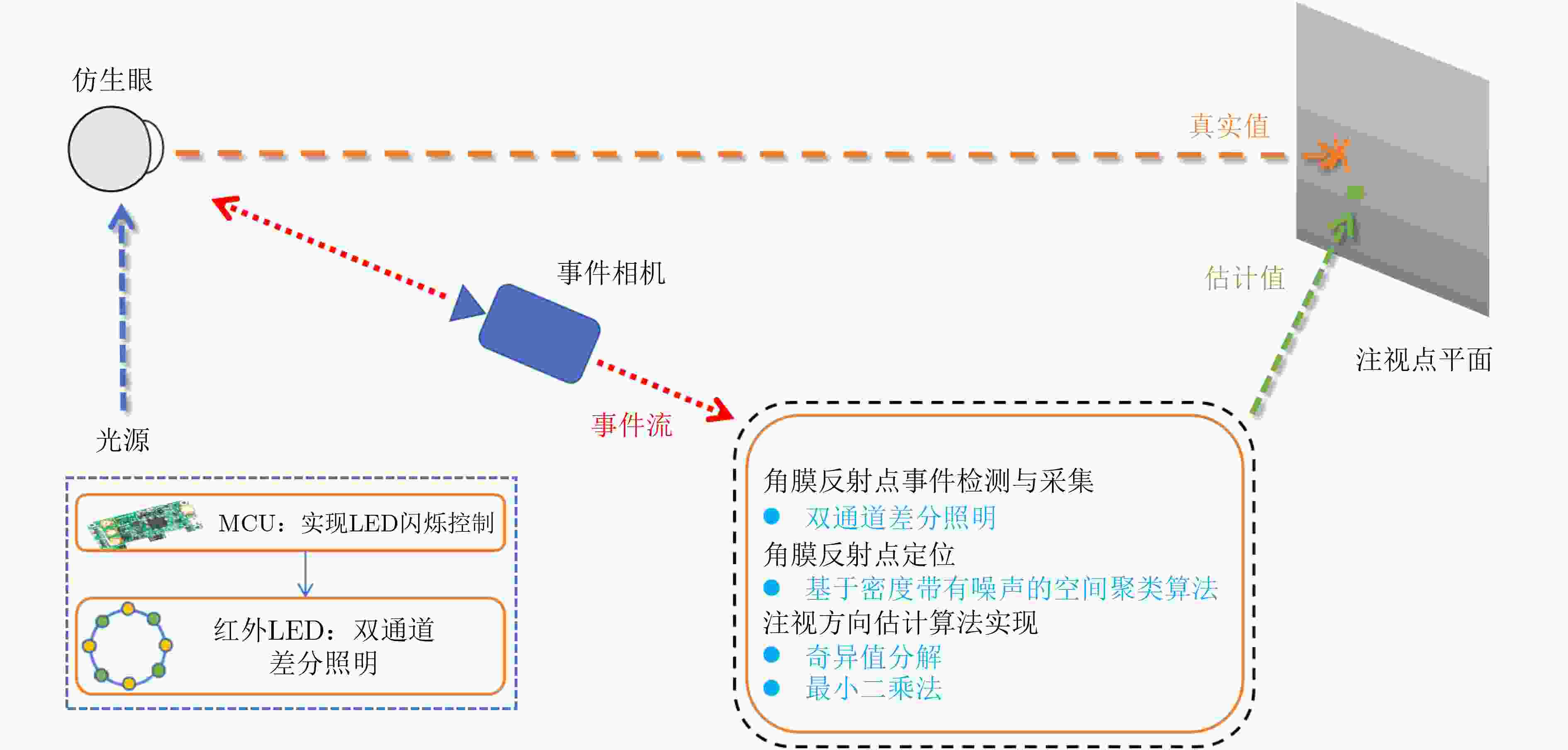

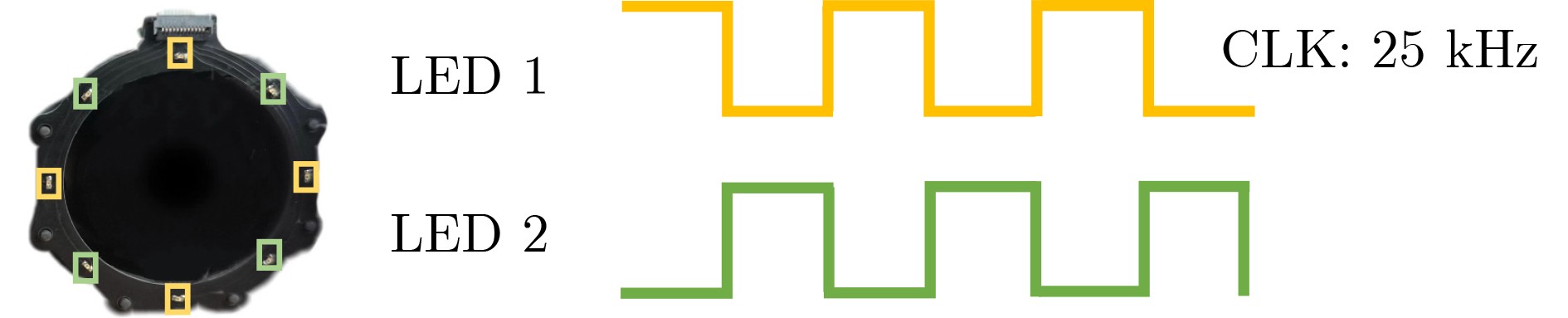

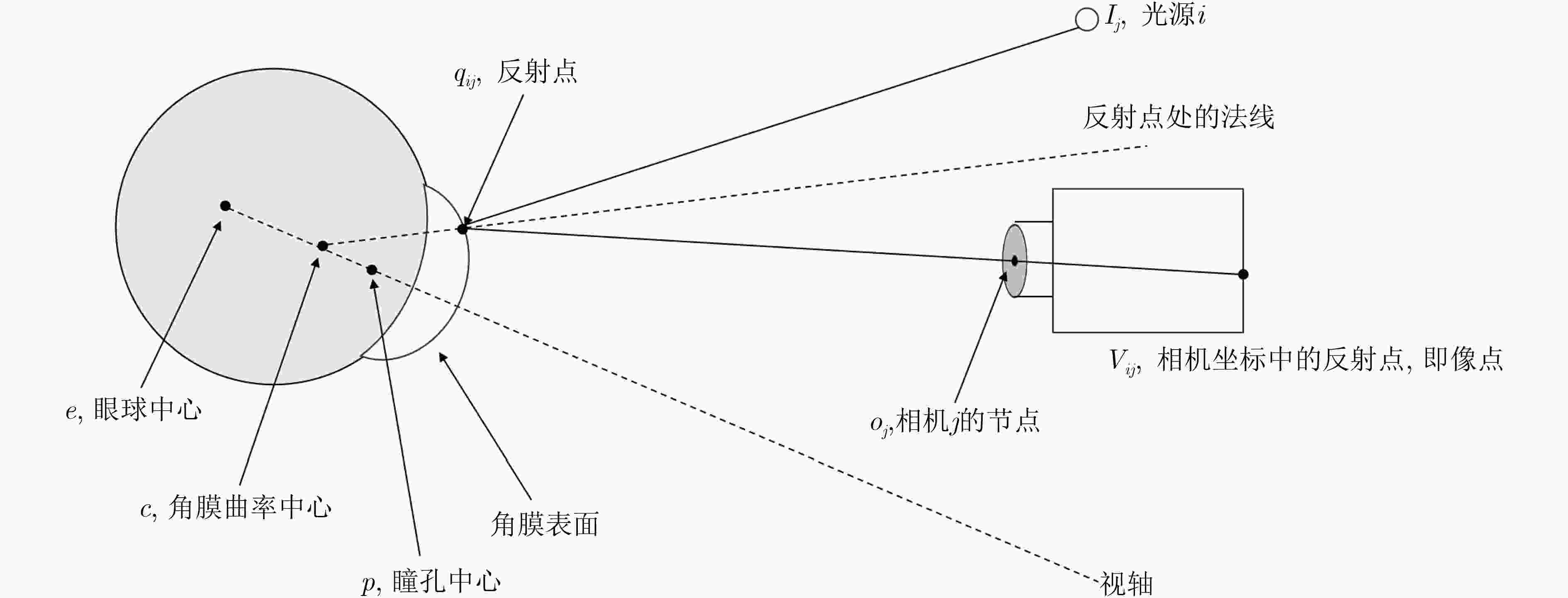

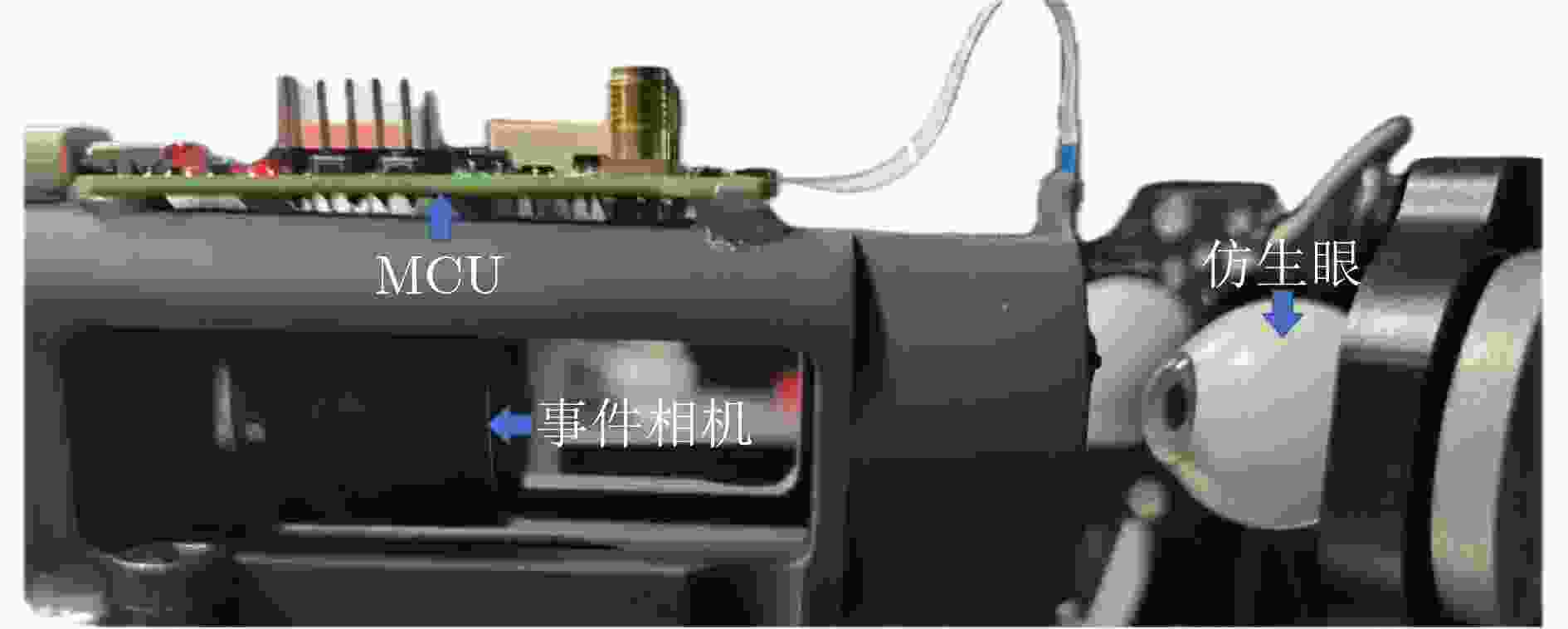

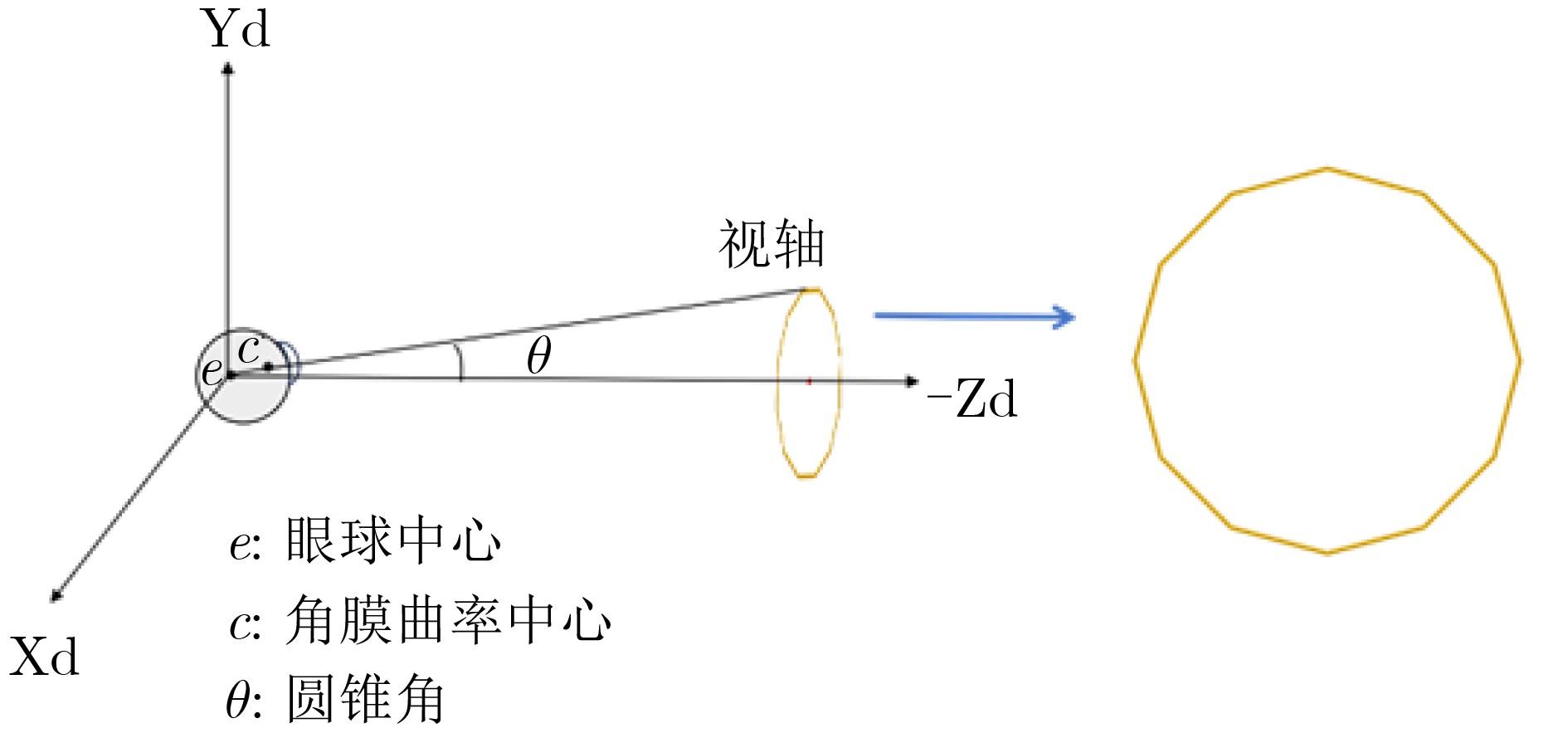

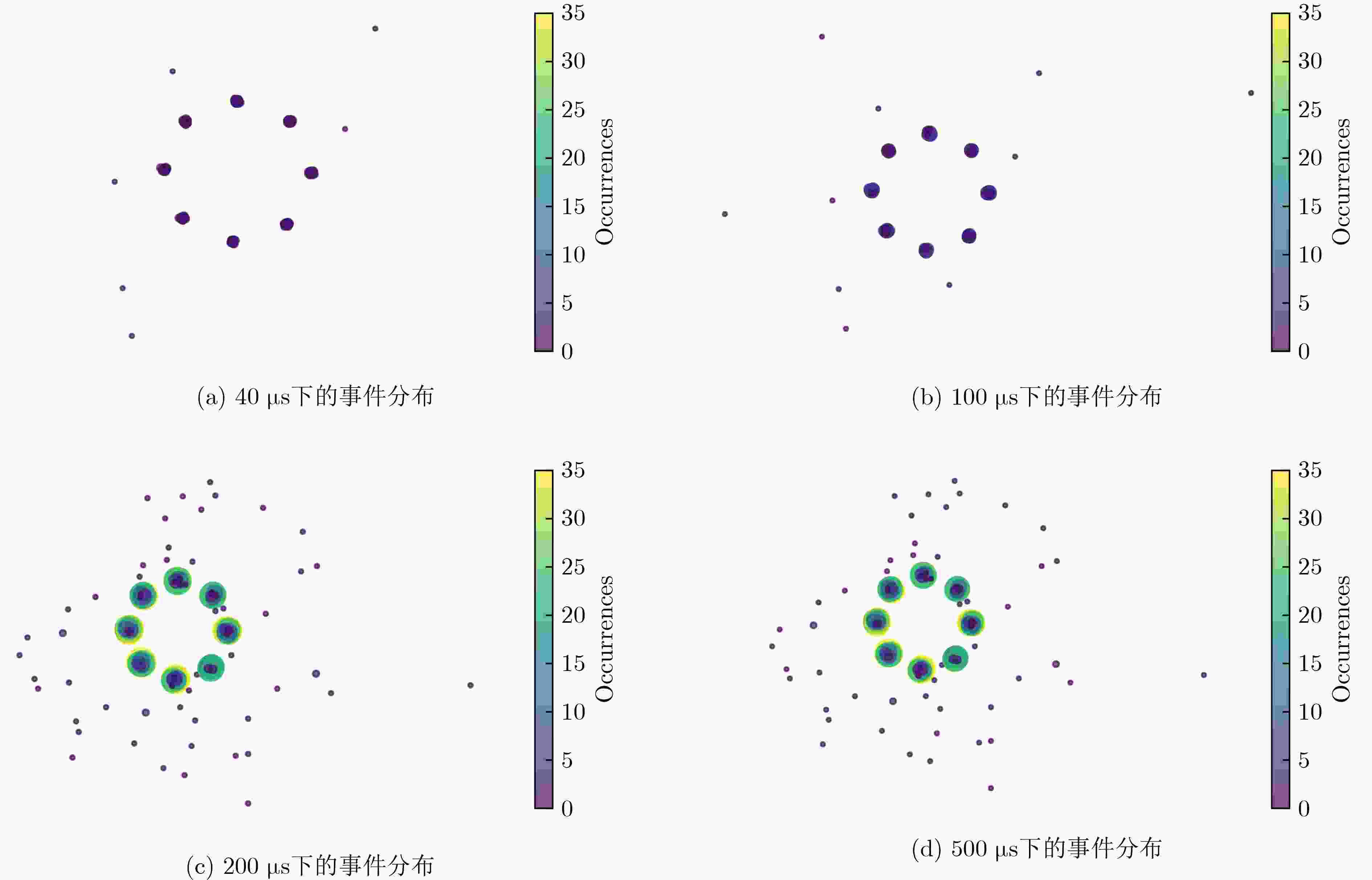

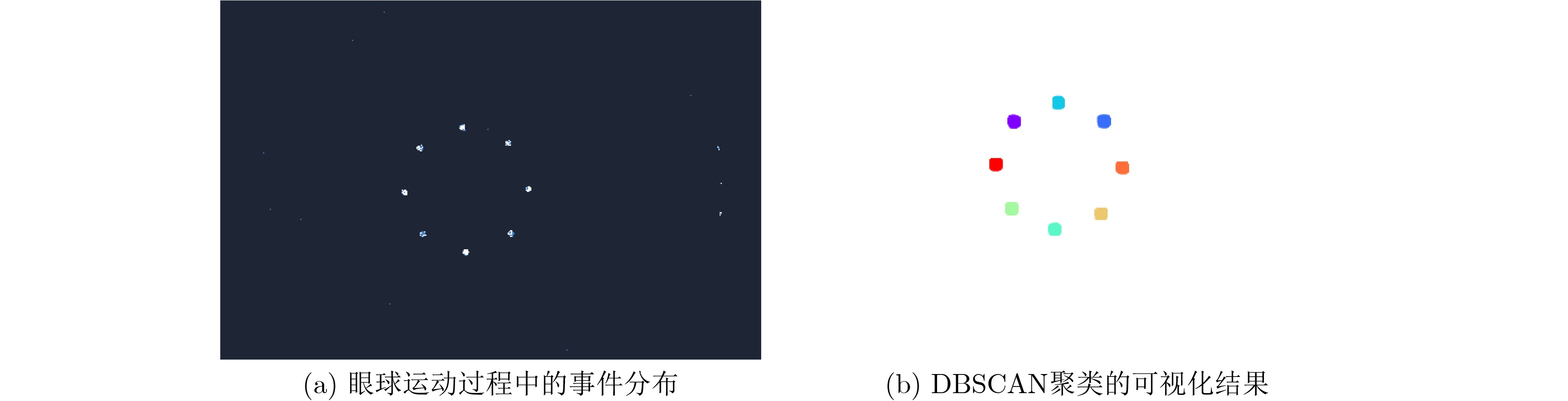

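Summary: To address the low accuracy of existing eye tracking techniques and their limited temporal resolution during high-speed eye movements, this paper proposes an eye tracking method based on an event camera and dual-channel differential illumination. Compared with conventional cameras, an event camera asynchronously outputs a stream of events describing brightness changes, and offers high temporal resolution, high dynamic range, and low latency. First, an event camera is used as the image sensor and combined with a dual-channel differential illumination strategy to raise the signal-to-noise ratio of corneal reflection events at high temporal resolution. Second, the Density-Based Spatial Clustering of Applications with Noise (DBSCAN) algorithm is introduced to reduce the localization bias caused by the large number of scattered noise points among the corneal reflection events, improving the localization accuracy of the corneal reflection points. Finally, a ray-tracing model of the eyeball is reconstructed in the world coordinate system, and the corneal reflection point coordinates are used with singular value decomposition (SVD) and the least-squares method to determine the corneal curvature center, from which the gaze direction is estimated. Experimental results on a biomimetic eye dataset show that the proposed method estimates gaze direction with an error below 1° at a temporal resolution of 25 kHz, providing a feasible technical path for next-generation high-performance eye tracking interaction systems.

Abstract: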

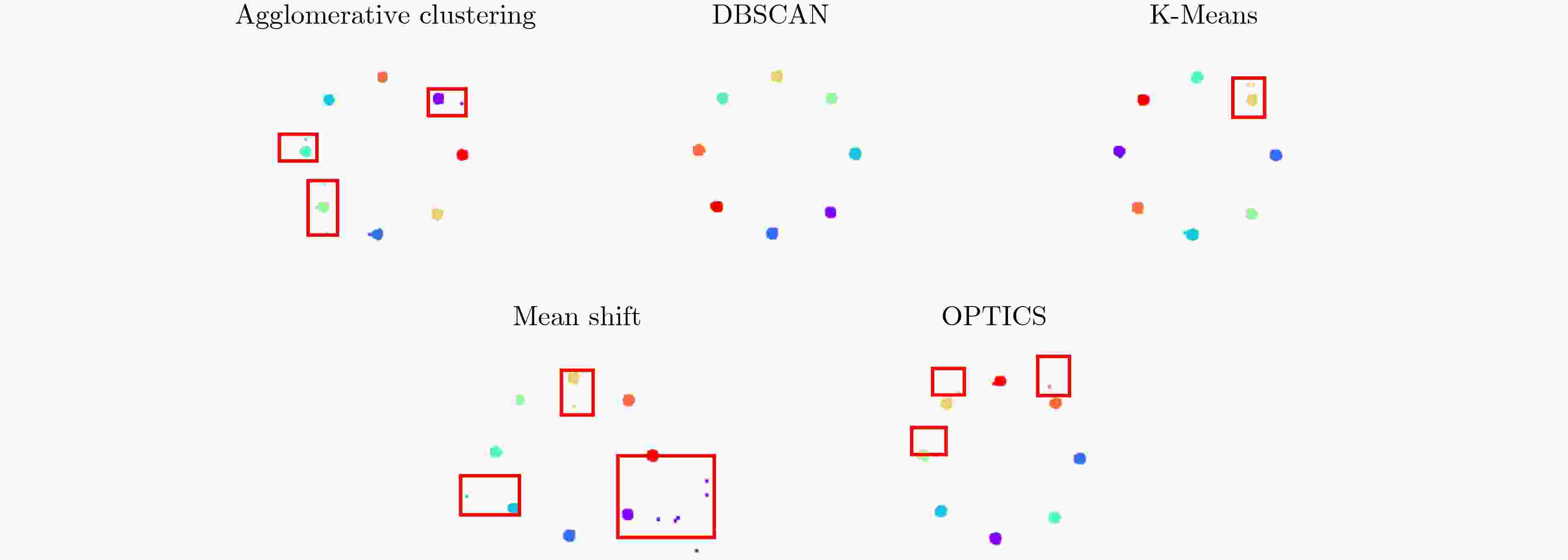

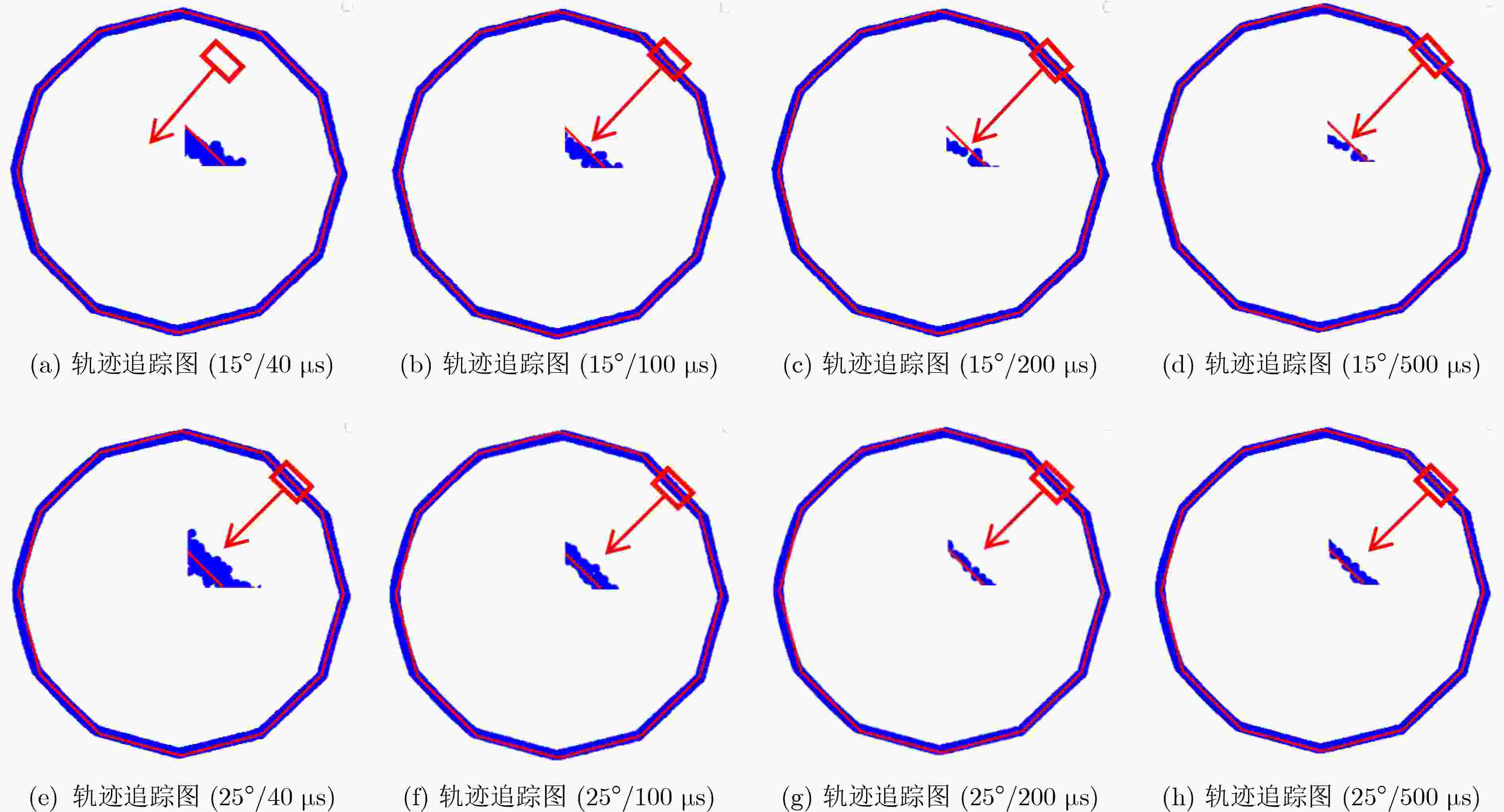

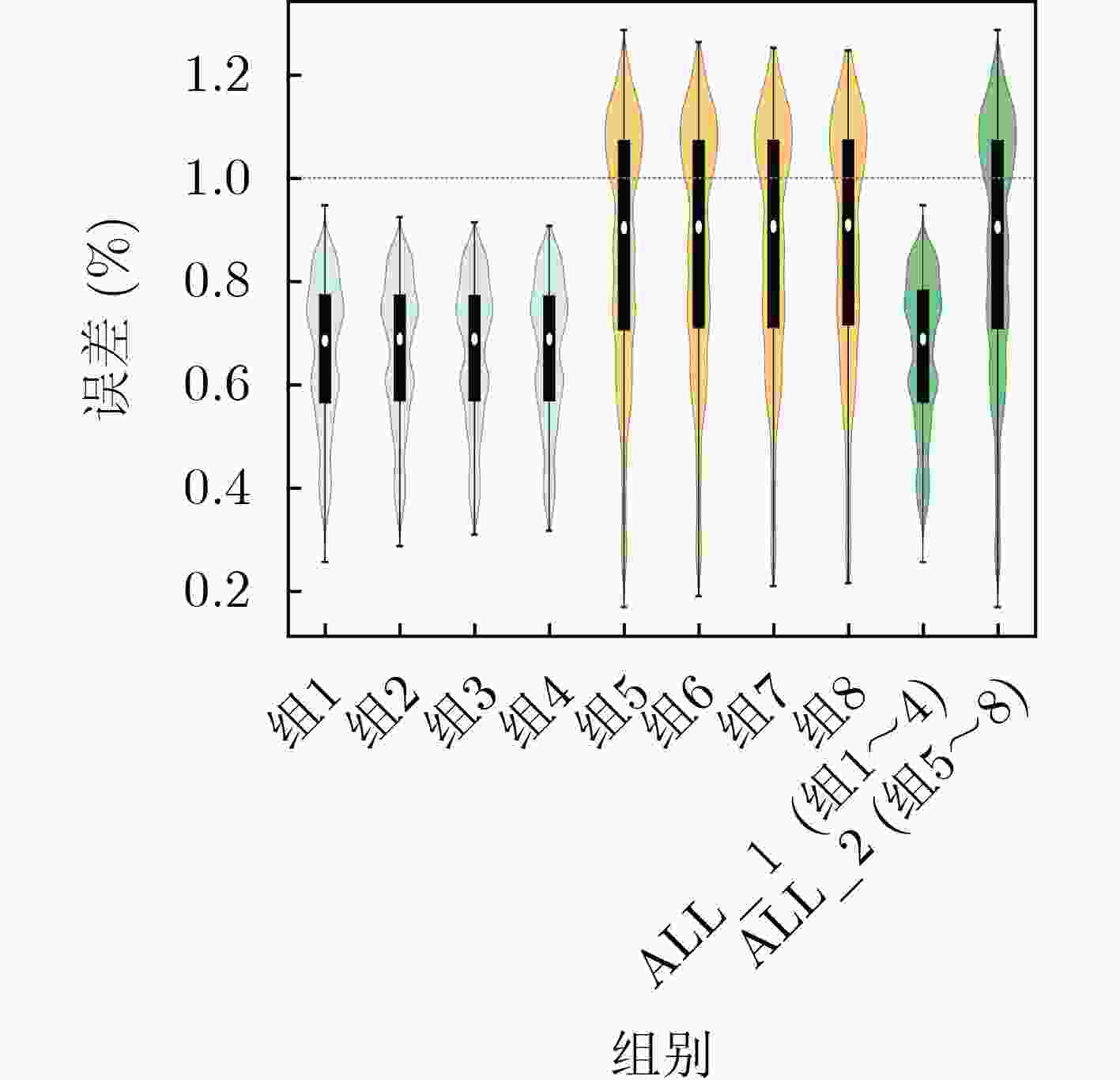

Objective: Eye tracking has become an essential technology in human–computer interaction, medical diagnostics, cognitive neuroscience, and augmented/virtual reality applications. However, traditional eye tracking systems suffer from two major limitations: low spatial accuracy and restricted temporal resolution, particularly during high-speed eye movements. These limitations hinder precise gaze estimation and reduce the reliability of real-time interactive systems. To address them, this research integrates an event camera with a dual-channel differential illumination strategy to enhance the signal-to-noise ratio of corneal reflection events. The Density-Based Spatial Clustering of Applications with Noise (DBSCAN) algorithm is introduced to localize the corneal reflection points accurately. The corneal reflection point coordinates are then combined with Singular Value Decomposition (SVD) and the least-squares method to determine the corneal curvature center, thereby improving the accuracy of gaze direction estimation. This research provides an efficient technical pathway for next-generation eye tracking systems and offers theoretical support for their deployment in complex interactive environments.

Methods: The proposed event-camera-based gaze tracking method processes asynchronous eye movement event data within a dual-channel differential illumination framework, improving gaze direction estimation accuracy under high-speed and dynamic conditions. First, the event camera asynchronously captures brightness-change events with microsecond-level temporal resolution, enabling precise tracking of rapid eye movements, while the dual-channel differential illumination mechanism suppresses redundant reflections and enhances the contrast of corneal reflection points. Second, the DBSCAN algorithm is applied to the event data to remove noise and improve the spatial localization accuracy of the corneal reflection features. Finally, a ray-tracing model is reconstructed, and SVD and least-squares fitting are used to determine the corneal curvature center, yielding robust, high-precision gaze direction estimation. Experimental results on a biomimetic eye movement dataset demonstrate that the proposed method achieves high temporal resolution, localization accuracy, and robustness in dynamic tracking scenarios.

Results and Discussions: Experiments demonstrate that the proposed method achieves a temporal resolution of 25 kHz (Fig. 6), far exceeding that of conventional cameras. Differential illumination significantly improves the signal-to-noise ratio of corneal reflection events. The DBSCAN algorithm localizes corneal reflection points more efficiently than K-Means, Agglomerative Clustering, Mean Shift, and OPTICS, achieving accurate results within 10 ms without requiring a predefined number of clusters (Fig. 8, Table 3). For gaze estimation, the proposed method maintains stable accuracy across sampling frequencies from 2 kHz to 25 kHz. At a 15° cone angle, the mean error (ME) and root mean square error (RMSE) are approximately 0.66° and 0.67°, respectively, while at 25° they increase slightly to 0.87° and 0.90° (Table 4). Compared with existing state-of-the-art (SOTA) gaze tracking methods, the proposed approach demonstrates superior overall performance in terms of both temporal resolution and accuracy (Table 5). Trajectory results (Fig. 9) show close alignment between estimated and ground truth gaze paths, and distribution analyses (Fig. 10) confirm that errors are concentrated below 1°.

Conclusions: This paper presents a novel eye tracking method that integrates an event camera with dual-channel differential illumination. The method achieves high temporal resolution (25 kHz), enhances event signal quality, and reduces localization errors, yielding gaze estimation errors of less than 1°. The proposed approach provides a reliable technical pathway for next-generation high-performance eye tracking systems. Future work should consider sensor noise modeling and computational optimization to further improve real-world applicability.
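The corneal reflection localization step described in the abstract can be illustrated with a small sketch. It is not the authors' implementation: it is a minimal pure-Python DBSCAN applied to event pixel coordinates, followed by a per-cluster centroid. The function names `dbscan` and `glint_centers`, the parameter values `eps` and `min_pts`, and the synthetic event coordinates are all assumptions made for illustration.

```python
import math
from collections import deque


def dbscan(points, eps, min_pts):
    """Return one label per 2D point: a cluster id (0, 1, ...) or -1 for noise."""
    n = len(points)
    labels = [None] * n  # None means "not visited yet"

    def neighbors(i):
        xi, yi = points[i]
        # Brute-force radius query; the point itself is included in the count.
        return [j for j in range(n)
                if math.hypot(points[j][0] - xi, points[j][1] - yi) <= eps]

    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # provisional noise; may become a border point later
            continue
        cluster += 1
        labels[i] = cluster
        queue = deque(seeds)
        while queue:
            j = queue.popleft()
            if labels[j] == -1:
                labels[j] = cluster  # noise reachable from a core point: border
            if labels[j] is not None:
                continue
            labels[j] = cluster
            nb = neighbors(j)
            if len(nb) >= min_pts:  # only core points expand the cluster
                queue.extend(nb)
    return labels


def glint_centers(points, eps=1.5, min_pts=4):
    """Centroid of every DBSCAN cluster; noise events are discarded."""
    labels = dbscan(points, eps, min_pts)
    clusters = {}
    for (x, y), lab in zip(points, labels):
        if lab >= 0:
            clusters.setdefault(lab, []).append((x, y))
    return [(sum(x for x, _ in pts) / len(pts),
             sum(y for _, y in pts) / len(pts))
            for _, pts in sorted(clusters.items())]


# Synthetic events (hypothetical): two dense 3x3 blobs plus three isolated noise events.
blob_a = [(x, y) for x in (9, 10, 11) for y in (9, 10, 11)]
blob_b = [(x, y) for x in (29, 30, 31) for y in (11, 12, 13)]
noise = [(0, 0), (50, 40), (20, 25)]
events = blob_a + blob_b + noise

labels = dbscan(events, eps=1.5, min_pts=4)
centers = glint_centers(events)
print(centers)
```

Because DBSCAN grows clusters from dense neighbourhoods, the number of reflection points does not have to be given in advance, and isolated events are labelled as noise rather than pulling the centroid away from the blob. A production pipeline would more likely use an indexed neighbour search than the quadratic radius query shown here.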

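Table 1 Event camera bias parameters

| \_off | \_on | fo | hpf | refr |
|---|---|---|---|---|
| 50 | 130 | 55 | 120 | 235 |

Table 2 Applications of ALMs in different domains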

Table 3 Computation time of different clustering algorithms on event data (ms)

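Table 4 Gaze accuracy

| Cone angle | System temporal resolution (kHz) | Estimation error ME (°) | Estimation error RMSE (°) |
|---|---|---|---|
| 15° | 25 | 0.6634 | 0.6765 |
| 15° | 10 | 0.6633 | 0.6776 |
| 15° | 5 | 0.6632 | 0.6776 |
| 15° | 2 | 0.6633 | 0.6775 |
| 25° | 25 | 0.8711 | 0.9029 |
| 25° | 10 | 0.8728 | 0.9040 |
| 25° | 5 | 0.8734 | 0.9046 |
| 25° | 2 | 0.8753 | 0.9063 |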
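The ME and RMSE columns above can be related to per-sample angular errors with a short sketch. The source does not state the exact formulas, so this assumes that each error is the angle between the estimated and ground-truth gaze vectors, that ME is the arithmetic mean of those angles, and that RMSE is their root mean square. The function names and sample vectors are hypothetical.

```python
import math


def angular_error_deg(g_est, g_true):
    """Angle in degrees between an estimated and a ground-truth gaze vector."""
    dot = sum(a * b for a, b in zip(g_est, g_true))
    norm = (math.sqrt(sum(a * a for a in g_est))
            * math.sqrt(sum(b * b for b in g_true)))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def me_rmse(errors):
    """Mean error and root mean square error of non-negative angular errors."""
    me = sum(errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return me, rmse


# Hypothetical per-sample errors in degrees, not taken from the paper.
sample_errors = [0.6, 0.8]
me, rmse = me_rmse(sample_errors)

# Sanity check of the angle helper on two orthogonal directions.
right_angle = angular_error_deg((1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
print(me, rmse, right_angle)
```

Under these definitions RMSE is never smaller than ME, and the gap grows with the spread of the errors. The small gaps in Table 4 (for example 0.6634° against 0.6765°) would therefore indicate tightly concentrated errors, if the paper uses the same definitions.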

