Phase Shift-Based Covert Backdoor Attack Strategy in Deep Neural Networks

-

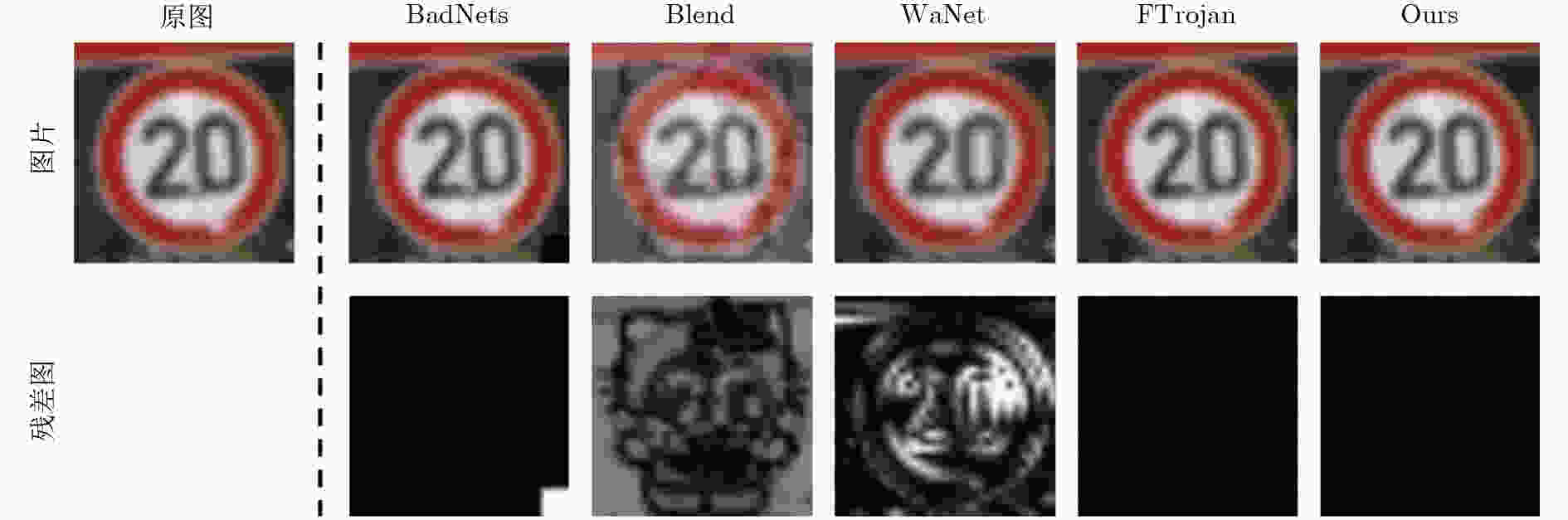

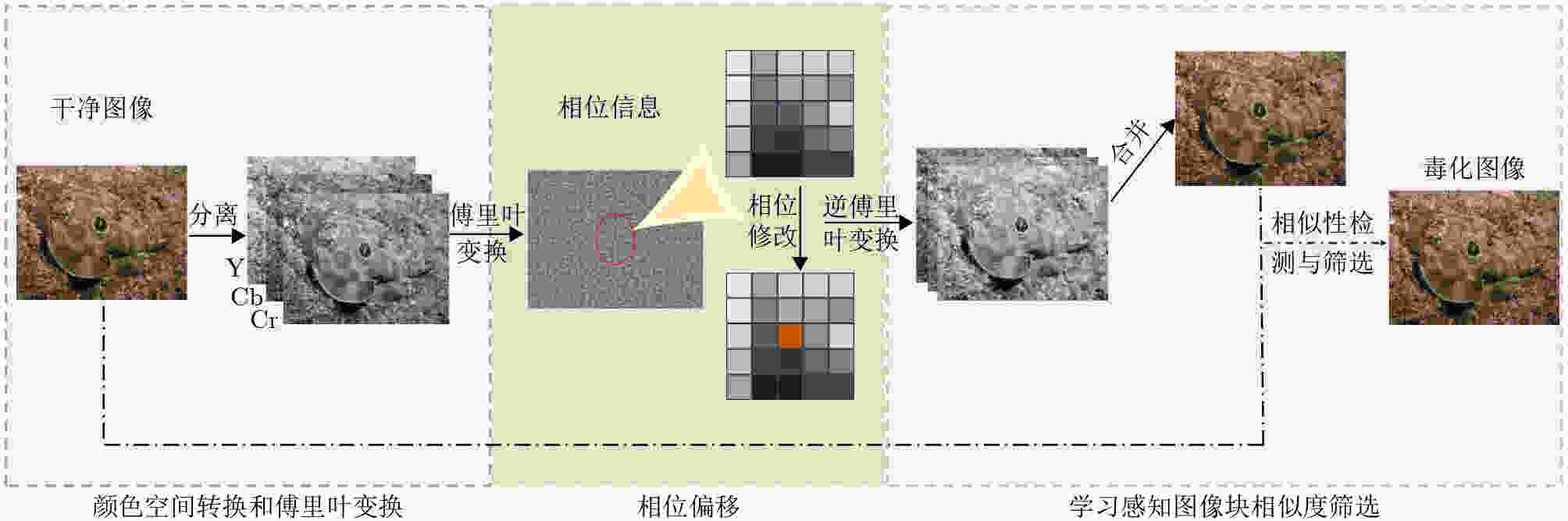

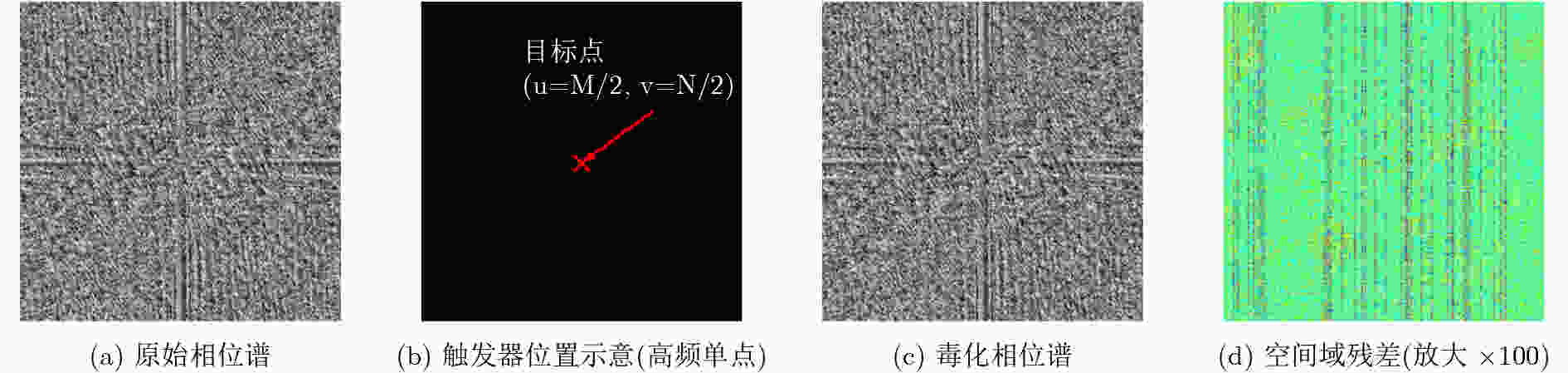

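Abstract: Backdoor attacks pose a major security threat to deep neural networks (DNNs). A backdoored model produces a preset erroneous output when it encounters an input carrying a specific trigger, while retaining baseline performance on clean samples. Existing studies have explored trigger design in both the spatial and frequency domains, but most methods sacrifice trigger imperceptibility to secure a high attack success rate (ASR). This paper proposes a frequency-domain backdoor attack based on phase shift (FDPS). The method maps an image to the frequency domain with the discrete Fourier transform (DFT) and embeds the trigger by applying phase perturbations to selected frequency components. Specifically, FDPS preferentially fine-tunes mid-to-high-frequency phase components so as to minimize changes to the amplitude spectrum and avoid introducing perceptible artifacts. Because phase governs the relative displacement of the sinusoidal components, such perturbations blend naturally with the visual semantics and markedly improve stealthiness. Compared with conventional amplitude-perturbation strategies, phase shifting preserves the global structure of the image and more effectively evades image-based defense and detection mechanisms. Experiments show that, compared with baseline backdoor attacks such as BadNets, Blend, WaNet, and Ftrojan, FDPS achieves superior performance in ASR, clean-sample accuracy, and the structural similarity index (SSIM). Furthermore, on the GTSRB dataset, poisoning only 2% of the training samples is enough to reach a 99% ASR, which significantly lowers the sample requirement and technical barrier of the attack and demonstrates stronger robustness and adaptability across diverse attack scenarios.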

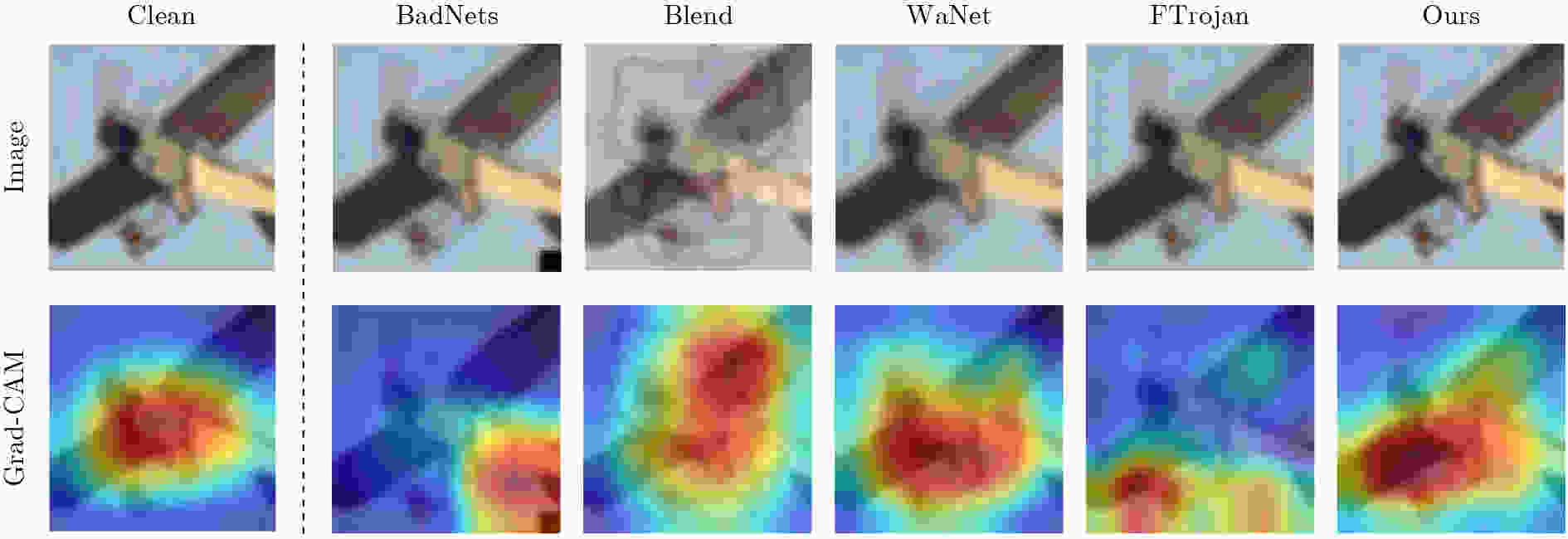

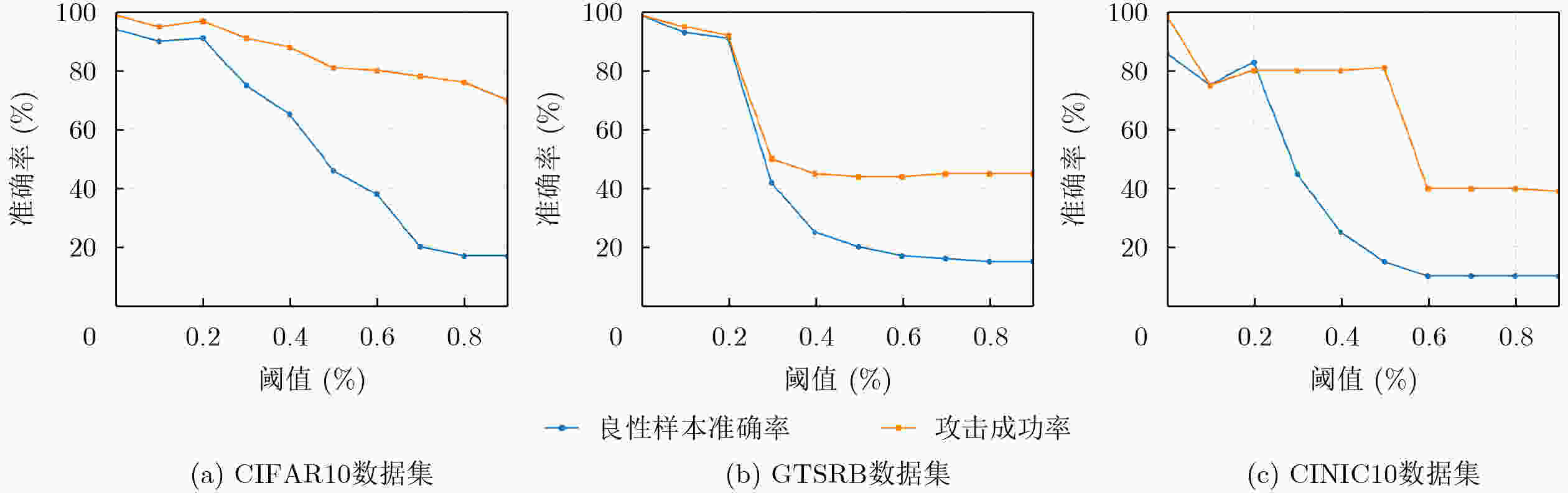

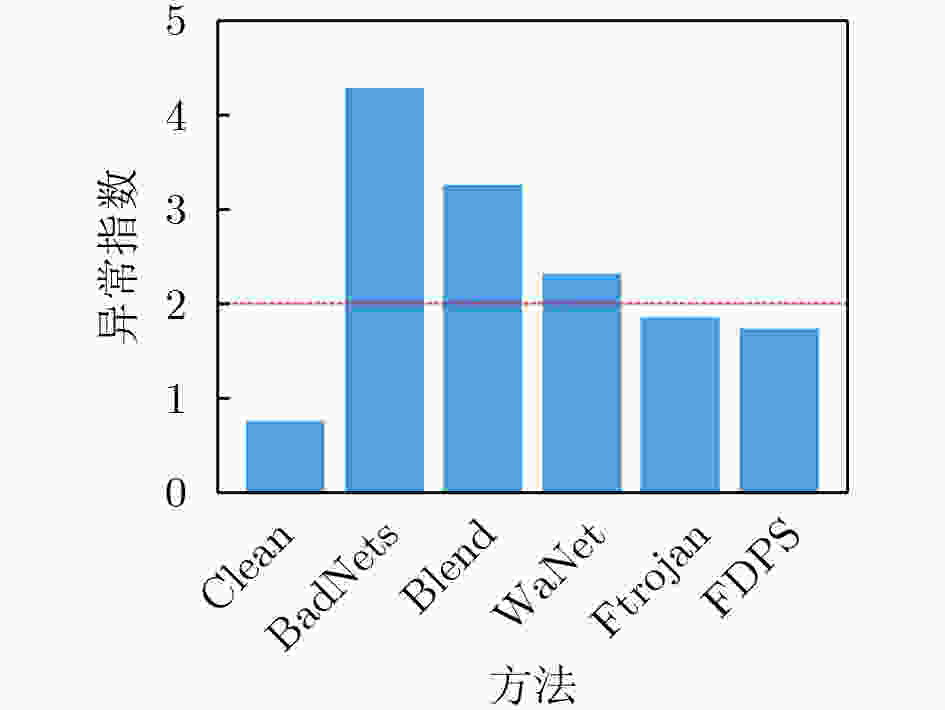

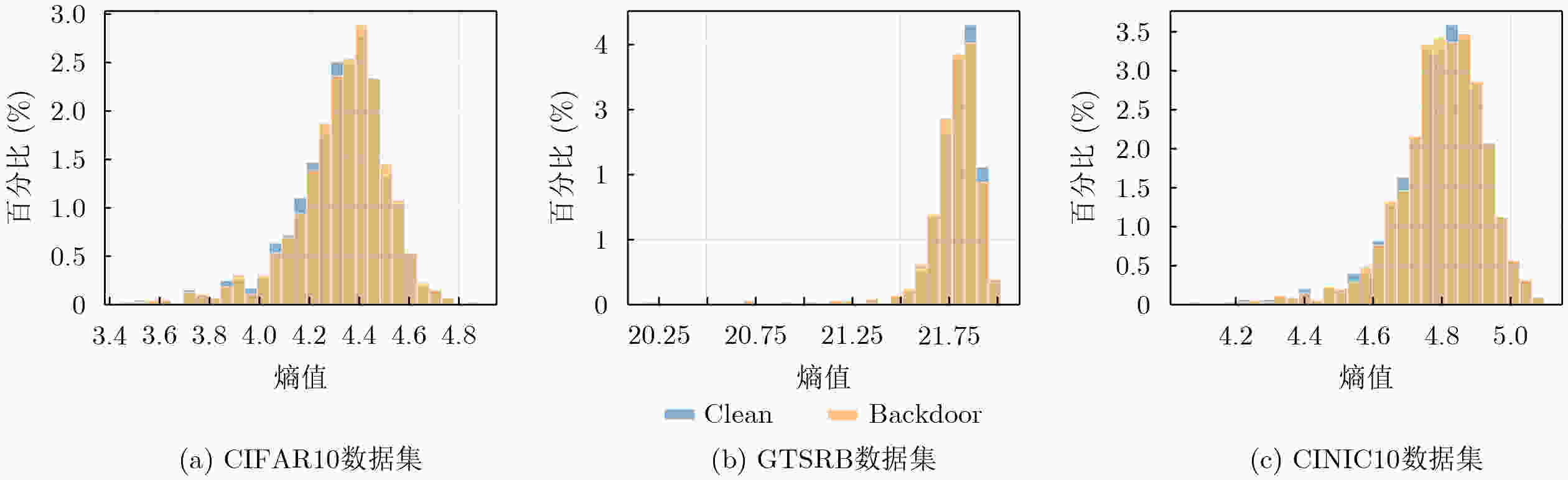

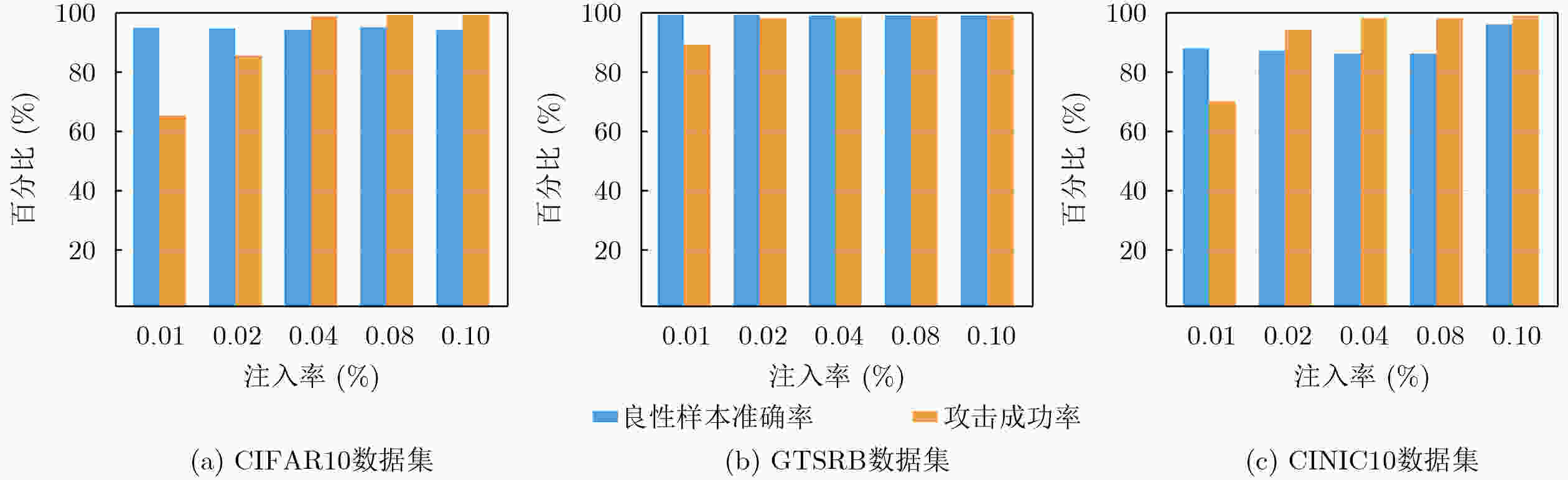

Objective: Deep neural networks (DNNs) are increasingly deployed in safety-critical domains such as autonomous driving and biomedical diagnostics, which heightens concern about their vulnerability to adversarial threats, particularly backdoor attacks. These attacks embed hidden triggers during training, so that the model behaves normally on clean inputs but executes malicious behavior when a specific trigger is present. Existing backdoor methods operate predominantly in the spatial or frequency domain and face a fundamental trade-off between attack success rate (ASR) and stealthiness. Spatial triggers often introduce visible artifacts, while frequency-domain amplitude perturbations disrupt the energy distribution, making them detectable by defenses such as spectral anomaly detection. This work addresses the need for a backdoor paradigm that simultaneously achieves high attack performance, minimal perceptual distortion, and robustness against state-of-the-art defenses. The objective is to develop a frequency-domain backdoor attack based on phase manipulation, which aligns with human visual perception and preserves structural coherence, thereby overcoming the limitations of existing methods.

Methods: FDPS combines frequency-domain phase manipulation with perceptual-similarity screening and standard data poisoning. Input images are first converted from RGB to the YCrCb color space, which separates the chrominance channels from luminance and leaves the luminance information intact. The discrete Fourier transform (DFT) is then applied to the chrominance components, yielding complex frequency spectra. The phase is computed with the atan2 function, and the phase of selected high-frequency components is shifted. The image is reconstructed with the inverse DFT. Learned Perceptual Image Patch Similarity (LPIPS) filtering then discards generated samples that fail the perceptual-similarity threshold, so that all retained triggers remain visually imperceptible. Accepted poisoned samples are assigned the target class label and combined with clean training data following standard protocols.

Results and Discussions: FDPS achieves attack success rates of about 99% while maintaining benign accuracy (BA) across three datasets and two network architectures (Table 1). The method manipulates phase information in the chrominance channels via Fourier transforms, with LPIPS filtering ensuring visual stealth. Poisoned images retain the model's semantic focus, as Grad-CAM visualizations align with those of clean inputs (Fig. 4). The approach also evades defenses, scoring an anomaly index of 1.73 under Neural Cleanse, below the detection threshold of 2 (Figs. 3-5). Ablation studies show that high-frequency phase perturbations achieve over 90% attack success with only 2% poisoning while minimizing the impact on model utility (Fig. 6; Table 3).

Conclusions: An end-to-end frequency-domain strategy is developed to embed covert triggers in image classifiers while maintaining clean-data fidelity. By shifting selected phase components in the chrominance channels and filtering with LPIPS, FDPS achieves 99% ASR with negligible BA loss and minimal visible artifacts. It also evades leading inspection and detection tools, including Grad-CAM, Neural Cleanse, ANP, and STRIP. The findings indicate that phase-centric, high-frequency perturbations constitute an especially potent and stealthy backdoor mechanism. Future work should explore broader modality coverage and develop frequency-domain anomaly detectors as principled countermeasures.
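The pipeline described under Methods can be illustrated with a minimal NumPy sketch. It is not the authors' implementation. Several details are assumptions made only for illustration: the BT.601-style conversion constants, the radial `cutoff` that defines "high-frequency", the shift size `delta`, and the function names themselves. The LPIPS screening step is left out because it requires a pretrained perceptual network.

```python
import numpy as np

def rgb_to_ycrcb(rgb):
    """RGB -> YCrCb for float arrays in [0, 255] (BT.601-style constants, assumed)."""
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cr = (r - y) * 0.713 + 128.0
    cb = (b - y) * 0.564 + 128.0
    return np.stack([y, cr, cb], axis=-1)

def ycrcb_to_rgb(ycc):
    """Approximate inverse of rgb_to_ycrcb."""
    y, cr, cb = ycc[..., 0], ycc[..., 1] - 128.0, ycc[..., 2] - 128.0
    r = y + 1.403 * cr
    g = y - 0.714 * cr - 0.344 * cb
    b = y + 1.773 * cb
    return np.stack([r, g, b], axis=-1)

def shift_high_freq_phase(channel, delta=0.3, cutoff=0.25):
    """Add `delta` radians to the phase of bins at or above `cutoff` cycles/pixel.

    `delta` and `cutoff` are illustrative values, not taken from the source.
    """
    m, n = channel.shape
    spec = np.fft.rfft2(channel)              # half spectrum of a real image
    fy = np.fft.fftfreq(m)[:, None]           # vertical frequencies
    fx = np.fft.rfftfreq(n)[None, :]          # horizontal frequencies (non-negative)
    mask = np.hypot(fy, fx) >= cutoff         # high-frequency band
    magnitude = np.abs(spec)                  # reused unchanged when rebuilding
    phase = np.arctan2(spec.imag, spec.real)  # phase via atan2
    phase = np.where(mask, phase + delta, phase)
    return np.fft.irfft2(magnitude * np.exp(1j * phase), s=(m, n))

def embed_trigger(rgb_uint8, delta=0.3, cutoff=0.25):
    """Shift chrominance phase only; the luminance channel is left untouched."""
    ycc = rgb_to_ycrcb(rgb_uint8.astype(np.float64))
    for c in (1, 2):                          # Cr and Cb channels
        ycc[..., c] = shift_high_freq_phase(ycc[..., c], delta, cutoff)
    rgb = ycrcb_to_rgb(ycc)
    return np.clip(np.rint(rgb), 0, 255).astype(np.uint8)

def poison_dataset(images, labels, target_label, rate=0.02, seed=0):
    """Embed the trigger in a random `rate` fraction of samples and relabel them."""
    rng = np.random.default_rng(seed)
    n_poison = int(round(rate * len(images)))
    idx = rng.choice(len(images), size=n_poison, replace=False)
    images, labels = images.copy(), labels.copy()
    for i in idx:
        images[i] = embed_trigger(images[i])
        labels[i] = target_label
    return images, labels, idx
```

Using the real-input transforms `rfft2`/`irfft2` keeps the reconstructed channel real-valued without handling conjugate symmetry by hand. In a fuller version, a perceptual screening step would sit between `embed_trigger` and the relabeling, dropping candidates that fail the similarity threshold.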

Key words:

- Backdoor attacks

- Deep neural networks

- Image classification

- Frequency domain

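Table 1. Comparison of attack success rate (ASR) and benign accuracy (BA) of different baseline methods on three datasets

| Model | Method | CIFAR10 BA (%) | CIFAR10 ASR (%) | GTSRB BA (%) | GTSRB ASR (%) | CINIC10 BA (%) | CINIC10 ASR (%) |
|---|---|---|---|---|---|---|---|
| ResNet18 | Clean | 95.00 | - | 99.20 | - | 88.02 | - |
| ResNet18 | BadNets | 94.02 | 99.42 | 99.13 | 99.52 | 85.77 | 99.26 |
| ResNet18 | Blend | 94.26 | 99.87 | 99.02 | 99.77 | 85.82 | 99.89 |
| ResNet18 | WaNet | 94.22 | 99.74 | 99.10 | 99.73 | 84.87 | 89.61 |
| ResNet18 | Ftrojan | 94.10 | 99.26 | 99.01 | 98.90 | 85.90 | 99.70 |
| ResNet18 | DUBA | 94.55 | 99.68 | 99.17 | 99.92 | 87.85 | 99.24 |
| ResNet18 | FDPS | 94.02 | 99.33 | 99.15 | 99.12 | 86.11 | 99.13 |
| RepVGG | Clean | 91.20 | - | 99.40 | - | 87.24 | - |
| RepVGG | BadNets | 91.07 | 99.10 | 99.34 | 99.67 | 86.78 | 99.19 |
| RepVGG | Blend | 91.10 | 98.74 | 99.13 | 99.36 | 85.76 | 98.02 |
| RepVGG | WaNet | 91.07 | 97.95 | 99.13 | 99.38 | 86.16 | 88.76 |
| RepVGG | Ftrojan | 90.08 | 99.14 | 99.29 | 99.26 | 85.82 | 97.84 |
| RepVGG | DUBA | 91.18 | 99.78 | 99.34 | 99.78 | 87.01 | 99.02 |
| RepVGG | FDPS | 91.00 | 99.19 | 99.30 | 99.35 | 86.22 | 99.11 |

Table 2. Comparison of SSIM and LPIPS of the backdoor attack methods on the three datasets

| Dataset | BadNets SSIM | BadNets LPIPS | Blend SSIM | Blend LPIPS | WaNet SSIM | WaNet LPIPS | Ftrojan SSIM | Ftrojan LPIPS | DUBA SSIM | DUBA LPIPS | FDPS SSIM | FDPS LPIPS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| CIFAR10 | 0.972 | 0.0093 | 0.864 | 0.1319 | 0.953 | 0.0099 | 0.954 | 0.0125 | 0.977 | 0.0081 | 0.972 | 0.0058 |
| GTSRB | 0.968 | 0.0347 | 0.879 | 0.1569 | 0.950 | 0.0528 | 0.912 | 0.0598 | 0.972 | 0.0201 | 0.975 | 0.0112 |
| CINIC10 | 0.978 | 0.0065 | 0.887 | 0.1832 | 0.941 | 0.0951 | 0.971 | 0.0754 | 0.968 | 0.0072 | 0.972 | 0.0052 |

Table 3. Comparison of benign accuracy (BA) and attack success rate (ASR) at different frequency positions

| Frequency | CIFAR10 BA (%) | CIFAR10 ASR (%) | GTSRB BA (%) | GTSRB ASR (%) | CINIC10 BA (%) | CINIC10 ASR (%) |
|---|---|---|---|---|---|---|
| $u=\dfrac{1}{2}M,\ v=\dfrac{1}{2}N$ | 94.02 | 99.33 | 99.15 | 99.12 | 86.11 | 99.13 |
| $u=\dfrac{3}{4}M,\ v=\dfrac{3}{4}N$ | 93.55 | 98.37 | 99.10 | 98.75 | 85.97 | 97.94 |
| $u=M,\ v=N$ | 93.11 | 94.18 | 94.18 | 95.47 | 85.49 | 87.55 |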

