UWF-YOLO: A Lightweight Framework for Underwater Object Detection via Redundant Information Optimization


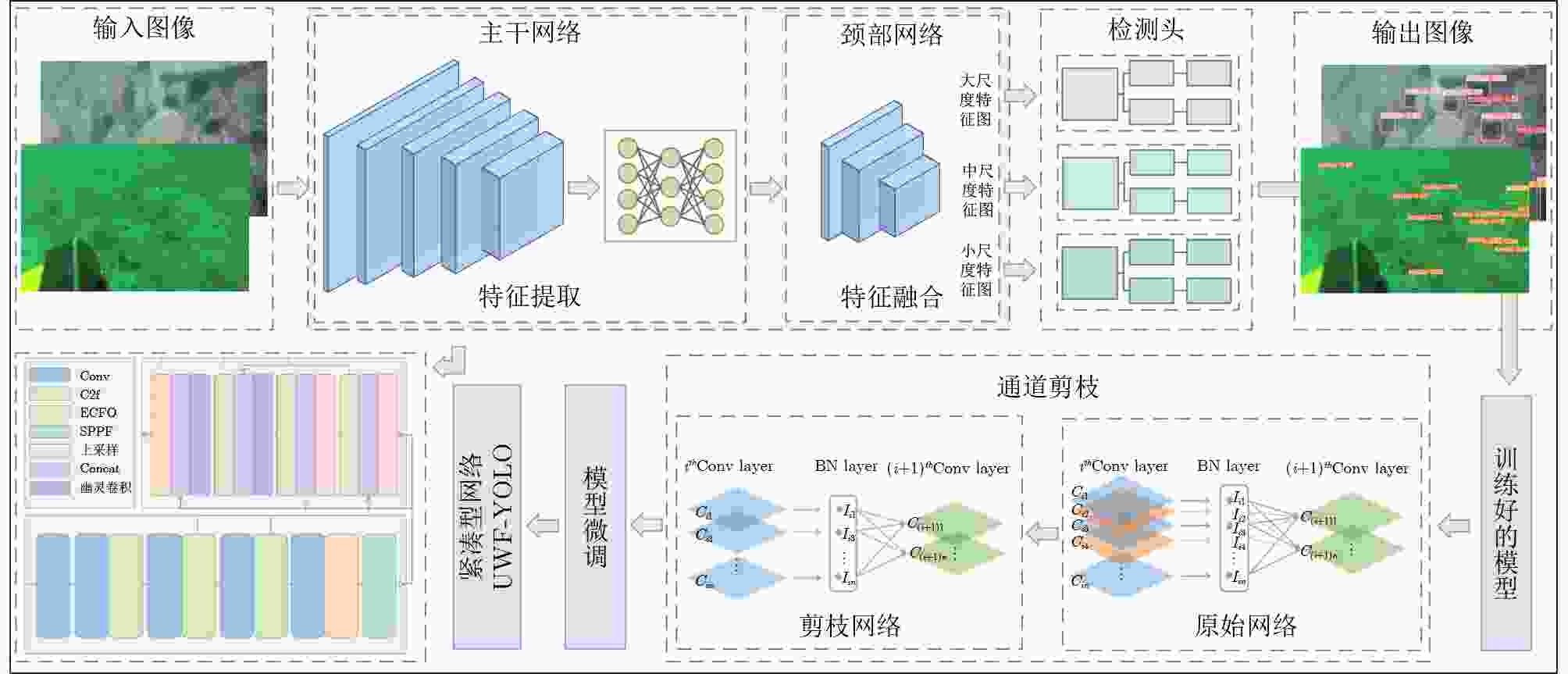

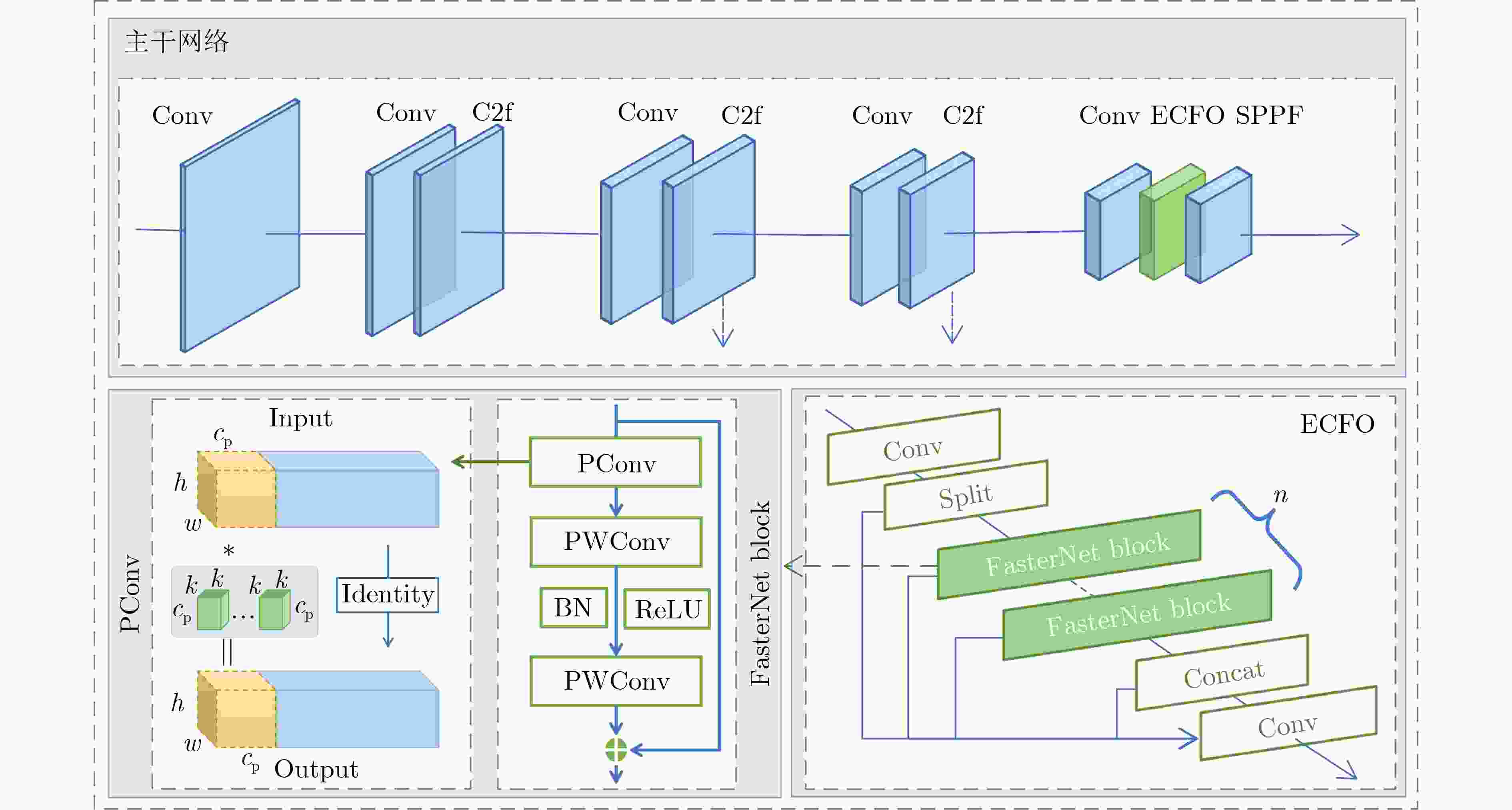

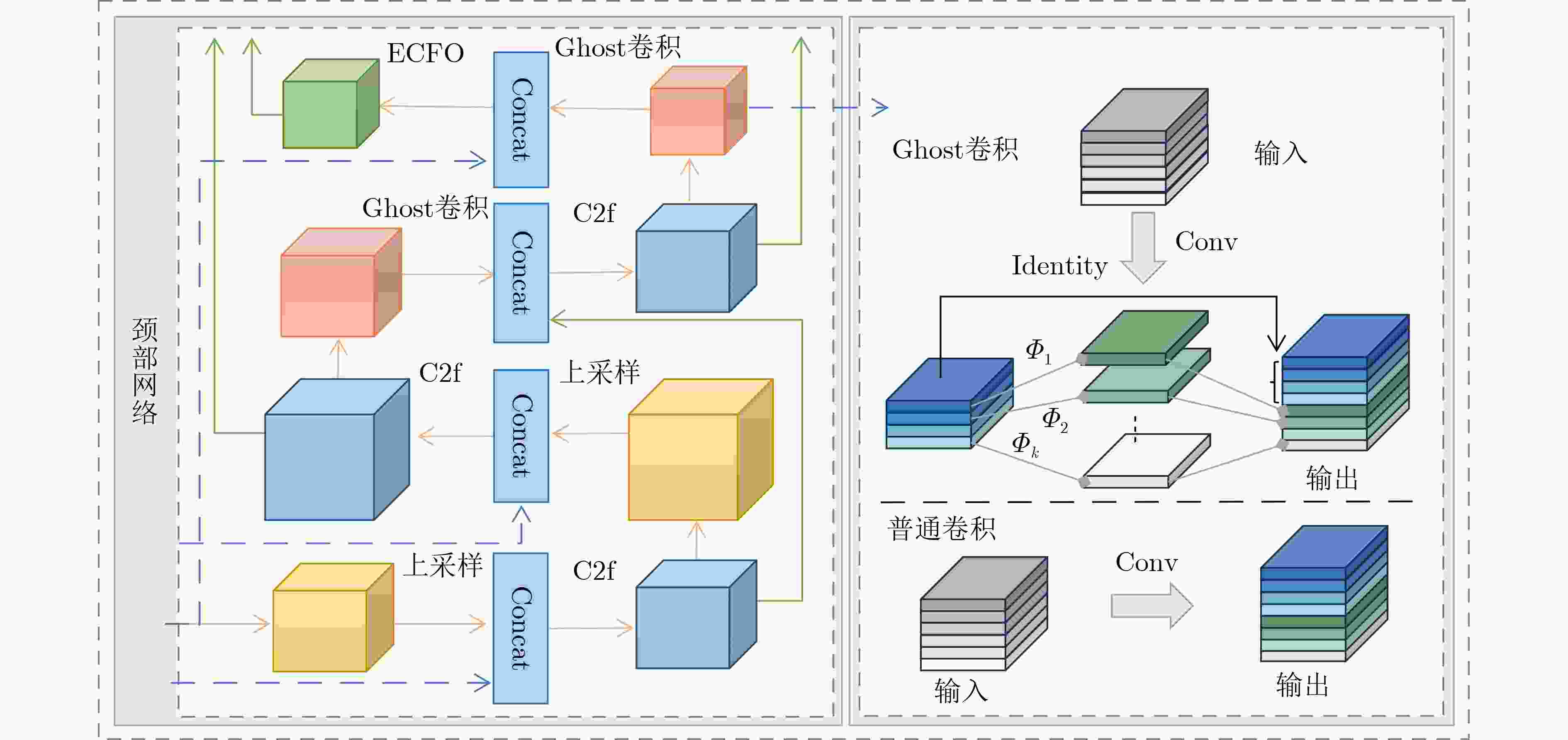

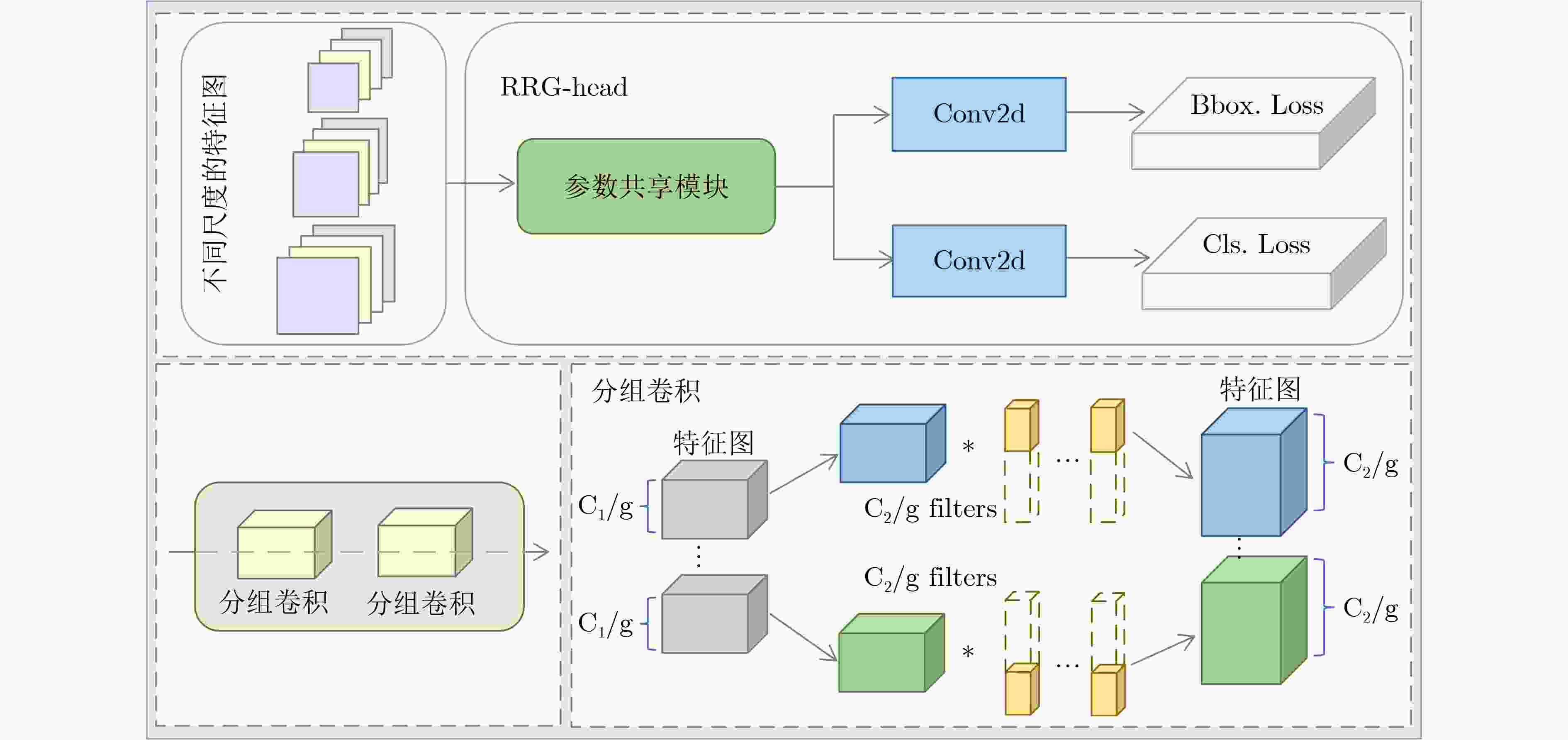

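Summary: Existing underwater object detection methods are not robust in complex scenes with diverse imaging degradations and background interference, and they struggle to balance detection accuracy against model size on resource-constrained devices. To address these problems, this paper proposes a lightweight underwater object detection network based on redundant information optimization (Underwater Faster YOLO Network Based on Redundancy Information Optimization, UWF-YOLO) and further constructs an underwater object detection dataset with complex scenes (Underwater Object Detection Dataset with Complex Scene, CSUOD). UWF-YOLO rebuilds the C2f module with the FasterNet Block to optimize the backbone and neck networks, reduces redundant features through a feature channel selection mechanism, and introduces Ghost convolution to strengthen multi-scale feature fusion in the neck network. A parameter-sharing detection head based on group convolution lowers the computational cost, and structured channel pruning further compresses the network. CSUOD is built by collecting and annotating real underwater images and standardizing their resolution; it covers degradation types such as haze, color cast, and non-uniform illumination, and can be used for robustness training and performance evaluation of underwater object detection models in complex scenes. Experiments on the DUO, RUOD, and TrashCan datasets show that, compared with YOLOv8s, the proposed method reduces computation, weight size, and parameter count by 60.4%, 77.3%, and 78.4%, respectively; compared with YOLOv9-tiny, which has a comparable parameter count, mAP improves by 0.3, 2.3, and 3.4 percentage points on the three datasets. Subjective and objective comparisons on the self-built CSUOD dataset further confirm that the proposed model, while substantially lighter, effectively avoids the false and missed detections caused by background interference and performs particularly well in complex underwater environments. The CSUOD dataset constructed in this paper is also expected to help advance underwater object detection methods.

Abstract: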

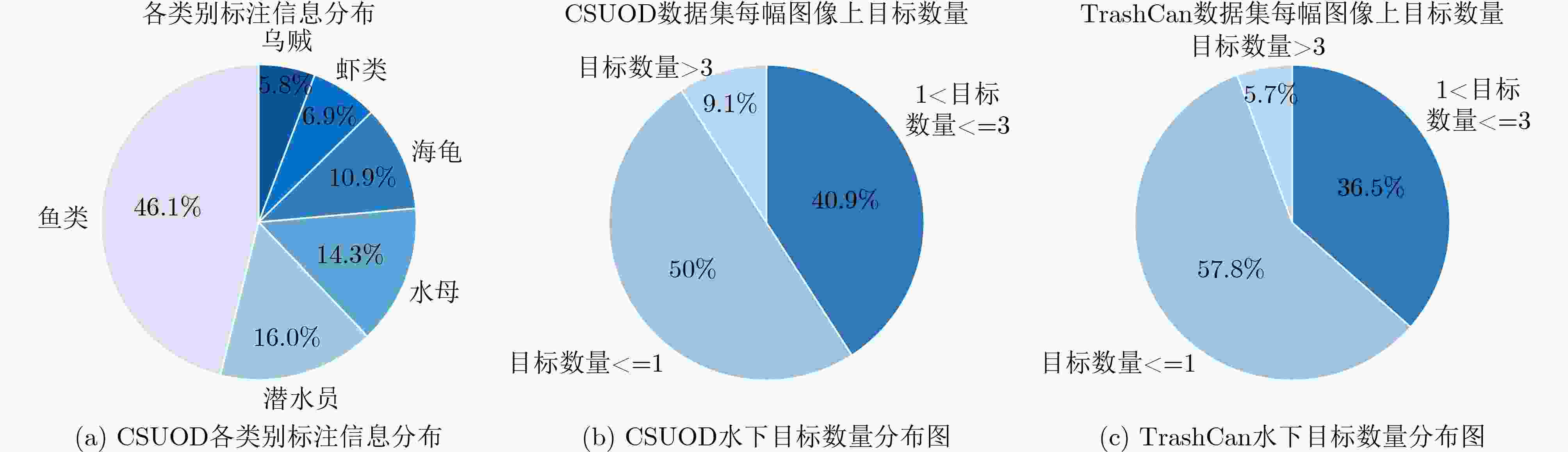

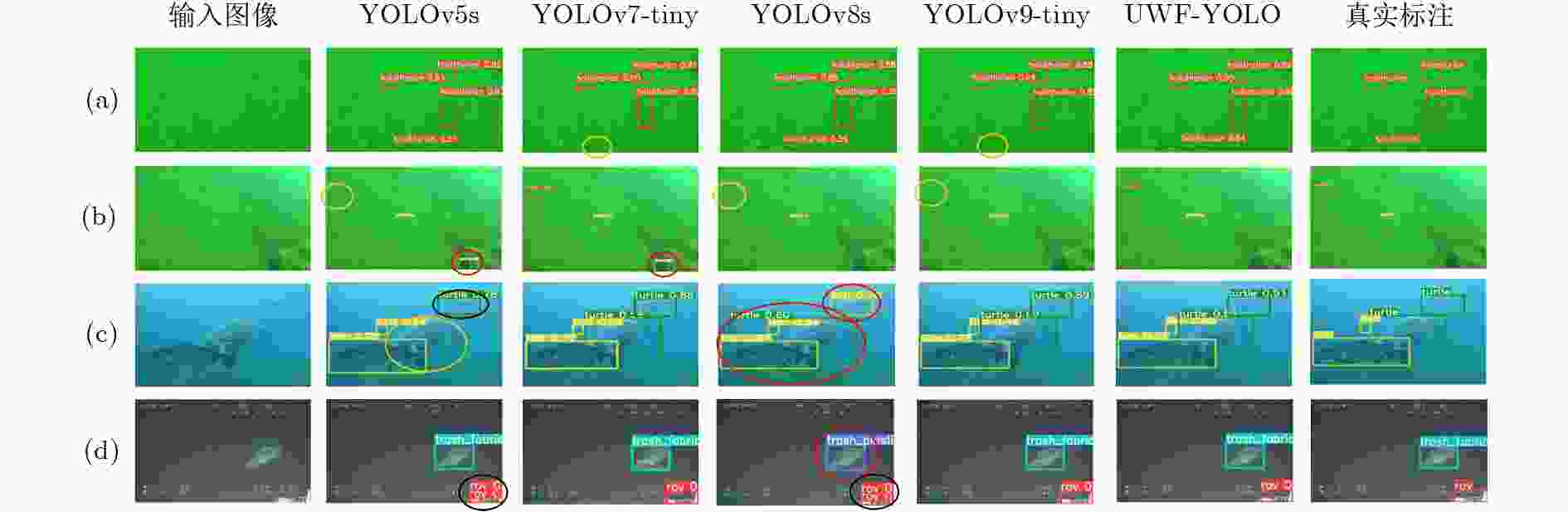

Objective: The rapid development of underwater imaging technology has made underwater object detection increasingly important for resource exploration and environmental monitoring. Complex underwater environments degrade image quality in several ways, including color casts, haze-like effects, and non-uniform illumination. Existing vision-based object detection algorithms perform poorly under these conditions, especially on small objects, which leads to missed detections and false positives. Deep-learning-based underwater detection models also struggle to balance accuracy against model size when device resources are limited. Efficient underwater object detection methods are therefore needed for marine resource exploration, ecological monitoring, underwater robotics, and the intelligent perception systems of autonomous underwater vehicles.

Methods: We propose UWF-YOLO, a lightweight underwater object detection network based on redundant information optimization. First, the C2f module is rebuilt with the FasterNet Block to optimize both the backbone and neck networks, and a feature channel selection mechanism is incorporated to reduce redundant features. Second, because the redundant features produced by conventional convolutions in the YOLO neck adapt poorly to underwater environments, Ghost convolution is introduced to generate Ghost feature maps that strengthen the multi-scale feature fusion capability of the neck network.
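As a rough illustration of why these substitutions remove redundancy, the sketch below counts convolution weights for a standard convolution, a FasterNet-style partial convolution (a regular convolution applied to only a fraction of the channels, with the rest passed through), a Ghost-style convolution (half of the outputs from a primary convolution, the other half from a cheap depthwise operation), and a grouped convolution. The function names, the partial ratio of 1/4, the 5×5 cheap kernel, and the group count of 4 are assumptions chosen for illustration; they are not taken from the paper and this is not the authors' implementation.

```python
def conv_params(c_in, c_out, k, groups=1):
    """Weights in a k x k convolution (bias and normalization layers ignored)."""
    assert c_in % groups == 0 and c_out % groups == 0
    return k * k * (c_in // groups) * c_out


def partial_conv_params(channels, k, ratio=4):
    """Partial convolution: convolve only channels // ratio channels.

    The remaining channels are passed through unchanged and cost no weights.
    The ratio of 4 is an illustrative assumption.
    """
    c_p = channels // ratio
    return conv_params(c_p, c_p, k)


def ghost_conv_params(c_in, c_out, k, cheap_k=5):
    """Ghost-style convolution, assuming an even c_out.

    Half of the output maps come from a primary k x k convolution; the other
    half come from a depthwise cheap_k x cheap_k convolution on those maps.
    """
    primary = c_out // 2
    cheap = conv_params(primary, c_out - primary, cheap_k, groups=primary)
    return conv_params(c_in, primary, k) + cheap


channels, k = 128, 3
standard = conv_params(channels, channels, k)
print("standard conv :", standard)
print("partial conv  :", partial_conv_params(channels, k))
print("ghost conv    :", ghost_conv_params(channels, channels, k))
print("grouped (g=4) :", conv_params(channels, channels, k, groups=4))
```

Under these assumptions the partial convolution needs 1/16 of the weights of the standard layer, the Ghost-style layer roughly half, and the grouped layer one quarter. The actual savings in UWF-YOLO depend on the layer configurations reported in the full paper.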
Next, the original detection head is replaced with a redundant optimization group detection head (RRG-Head) based on group convolution, which shares parameters and thereby reduces computational cost. Finally, structured channel pruning is applied: the inter-layer dependencies in the network graph are identified and the dependent layers are bound into pruning units, channel importance is evaluated with the LAMP normalization of weight-magnitude scores, and the low-contributing groups are pruned before the network is fine-tuned to obtain a compressed model. In addition, because the scenes in existing underwater detection datasets are typically monotonous and the objects they contain are usually small and clustered, we construct CSUOD, an underwater object detection dataset with complex scenes. Real-world underwater images are collected from different websites and platforms to ensure diversity and authenticity, and are then manually annotated and normalized in resolution. CSUOD targets challenging underwater environments characterized by color casts, haze-like effects, and non-uniform illumination, and contains 1135 manually selected images covering 6 different types.

Results and Discussions: Extensive experiments are conducted on three public underwater object detection datasets (DUO, RUOD, and TrashCan). The proposed model is compared with mainstream detectors, including YOLOv5s, YOLOv7-tiny, YOLOv8s, YOLOv9-tiny, and Deformable DETR. In terms of computational complexity, the proposed method reduces FLOPs, model size, and parameter count by 60.4%, 77.3%, and 78.4%, respectively, compared with the YOLOv8s baseline.
In addition, our method outperforms YOLOv9-tiny, which has a comparable parameter count, by 0.3, 2.3, and 3.4 percentage points in mAP on the three datasets. Comparative results on our CSUOD dataset also indicate that the proposed model remains accurate and stable in complex underwater environments. Qualitative visualization results further illustrate the model's robustness and detection stability under various underwater degradations, such as haze-like effects and non-uniform illumination.

Conclusions: Quantitative and qualitative experiments on different datasets validate the effectiveness and robustness of the proposed method. The method achieves strong detection performance in complex underwater environments and effectively avoids the missed detections and false positives caused by background interference. The experimental results show that UWF-YOLO is substantially lighter than the benchmark model while maintaining comparable detection accuracy. This balance between detection accuracy and computational cost makes it particularly suitable for underwater devices with limited resources. The proposed method also has potential in practical scenarios such as marine ecological monitoring, underwater resource exploration, and autonomous underwater vehicle perception systems. It provides a reliable and efficient technical foundation for real-time applications, with strong adaptability to different underwater conditions, efficient integration into embedded platforms, and support for real-time perception and decision-making. The CSUOD dataset constructed in this study will help address the limitations of existing underwater object detection datasets and promote the development of underwater object detection. In the future, this work can be extended to multi-modal perception systems and larger-scale datasets.
These efforts will enable adaptive models for more dynamic underwater scenarios and support broader applications in intelligent ocean observation and autonomous navigation.
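The LAMP-based channel scoring mentioned in the Methods can be sketched as follows. LAMP ranks the magnitudes within one layer and divides each squared magnitude by the sum of all squared magnitudes ranked at or above it, so the largest entry in every layer scores 1 and scores become comparable across layers. The sketch below applies this to hypothetical per-channel norms and ignores the dependency-graph grouping used for structured pruning; the layer names, the norm values, and the helper `channels_to_prune` are assumptions for illustration, not the authors' code.

```python
def lamp_scores(magnitudes):
    """LAMP-normalize non-negative importance values within one layer.

    score(u) = m[u]^2 / sum of m[v]^2 over all v ranked at or above u,
    where ranking is by ascending magnitude.
    """
    order = sorted(range(len(magnitudes)), key=lambda i: magnitudes[i])
    scores = [0.0] * len(magnitudes)
    tail = 0.0
    for i in reversed(order):  # walk from the largest magnitude down
        sq = magnitudes[i] ** 2
        tail += sq
        scores[i] = sq / tail if tail > 0 else 0.0
    return scores


def channels_to_prune(layers, n_prune):
    """Return the n_prune (layer, channel) pairs with the lowest LAMP scores.

    `layers` maps a layer name to a list of per-channel norms (hypothetical).
    """
    scored = [
        (score, name, idx)
        for name, norms in layers.items()
        for idx, score in enumerate(lamp_scores(norms))
    ]
    scored.sort()
    return [(name, idx) for _, name, idx in scored[:n_prune]]


# Hypothetical per-channel norms for two layers with very different scales.
layers = {"conv_a": [0.1, 2.0, 3.0], "conv_b": [10.0, 11.0, 12.0]}
print(channels_to_prune(layers, 2))
```

In this toy case the second channel selected comes from `conv_b` even though its raw norm (10.0) is larger than every norm in `conv_a`, which shows how the normalization keeps a layer with small absolute weights from being pruned away entirely.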

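Table 1 Comparison of objective metrics on the DUO, RUOD, and TrashCan datasets

| Network | mAP50 (%) DUO | mAP50 (%) RUOD | mAP50 (%) TrashCan | FLOPs (G) DUO | FLOPs (G) RUOD | FLOPs (G) TrashCan | Weight (MB) DUO | Weight (MB) RUOD | Weight (MB) TrashCan | Params (M) DUO | Params (M) RUOD | Params (M) TrashCan |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| YOLOv5s | 82.3 | 85.9 | 89.2 | 15.8 | 15.8 | 15.9 | 14.4 | 14.5 | 14.5 | 7.0 | 7.0 | 7.1 |
| Deformable DETR | 80.1 | 83.3 | 81.4 | 51.1 | 51.1 | 51.1 | 480.0 | 480.0 | 480.0 | 39.8 | 39.8 | 39.8 |
| YOLOv7-tiny | 83.6 | 84.6 | 84.7 | 13.0 | 13.1 | 13.2 | 12.4 | 12.3 | 12.4 | 6.0 | 6.0 | 6.1 |
| YOLOv8s | 83.1 | 86.5 | 89.6 | 28.4 | 28.5 | 28.5 | 22.5 | 22.6 | 22.5 | 11.1 | 11.1 | 11.1 |
| YOLOv9-tiny | 82.8 | 84.3 | 86.0 | 10.7 | 10.7 | 10.7 | 6.1 | 6.1 | 6.1 | 2.6 | 2.6 | 2.6 |
| UWF-YOLO (before pruning) | 84.2 | 87.0 | 90.1 | 19.5 | 19.5 | 19.5 | 14.7 | 14.7 | 14.7 | 7.2 | 7.2 | 7.2 |
| UWF-YOLO | 83.1 | 86.6 | 89.4 | 11.3 | 11.4 | 11.3 | 5.1 | 5.0 | 5.1 | 2.4 | 2.4 | 2.4 |

Table 2 Comparison of metrics on the self-built CSUOD dataset

| Network | mAP50_all (%) | AP_j (%) | AP_c (%) | AP_f (%) | AP_s (%) | AP_d (%) | AP_t (%) |
|---|---|---|---|---|---|---|---|
| YOLOv5s | 78.4 | 86.7 | 81.5 | 76.9 | 50.8 | 87.1 | 87.8 |
| YOLOv7-tiny | 65.3 | 56.6 | 70.2 | 74.0 | 52.6 | 72.6 | 65.6 |
| YOLOv7 | 79.6 | 89.6 | 82.5 | 80.5 | 61.6 | 81.6 | 81.8 |
| YOLOv8s | 78.0 | 85.9 | 72.0 | 79.6 | 64.2 | 76.4 | 90.0 |
| YOLOv9-tiny | 65.1 | 76.5 | 63.1 | 64.9 | 45.6 | 65.9 | 74.4 |
| UWF-YOLO | 79.6 | 84.2 | 79.8 | 75.2 | 70.5 | 84.2 | 84.1 |

Table 3 Ablation experiments

| Dataset | Scheme | Baseline | ECFO | Ghost | RRG-Head | Channel pruning | mAP50 (%) | Params (M) | FLOPs (G) | Weight (MB) |
|---|---|---|---|---|---|---|---|---|---|---|
| RUOD | 1 | √ | | | | | 86.5 | 11.1 | 28.5 | 22.6 |
| RUOD | 2 | √ | √ | | | | 86.7 | 9.4 | 27.0 | 19.0 |
| RUOD | 3 | √ | √ | √ | | | 86.7 | 9.0 | 26.6 | 18.3 |
| RUOD | 4 | √ | √ | √ | √ | | 87.0 | 7.2 | 19.5 | 14.7 |
| RUOD | 5 | √ | √ | √ | √ | √ | 86.6 | 2.4 | 11.4 | 5.0 |
| TrashCan | 1 | √ | | | | | 89.6 | 11.1 | 28.5 | 22.5 |
| TrashCan | 2 | √ | √ | | | | 90.0 | 9.4 | 27.1 | 19.0 |
| TrashCan | 3 | √ | √ | √ | | | 90.2 | 9.0 | 26.6 | 18.3 |
| TrashCan | 4 | √ | √ | √ | √ | | 90.1 | 7.2 | 19.5 | 14.7 |
| TrashCan | 5 | √ | √ | √ | √ | √ | 89.4 | 2.4 | 11.3 | 5.1 |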
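The relative reductions quoted in the abstract can be checked against the tabulated values with a short calculation. The sketch below copies the YOLOv8s and pruned UWF-YOLO rows of Table 1 and computes the percentage reduction per dataset; the variable and function names are illustrative, and the inputs are the rounded table entries rather than the authors' raw measurements.

```python
# Values copied from Table 1, ordered as (DUO, RUOD, TrashCan).
YOLOV8S = {
    "FLOPs": (28.4, 28.5, 28.5),
    "Weight": (22.5, 22.6, 22.5),
    "Params": (11.1, 11.1, 11.1),
}
UWF_YOLO = {
    "FLOPs": (11.3, 11.4, 11.3),
    "Weight": (5.1, 5.0, 5.1),
    "Params": (2.4, 2.4, 2.4),
}


def reduction_pct(before, after):
    """Relative reduction from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


def reductions(baseline, compressed):
    """Per-dataset percentage reductions for every metric, rounded to 0.1."""
    return {
        metric: [
            round(reduction_pct(b, a), 1)
            for b, a in zip(baseline[metric], compressed[metric])
        ]
        for metric in baseline
    }


for metric, values in reductions(YOLOV8S, UWF_YOLO).items():
    print(metric, values)
```

With these rounded inputs the TrashCan column gives 60.4%, 77.3%, and 78.4%, matching the figures in the abstract, while the DUO and RUOD columns differ by a few tenths of a percent (for example 60.2% and 60.0% for FLOPs), which may simply reflect rounding in the table.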

