Brain-inspired Continuous Learning: Technology, Application and Future


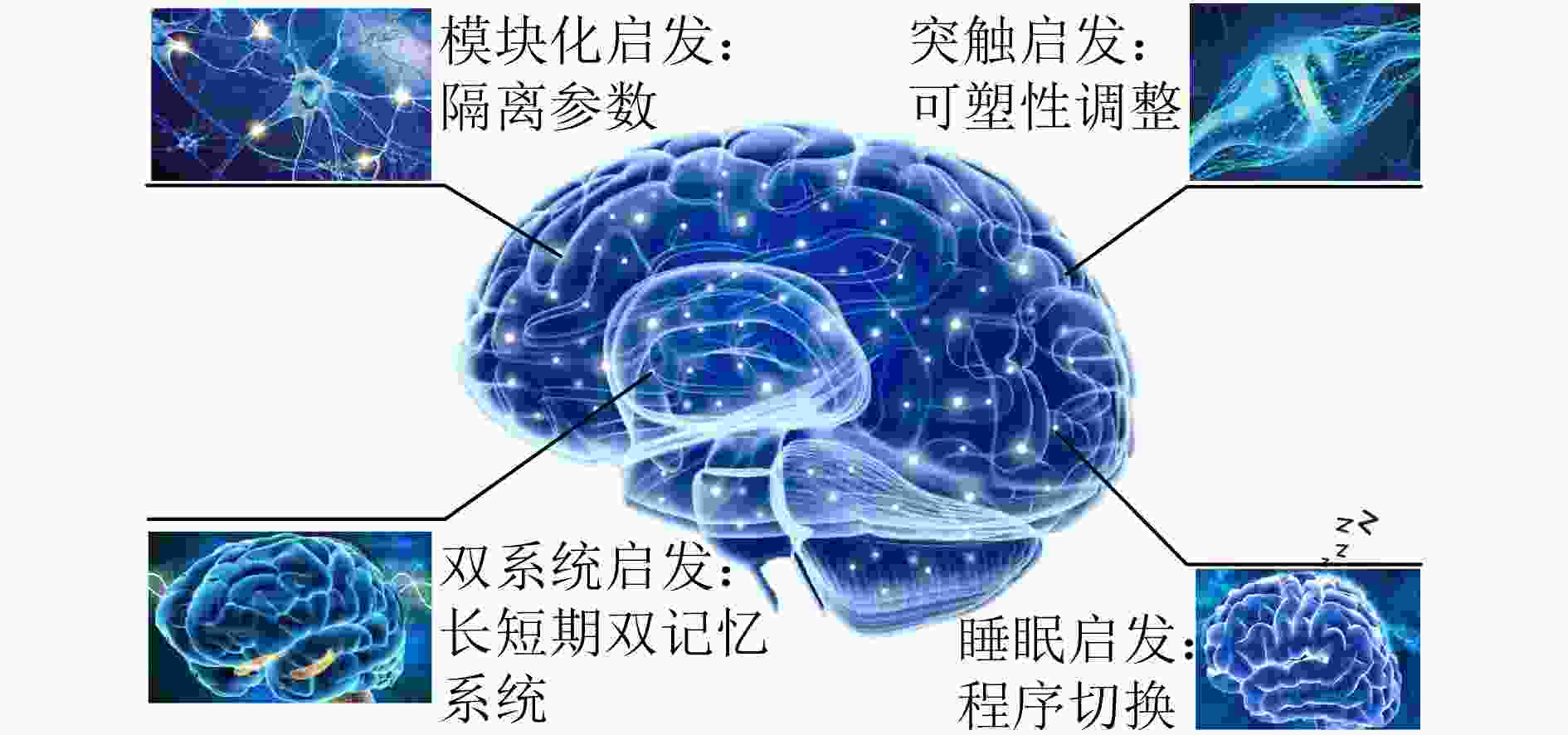

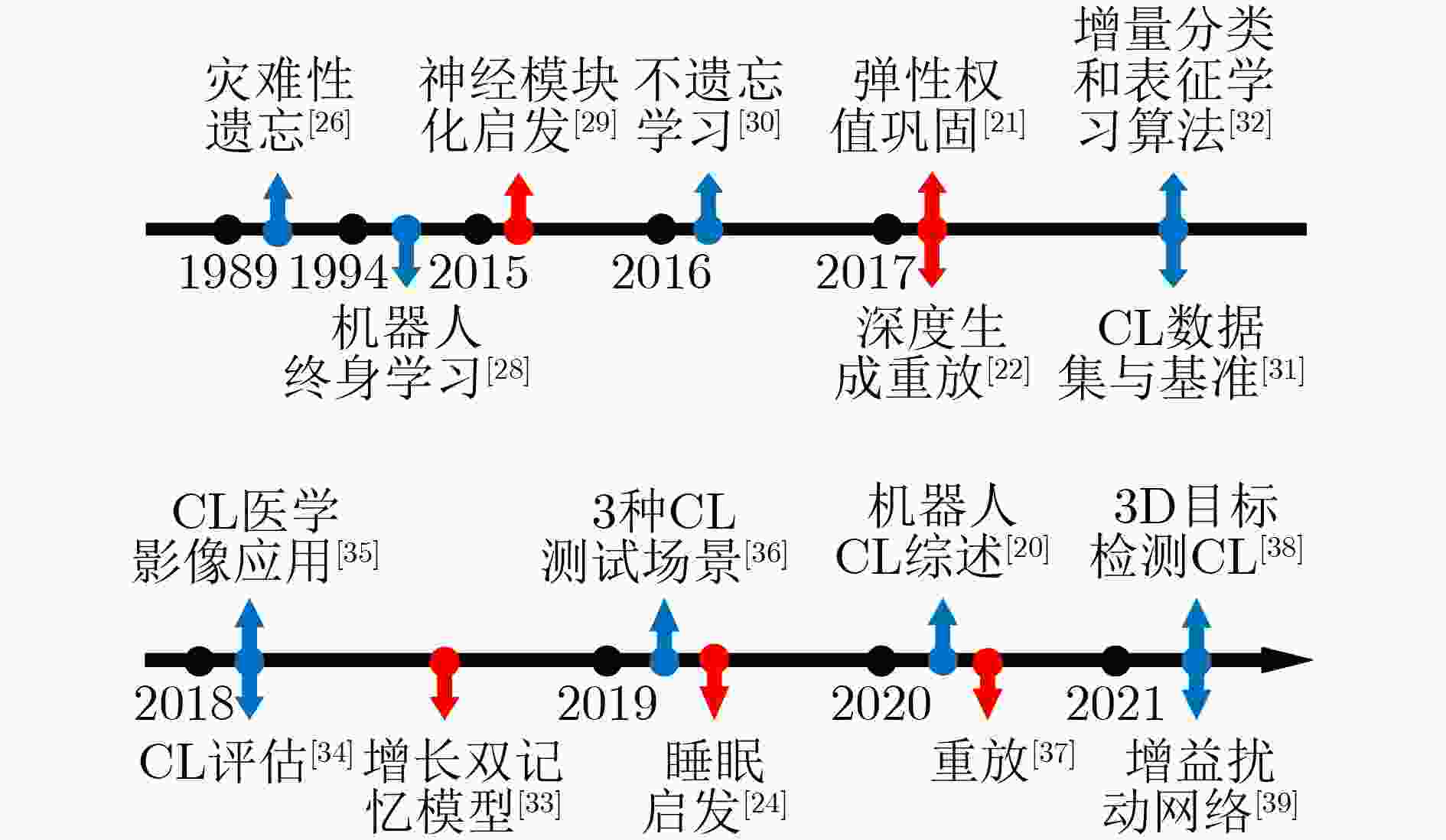

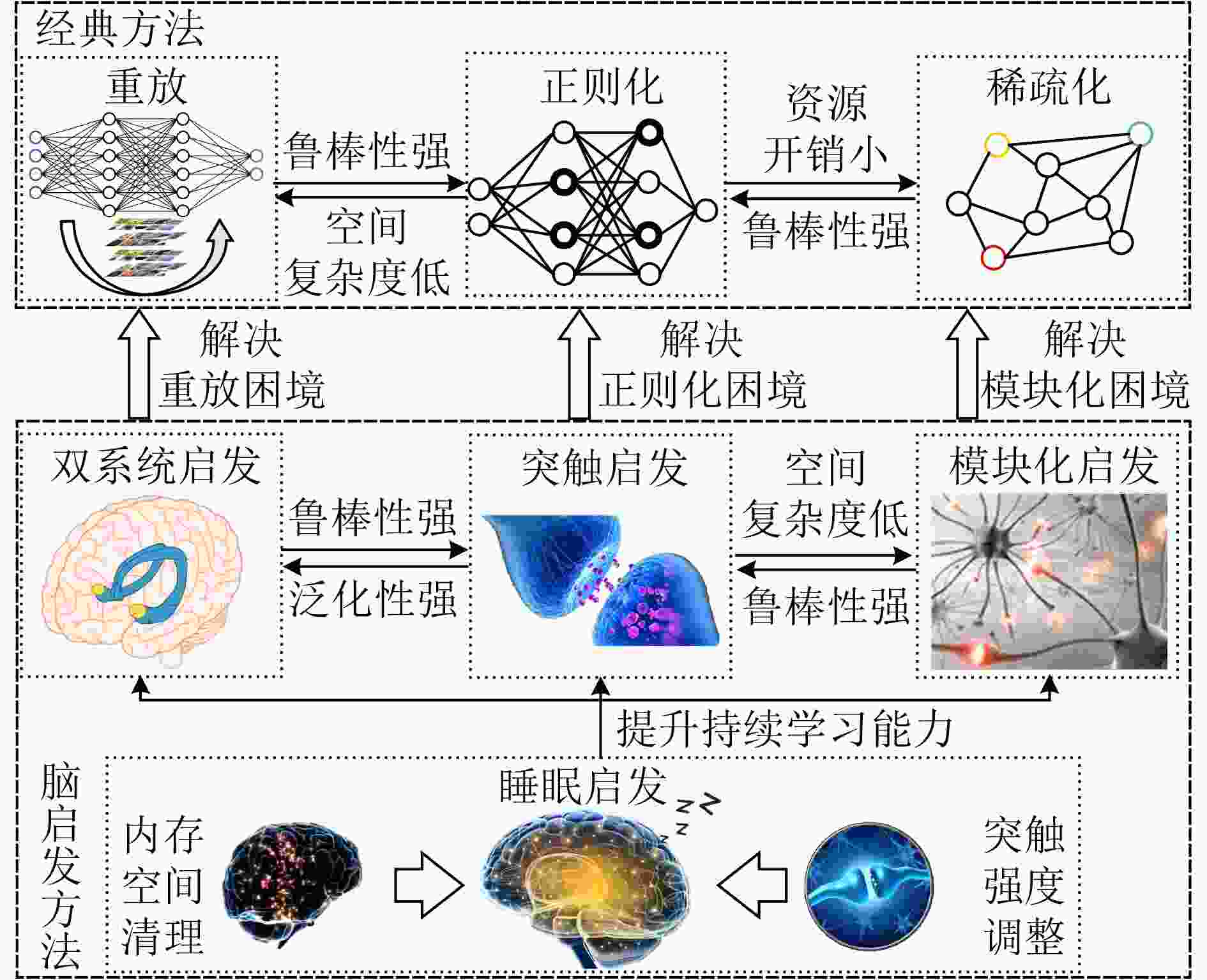

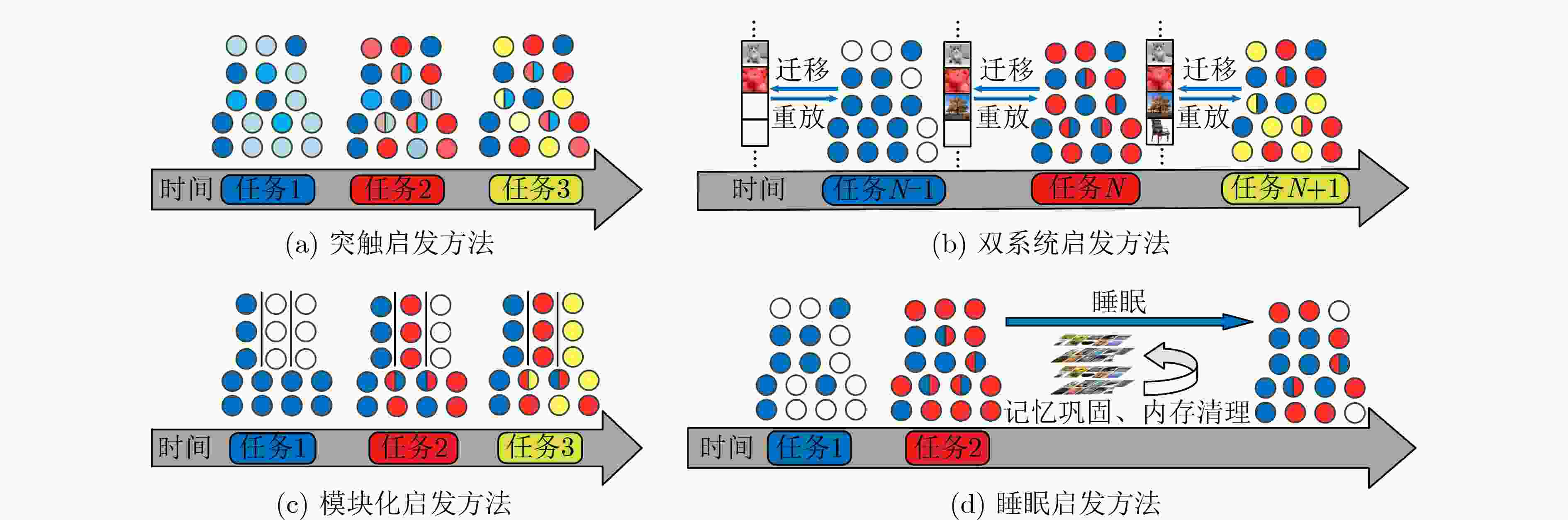

Abstract: When a deep learning model is trained on a non-independent and identically distributed (non-IID) data stream, new knowledge overwrites old knowledge and the model's performance degrades sharply. Continuous Learning (CL) acquires incrementally usable knowledge from such data streams, accumulating new knowledge without relearning from scratch, and aims at human-like intelligence by imitating the brain's learning and memory mechanisms. This paper reviews brain-inspired continuous learning methods. First, the history of continuous learning is reviewed. Second, from the perspective of brain-like continuous learning mechanisms, continuous learning methods are divided into classical methods and brain-inspired methods; the research status of three classical methods (replay, regularization, and sparsification) is summarized, and the difficulties they face are analyzed. To address these difficulties, four types of brain-inspired methods that come closer to the brain's continuous learning ability (synaptic, dual-system, sleep, and modular) are described, analyzed, and compared. Finally, the application status of brain-inspired continuous learning is outlined, and the challenges of realizing it under existing technical conditions, together with its future development directions, are discussed.
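The replay idea named in the abstract (rehearsing stored past data while learning from new data) can be illustrated with a minimal sketch. The code below is an assumption-laden illustration, not a method taken from any of the surveyed papers: the class `ReservoirReplayBuffer`, the helper `mixed_batch`, and the choice of reservoir sampling to decide what is kept in memory are all hypothetical.

```python
import random


class ReservoirReplayBuffer:
    """Fixed-size memory that keeps a uniform random sample of a data stream.

    Hypothetical helper: reservoir sampling is one simple way to choose
    which past examples to store; it is an assumption of this sketch.
    """

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []          # stored examples
        self.seen = 0            # number of examples observed so far
        self._rng = random.Random(seed)

    def add(self, example):
        """Observe one example; keep it with probability capacity / seen."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            slot = self._rng.randrange(self.seen)
            if slot < self.capacity:
                self.items[slot] = example

    def sample(self, k):
        """Draw up to k stored examples without replacement."""
        k = min(k, len(self.items))
        return self._rng.sample(self.items, k)


def mixed_batch(new_examples, buffer, replay_k):
    """Build a training batch of new examples plus replayed old ones,
    then store the new examples for future replay."""
    batch = list(new_examples) + buffer.sample(replay_k)
    for example in new_examples:
        buffer.add(example)
    return batch


if __name__ == "__main__":
    memory = ReservoirReplayBuffer(capacity=4)
    for task_id in range(3):
        new_data = [(task_id, i) for i in range(6)]
        batch = mixed_batch(new_data, memory, replay_k=2)
        print(task_id, batch)
```

Each batch mixes examples from the current task with a few drawn from memory, so earlier tasks keep contributing to training without the full history being stored.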


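Table 1 Comparison and summary of brain-inspired CL methods

| Method | Reference | Year | Biological mechanism imitated | Core idea | Dataset | Baselines | Experimental results | Advantages | Limitations |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Synaptic | [20] | 2017 | Intra-synaptic dynamics | Synaptic Intelligence (SI) | MNIST | EWC, Stochastic Gradient Descent (SGD) | Average classification accuracy 97.5%+; EWC 97.3%+; SGD 75.6%+ | Lower resource overhead than the other three types of brain-inspired methods | Performs worse than the other three types of brain-inspired methods in class-incremental scenarios |
| | [21] | 2017 | Synaptic plasticity | Selectively adjusting weight plasticity | MNIST | Gradient Descent (GD) | With a network capacity within 1000 synapses, EWC outperforms GD | | |
| | [60] | 2018 | Synaptic plasticity | Computing parameter importance in an unsupervised, online manner | MIT Scenes, Caltech-Aircraft[79], SVHN[80] | LwF, [81], [82], EWC, SI | In sequential experiments, lowest average forgetting rate on 2 tasks; average forgetting rate of 0.49% on multiple tasks; average accuracy 2% higher than SI | | |
| Hippocampus-neocortex | [65] | 2014 | CLS | Dual-network memory model | – | Conventional dual-network memory model | Recall results: Goodness of 0.942 for the proposed model, 0.718 for the conventional model | Adapts to incremental task streams better than synaptic methods; lower memory overhead than modular methods; higher knowledge reuse than synaptic and sleep methods | Higher resource overhead than synaptic and sleep methods |
| | [22] | 2017 | Hippocampal memory replay | Deep generative replay | MNIST, SVHN | LwF | Average accuracy: MNIST (old) → SVHN (new): 95%+; SVHN (old) → MNIST (new): 88%+ | | |
| | [33] | 2018 | CLS | Dual-memory self-organizing architecture | CORe50 | VGG + FT | Average accuracy: 79.43% at instance level, 93.92% at category level | | |
| | [66] | 2020 | Hippocampal experience replay | An autoencoder sequentially encodes sets of network weights | MNIST | EWC | Average accuracy of 95.7% on 50 separate MNIST datasets; EWC 70%+ | | |
| | [37] | 2020 | Neuronal activity patterns | Generative replay (GR) | MNIST | LwF, EWC, SI | Class-incremental MNIST: GR 95%+; LwF, EWC, SI 40%+ | | |
| Sleep | [72] | 2018 | Hippocampus-neocortex, sleep replay | Generative replay | CIFAR-100 | iCaRL | Average accuracy on CIFAR-100: rNet 75%+; iCaRL 0%+ | Memory-clearing and synaptic-plasticity-adjustment mechanisms can be combined with other brain-inspired methods | Mitigates catastrophic forgetting less effectively than the other three types of brain-inspired methods |
| | [24] | 2019 | Memory consolidation during sleep | Switching between SNN and ANN | MNIST | Fully Convolutional Network (FCN) | Average accuracy: FCN 18%+; Sleep 40% | | |
| | [74] | 2019 | Sleep and dreaming mechanisms | Switching between awake and sleep states | – | – | Storage capacity extended from α=0.14 to the maximum α=1 | | |
| Modular | [29] | 2015 | Neuromodulation | Modular network | – | – | Neural modularity performance of 94% | Mitigates catastrophic forgetting well; higher knowledge reuse than synaptic and sleep methods | Higher memory overhead than the other three types of brain-inspired methods |
| | [78] | 2017 | Neuromodulation | Diffusion-based neuromodulation | – | – | Confirms that the localized, task-specific learning proposed in [29] can form functional modules and mitigate catastrophic forgetting | | |
| | [25] | 2018 | Stimulus separation | Blocked training | Large dataset over a 2-D leaf × branch space | – | Average accuracy of 90%+ over 200 trial sequences | | |

Table 2 Summary and comparison of CL methods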

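| Type | Method | Core idea | Resistance to forgetting | Model size control | Memory overhead | Computational overhead | Robustness | Generalization |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Classical methods | Replay | Replay relevant data to restore memory | Ⅲ | Ⅱ | Ⅰ | Ⅰ | Ⅱ | Ⅱ |
| | Regularization | Protect important parameters | Ⅰ | Ⅱ | Ⅱ | Ⅱ | Ⅰ | Ⅱ |
| | Sparsification | Isolate parameters | Ⅲ | Ⅰ | Ⅰ | Ⅰ | Ⅲ | Ⅲ |
| Brain-inspired methods | Dual memory system | Cooperation of long-term and short-term memory networks | Ⅲ | Ⅱ | Ⅰ | Ⅰ | Ⅲ | Ⅱ |
| | Synaptic | Compute synaptic importance online | Ⅱ | Ⅲ | Ⅱ | Ⅱ | Ⅲ | Ⅲ |
| | Modular | Isolate parameters | Ⅲ | Ⅱ | Ⅰ | Ⅰ | Ⅲ | Ⅲ |
| | Sleep | Switch between sleep and awake states | Ⅰ | Ⅲ | Ⅲ | Ⅲ | Ⅱ | Ⅰ |

Table 3 Chinese-English glossary of terms used in this paper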

| Chinese | English | Abbreviation |
| --- | --- | --- |
| 持续学习 | Continuous Learning | CL |
| 人工神经网络 | Artificial Neural Network | ANN |
| 不遗忘学习 | Learning without Forgetting | LwF |
| 弹性权值巩固 | Elastic Weight Consolidation | EWC |
| 深度生成重放 | Deep Generative Replay | DGR |
| 增长双记忆模型 | Growing Dual-Memory | GDM |
| 生成重放 | Generative Replay | GR |
| 增益扰动网络 | Beneficial Perturbation Network | BPN |
| 智能突触 | Synaptic Intelligence | SI |
| 记忆感知突触 | Memory Aware Synapses | MAS |
| 互补学习系统 | Complementary Learning Systems | CLS |
| 脉冲神经网络 | Spiking Neural Network | SNN |
| 随机梯度下降 | Stochastic Gradient Descent | SGD |
| 梯度下降 | Gradient Descent | GD |
| 全卷积网络 | Fully Convolutional Network | FCN |
| 手写数字数据集 | Modified National Institute of Standards and Technology database | MNIST |
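Table 2 characterizes regularization as protecting important parameters, and Elastic Weight Consolidation (EWC) [21] is the standard example: a quadratic penalty pulls each parameter back toward its value after the previous task, weighted by how important that parameter was. The sketch below shows only this penalty and its effect on one gradient step. The function names, the plain-list parameter representation, and the hand-picked importance values are assumptions made for illustration; in EWC the importance weights are estimated from the diagonal of the Fisher information, which is not computed here.

```python
def ewc_penalty(params, anchor_params, importance, strength):
    """Quadratic penalty 0.5 * strength * sum_i F_i * (theta_i - theta*_i)^2.

    params        -- current parameters theta
    anchor_params -- parameters theta* saved after the previous task
    importance    -- per-parameter importance F_i (assumed given here)
    strength      -- trade-off coefficient (often written lambda)
    """
    return 0.5 * strength * sum(
        f * (p - a) ** 2
        for p, a, f in zip(params, anchor_params, importance)
    )


def ewc_penalty_grad(params, anchor_params, importance, strength):
    """Gradient of the penalty with respect to each parameter."""
    return [
        strength * f * (p - a)
        for p, a, f in zip(params, anchor_params, importance)
    ]


def regularized_step(params, task_grad, anchor_params, importance,
                     strength, lr):
    """One gradient-descent step on new-task loss plus the penalty."""
    penalty_grad = ewc_penalty_grad(params, anchor_params, importance,
                                    strength)
    return [
        p - lr * (g + r)
        for p, g, r in zip(params, task_grad, penalty_grad)
    ]


if __name__ == "__main__":
    theta = [1.0, 1.0]
    theta_old = [0.0, 0.0]
    fisher = [10.0, 0.0]   # first parameter important, second free to move
    print(ewc_penalty(theta, theta_old, fisher, strength=1.0))
    print(regularized_step(theta, [0.0, 0.0], theta_old, fisher,
                           strength=1.0, lr=0.1))
```

A parameter with high importance is drawn strongly back toward its old value, while a parameter with zero importance is left free to adapt to the new task, which is the selective adjustment of weight plasticity that Table 1 attributes to [21].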

[1] LIU Yinhan, GU Jiatao, GOYAL N, et al. Multilingual denoising pre-training for neural machine translation[J]. Transactions of the Association for Computational Linguistics, 2020, 8: 726–742. doi: 10.1162/tacl_a_00343
[2] KHAN N S, ABID A, and ABID K. A novel Natural Language Processing (NLP)-based machine translation model for English to Pakistan sign language translation[J]. Cognitive Computation, 2020, 12(4): 748–765. doi: 10.1007/s12559-020-09731-7
[3] ZHOU Long, ZHANG Jiajun, and ZONG Chengqing. Synchronous bidirectional neural machine translation[J]. Transactions of the Association for Computational Linguistics, 2019, 7: 91–105. doi: 10.1162/tacl_a_00256
[4] LI Lin, GOH T T, and JIN Dawei. How textual quality of online reviews affect classification performance: A case of deep learning sentiment analysis[J]. Neural Computing and Applications, 2020, 32(9): 4387–4415. doi: 10.1007/s00521-018-3865-7
[5] PORIA S, CAMBRIA E, HOWARD N, et al. Fusing audio, visual and textual clues for sentiment analysis from multimodal content[J]. Neurocomputing, 2016, 174: 50–59. doi: 10.1016/j.neucom.2015.01.095
[6] SUN Xiao, PENG Xiaoqi, HU Min, et al. Extended multi-modality features and deep learning based microblog short text sentiment analysis[J]. Journal of Electronics & Information Technology, 2017, 39(9): 2048–2055. doi: 10.11999/JEIT160975
[7] YANG Jing, LI Shaobo, WANG Zheng, et al. Using deep learning to detect defects in manufacturing: A comprehensive survey and current challenges[J]. Materials, 2020, 13(24): 5755. doi: 10.3390/ma13245755
[8] JIANG Fengling, KONG Bin, LI Jingpeng, et al. Robust visual saliency optimization based on bidirectional Markov chains[J]. Cognitive Computation, 2021, 13(1): 69–80. doi: 10.1007/s12559-020-09724-6
[9] ZHOU Zhiguo, JING Zhao, WANG Qiuling, et al. Object detection and tracking of unmanned surface vehicles based on spatial-temporal information fusion[J]. Journal of Electronics & Information Technology, 2021, 43(6): 1698–1705. doi: 10.11999/JEIT200223
[10] SHIH H C, CHENG H Y, and FU J C. Image classification using synchronized rotation local ternary pattern[J]. IEEE Sensors Journal, 2020, 20(3): 1656–1663. doi: 10.1109/JSEN.2019.2947994
[11] ZHANG Lei, ZHAO Yao, and ZHU Zhenfeng. Extracting shared subspace incrementally for multi-label image classification[J]. The Visual Computer, 2014, 30(12): 1359–1371. doi: 10.1007/s00371-013-0891-4
[12] WANG Qi, LIU Xinchen, LIU Wu, et al. MetaSearch: Incremental product search via deep meta-learning[J]. IEEE Transactions on Image Processing, 2020, 29: 7549–7564. doi: 10.1109/TIP.2020.3004249
[13] DELANGE M, ALJUNDI R, MASANA M, et al. A continual learning survey: Defying forgetting in classification tasks[J/OL]. IEEE Transactions on Pattern Analysis and Machine Intelligence. https://ieeexplore.ieee.org/document/9349197, 2021.
[14] HASSELMO M E. Avoiding catastrophic forgetting[J]. Trends in Cognitive Sciences, 2017, 21(6): 407–408. doi: 10.1016/j.tics.2017.04.001
[15] MO Jianwen and CHEN Yaojia. Class incremental learning based on variational pseudo-sample generator with classification feature constraints[J]. Control and Decision, 2021, 36(10): 2475–2482. doi: 10.13195/j.kzyjc.2020.0228
[16] MERMILLOD M, BUGAISKA A, and BONIN P. The stability-plasticity dilemma: Investigating the continuum from catastrophic forgetting to age-limited learning effects[J]. Frontiers in Psychology, 2013, 4: 504. doi: 10.3389/fpsyg.2013.00504
[17] LESORT T, LOMONACO V, STOIAN A, et al. Continual learning for robotics: Definition, framework, learning strategies, opportunities and challenges[J]. Information Fusion, 2020, 58: 52–68. doi: 10.1016/j.inffus.2019.12.004
[18] HADSELL R, RAO D, RUSU A A, et al. Embracing change: Continual learning in deep neural networks[J]. Trends in Cognitive Sciences, 2020, 24(12): 1028–1040. doi: 10.1016/j.tics.2020.09.004
[19] PARISI G I, KEMKER R, PART J L, et al. Continual lifelong learning with neural networks: A review[J]. Neural Networks, 2019, 113: 54–71. doi: 10.1016/j.neunet.2019.01.012
[20] ZENKE F, POOLE B, and GANGULI S. Continual learning through synaptic intelligence[C]. The 34th International Conference on Machine Learning, Sydney, Australia, 2017: 3987–3995.
[21] KIRKPATRICK J, PASCANU R, RABINOWITZ N, et al. Overcoming catastrophic forgetting in neural networks[J]. Proceedings of the National Academy of Sciences of the United States of America, 2017, 114(13): 3521–3526. doi: 10.1073/pnas.1611835114
[22] SHIN H, LEE J K, KIM J, et al. Continual learning with deep generative replay[C]. The 31st International Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, USA, 2017: 2994–3003.
[23] GONZÁLEZ O C, SOKOLOV Y, KRISHNAN G P, et al. Can sleep protect memories from catastrophic forgetting?[J]. eLife, 2020, 9: e51005. doi: 10.7554/eLife.51005
[24] KRISHNAN G P, TADROS T, RAMYAA R, et al. Biologically inspired sleep algorithm for artificial neural networks[J/OL]. https://arxiv.org/abs/1908.02240, 2019.
[25] FLESCH T, BALAGUER J, DEKKER R, et al. Comparing continual task learning in minds and machines[J]. Proceedings of the National Academy of Sciences of the United States of America, 2018, 115(44): e10313–e10322. doi: 10.1073/pnas.1800755115
[26] MCCLOSKEY M and COHEN N J. Catastrophic interference in connectionist networks: The sequential learning problem[J]. Psychology of Learning and Motivation, 1989, 24: 109–165.
[27] BAE H, SONG S, and PARK J. The present and future of continual learning[C]. 2020 International Conference on Information and Communication Technology Convergence (ICTC), Jeju, Korea (South), 2020: 1193–1195.
[28] THRUN S. A lifelong learning perspective for mobile robot control[C]. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS'94), Munich, Germany, 1994: 23–30.
[29] ELLEFSEN K O, MOURET J B, and CLUNE J B. Neural modularity helps organisms evolve to learn new skills without forgetting old skills[J]. PLoS Computational Biology, 2015, 11(4): e1004128. doi: 10.1371/journal.pcbi.1004128
[30] LI Zhizhong and HOIEM D. Learning without forgetting[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018, 40(12): 2935–2947. doi: 10.1109/TPAMI.2017.2773081
[31] LOMONACO V and MALTONI D. Core50: A new dataset and benchmark for continuous object recognition[C]. The 1st Annual Conference on Robot Learning (CoRL 2017), Mountain View, USA, 2017: 17–26.
[32] REBUFFI S A, KOLESNIKOV A, SPERL G, et al. iCaRL: Incremental classifier and representation learning[C]. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, USA, 2017: 5533–5542.
[33] PARISI G I, TANI J, WEBER C, et al. Lifelong learning of spatiotemporal representations with dual-memory recurrent self-organization[J]. Frontiers in Neurorobotics, 2018, 12: 78. doi: 10.3389/fnbot.2018.00078
[34] FARQUHAR S and GAL Y. Towards robust evaluations of continual learning[J/OL]. https://arxiv.org/abs/1805.09733, 2018.
[35] BAWEJA C, GLOCKER B, and KAMNITSAS K. Towards continual learning in medical imaging[J/OL]. https://arxiv.org/abs/1811.02496, 2018.
[36] VAN DE VEN G M and TOLIAS A S. Three scenarios for continual learning[J/OL]. https://arxiv.org/abs/1904.07734, 2019.
[37] VAN DE VEN G M, SIEGELMANN H T, and TOLIAS A S. Brain-inspired replay for continual learning with artificial neural networks[J]. Nature Communications, 2020, 11(1): 4069. doi: 10.1038/s41467-020-17866-2
[38] JAIN S and KASAEI H. 3D_DEN: Open-ended 3D object recognition using dynamically expandable networks[J/OL]. IEEE Transactions on Cognitive and Developmental Systems. https://ieeexplore.ieee.org/document/9410594, 2021.
[39] WEN Shixian, RIOS A, GE Yunhao, et al. Beneficial perturbation network for designing general adaptive artificial intelligence systems[J/OL]. IEEE Transactions on Neural Networks and Learning Systems. https://ieeexplore.ieee.org/document/9356334, 2021.
[40] CASTRO F M, MARÍN-JIMÉNEZ M J, GUIL N, et al. End-to-end incremental learning[C]. The 15th European Conference on Computer Vision (ECCV), Munich, Germany, 2018: 241–257.
[41] SHIEH J L, UL HAQ Q M, HAQ M A, et al. Continual learning strategy in one-stage object detection framework based on experience replay for autonomous driving vehicle[J]. Sensors, 2020, 20(23): 6777. doi: 10.3390/s20236777
[42] BRAHMA P P and OTHON A. Subset replay based continual learning for scalable improvement of autonomous systems[C]. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Salt Lake City, USA, 2018: 1179–1798.
[43] WU Yue, CHEN Yinpeng, WANG Lijuan, et al. Incremental classifier learning with generative adversarial networks[J/OL]. https://arxiv.org/abs/1802.00853, 2018.
[44] OSTAPENKO O, PUSCAS M, KLEIN T, et al. Learning to remember: A synaptic plasticity driven framework for continual learning[C]. 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, USA, 2019: 11313–11321.
[45] WU Chenshen, HERRANZ L, LIU Xialei, et al. Memory replay GANs: Learning to generate images from new categories without forgetting[C]. The 32nd International Conference on Neural Information Processing Systems, Montréal, Canada, 2018: 5966–5976.
[46] LAO Qicheng, JIANG Xiang, HAVAEI M, et al. A two-stream continual learning system with variational domain-agnostic feature replay[J/OL]. IEEE Transactions on Neural Networks and Learning Systems. https://ieeexplore.ieee.org/document/9368260, 2021.
[47] JUNG S, AHN H, CHA S, et al. Adaptive group sparse regularization for continual learning[J/OL]. https://arxiv.org/abs/2003.13726, 2020.
[48] POMPONI J, SCARDAPANE S, LOMONACO V, et al. Efficient continual learning in neural networks with embedding regularization[J]. Neurocomputing, 2020, 397: 139–148. doi: 10.1016/j.neucom.2020.01.093
[49] MALTONI D and LOMONACO V. Continuous learning in single-incremental-task scenarios[J]. Neural Networks, 2019, 116: 56–73. doi: 10.1016/j.neunet.2019.03.010
[50] PARSHOTAM K and KILICKAYA M. Continual learning of object instances[C]. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, USA, 2020: 907–914.
[51] MASARCZYK W and TAUTKUTE I. Reducing catastrophic forgetting with learning on synthetic data[C]. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, USA, 2020: 1019–1024.
[52] WIXTED J T. The psychology and neuroscience of forgetting[J]. Annual Review of Psychology, 2004, 55: 235–269. doi: 10.1146/annurev.psych.55.090902.141555
[53] WANG Zifeng, JIAN Tong, CHOWDHURY K, et al. Learn-prune-share for lifelong learning[C]. 2020 IEEE International Conference on Data Mining (ICDM), Sorrento, Italy, 2020: 641–650.
[54] GOLKAR S, KAGAN M, and CHO K. Continual learning via neural pruning[J/OL]. https://arxiv.org/abs/1903.04476, 2019.
[55] YOON J, YANG E, LEE J, et al. Lifelong learning with dynamically expandable networks[J/OL]. https://arxiv.org/abs/1708.01547, 2018.
[56] HUMEAU Y and CHOQUET D. The next generation of approaches to investigate the link between synaptic plasticity and learning[J]. Nature Neuroscience, 2019, 22(10): 1536–1543. doi: 10.1038/s41593-019-0480-6
[57] BENNA M K and FUSI S. Computational principles of synaptic memory consolidation[J]. Nature Neuroscience, 2016, 19(12): 1697–1706. doi: 10.1038/nn.4401
[58] REDONDO R L and MORRIS R G M. Making memories last: The synaptic tagging and capture hypothesis[J]. Nature Reviews Neuroscience, 2011, 12(1): 17–30. doi: 10.1038/nrn2963
[59] FUSI S, DREW P J, and ABBOTT L F. Cascade models of synaptically stored memories[J]. Neuron, 2005, 45(4): 599–611. doi: 10.1016/j.neuron.2005.02.001
[60] ALJUNDI R, BABILONI F, ELHOSEINY M, et al. Memory aware synapses: Learning what (not) to forget[C]. The 15th European Conference on Computer Vision (ECCV), Munich, Germany, 2018: 144–161.
[61] SUKHOV S, LEONTEV M, MIHEEV A, et al. Prevention of catastrophic interference and imposing active forgetting with generative methods[J]. Neurocomputing, 2020, 400: 73–85. doi: 10.1016/j.neucom.2020.03.024
[62] KRAUSE J, STARK M, DENG Jia, et al. 3D object representations for fine-grained categorization[C]. 2013 IEEE International Conference on Computer Vision Workshops, Sydney, Australia, 2013: 554–561.
[63] MCCLELLAND J L, MCNAUGHTON B L, and O'REILLY R C. Why there are complementary learning systems in the hippocampus and neocortex: Insights from the successes and failures of connectionist models of learning and memory[J]. Psychological Review, 1995, 102(3): 419–457. doi: 10.1037/0033-295x.102.3.419
[64] KUMARAN D, HASSABIS D, and MCCLELLAND J L. What learning systems do intelligent agents need? Complementary learning systems theory updated[J]. Trends in Cognitive Sciences, 2016, 20(7): 512–534. doi: 10.1016/j.tics.2016.05.004
[65] HATTORI M. A biologically inspired dual-network memory model for reduction of catastrophic forgetting[J]. Neurocomputing, 2014, 134: 262–268. doi: 10.1016/j.neucom.2013.08.044
[66] MANDIVARAPU J K, CAMP B, and ESTRADA R. Self-net: Lifelong learning via continual self-modeling[J]. Frontiers in Artificial Intelligence, 2020, 3: 19. doi: 10.3389/frai.2020.00019
[67] DIEKELMANN S and BORN J. The memory function of sleep[J]. Nature Reviews Neuroscience, 2010, 11(2): 114–126. doi: 10.1038/nrn2762
[68] FELDMAN D E. Synaptic mechanisms for plasticity in neocortex[J]. Annual Review of Neuroscience, 2009, 32: 33–55. doi: 10.1146/annurev.neuro.051508.135516
[69] TONONI G and CIRELLI C. Sleep and synaptic homeostasis: A hypothesis[J]. Brain Research Bulletin, 2003, 62(2): 143–150. doi: 10.1016/j.brainresbull.2003.09.004
[70] WEI Yi'na, KRISHNAN G P, KOMAROV M, et al. Differential roles of sleep spindles and sleep slow oscillations in memory consolidation[J]. PLoS Computational Biology, 2018, 14(7): e1006322. doi: 10.1371/journal.pcbi.1006322
[71] STICKGOLD R. Parsing the role of sleep in memory processing[J]. Current Opinion in Neurobiology, 2013, 23(5): 847–853. doi: 10.1016/j.conb.2013.04.002
[72] KEMKER R and KANAN C. FearNet: Brain-inspired model for incremental learning[C]. The 6th International Conference on Learning Representations, Vancouver, Canada, 2018.
[73] HOPFIELD J J. Neural networks and physical systems with emergent collective computational abilities[J]. Proceedings of the National Academy of Sciences of the United States of America, 1982, 79(8): 2554–2558. doi: 10.1201/9780429500459-2
[74] FACHECHI A, AGLIARI E, and BARRA A. Dreaming neural networks: Forgetting spurious memories and reinforcing pure ones[J]. Neural Networks, 2019, 112: 24–40. doi: 10.1016/j.neunet.2019.01.006
[75] HINTZE A and ADAMI C. Evolution of complex modular biological networks[J]. PLoS Computational Biology, 2008, 4(2): e23. doi: 10.1371/journal.pcbi.004
[76] ESPINOSA-SOTO C and WAGNER A. Specialization can drive the evolution of modularity[J]. PLoS Computational Biology, 2010, 6(3): e1000719. doi: 10.1371/journal.pcbi.1000719
[77] VERBANCSICS P and STANLEY K O. Constraining connectivity to encourage modularity in HyperNEAT[C]. The 13th Annual Conference on Genetic and Evolutionary Computation (GECCO), Dublin, Ireland, 2011: 1483–1490.
[78] VELEZ R and CLUNE J. Diffusion-based neuromodulation can eliminate catastrophic forgetting in simple neural networks[J]. PLoS One, 2017, 12(11): e0187736. doi: 10.1371/journal.pone.0187736
[79] MAJI S, RAHTU E, KANNALA J, et al. Fine-grained visual classification of aircraft[J/OL]. https://arxiv.org/abs/1306.5151, 2013.
[80] HUANG Gao, LIU Zhuang, VAN DER MAATEN L, et al. Densely connected convolutional networks[C]. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, USA, 2017: 2261–2269.
[81] RANNEN A, ALJUNDI R, BLASCHKO M B, et al. Encoder based lifelong learning[C]. 2017 IEEE International Conference on Computer Vision, Venice, Italy, 2017: 1329–1337.
[82] LEE S W, KIM J H, JUN J, et al. Overcoming catastrophic forgetting by incremental moment matching[C]. The 31st International Conference on Neural Information Processing Systems, Long Beach, USA, 2017: 4655–4665.
[83] MASANA M, LIU Xialei, TWARDOWSKI B, et al. Class-incremental learning: Survey and performance evaluation on image classification[J/OL]. https://arxiv.org/abs/2010.15277, 2020.
[84] KAMRANI F, ELERS A, COHEN M, et al. MarioDAgger: A time and space efficient autonomous driver[C]. The 19th IEEE International Conference on Machine Learning and Applications (ICMLA), Miami, USA, 2020: 1491–1498.
[85] MASCHLER B, VIETZ H, JAZDI N, et al. Continual learning of fault prediction for turbofan engines using deep learning with elastic weight consolidation[C]. The 25th IEEE International Conference on Emerging Technologies and Factory Automation (ETFA), Vienna, Austria, 2020: 959–966.
[86] KUMAR S, VANKAYALA S K, SAHOO B S, et al. Continual learning-based channel estimation for 5G millimeter-wave systems[C]. The IEEE 18th Annual Consumer Communications & Networking Conference (CCNC), Las Vegas, USA, 2021: 1–6.
[87] ATKINSON C, MCCANE B, SZYMANSKI L, et al. Pseudo-rehearsal: Achieving deep reinforcement learning without catastrophic forgetting[J]. Neurocomputing, 2021, 428: 291–307. doi: 10.1016/j.neucom.2020.11.050
[88] KUMAR S, DUTTA S, CHATTURVEDI S, et al. Strategies for Enhancing Training and Privacy in Blockchain Enabled Federated Learning[C]. 2020 IEEE Sixth International Conference on Multimedia Big Data (BigMM), New Delhi, India, 2020: 333–340.
[89] CHEN J. Continual learning for addressing optimization problems with a snake-like robot controlled by a self-organizing model[J]. Applied Sciences, 2020, 10(14): 4848. doi: 10.3390/app10144848
[90] XIONG Fangzhou, LIU Zhiyong, HUANG Kaizhu, et al. State primitive learning to overcome catastrophic forgetting in robotics[J]. Cognitive Computation, 2021, 13(2): 394–402. doi: 10.1007/s12559-020-09784-8
[91] LEE S. Accumulating conversational skills using continual learning[C]. 2018 IEEE Spoken Language Technology Workshop (SLT), Athens, Greece, 2018: 862–867.
[92] HAYES T L and KANAN C. Lifelong machine learning with deep streaming linear discriminant analysis[C]. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, USA, 2020: 887–896.
[93] KEMKER R, MCCLURE M, ABITINO A, et al. Measuring catastrophic forgetting in neural networks[C]. The 32nd AAAI Conference on Artificial Intelligence, New Orleans, USA, 2018: 3390–3398.
[94] BUSHEY D, TONONI G, and CIRELLI C. Sleep and synaptic homeostasis: Structural evidence in Drosophila[J]. Science, 2011, 332(6037): 1576–1581. doi: 10.1126/science.1202839
