Target Drift Discriminative Network Based on Dual-template Siamese Structure in Long-term Tracking


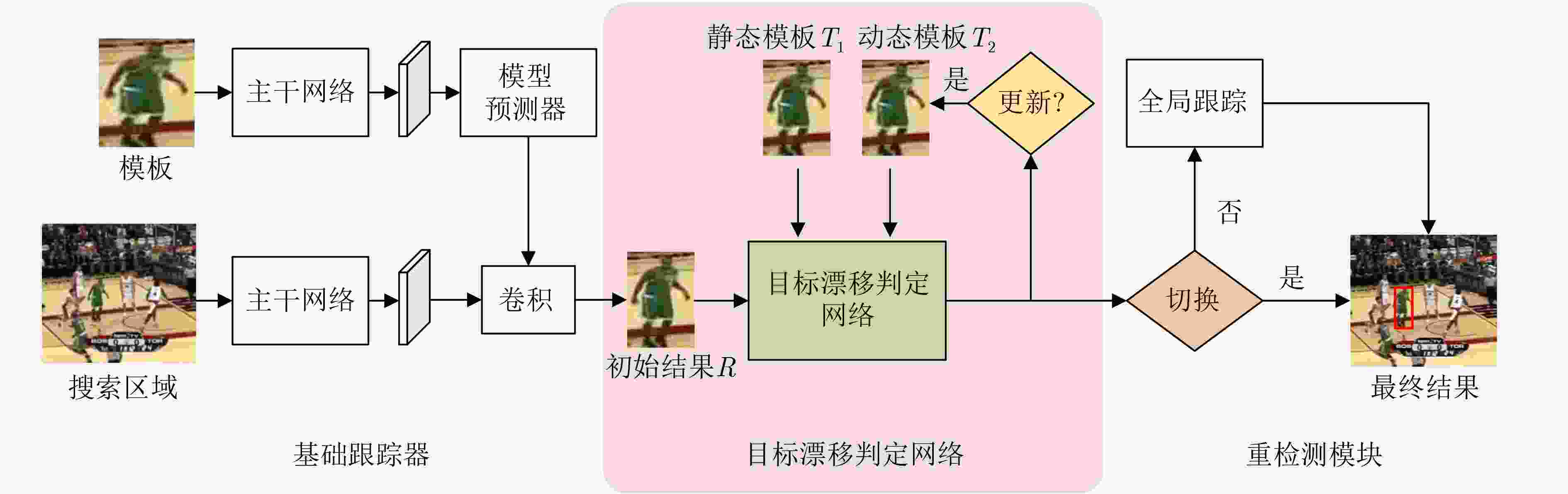

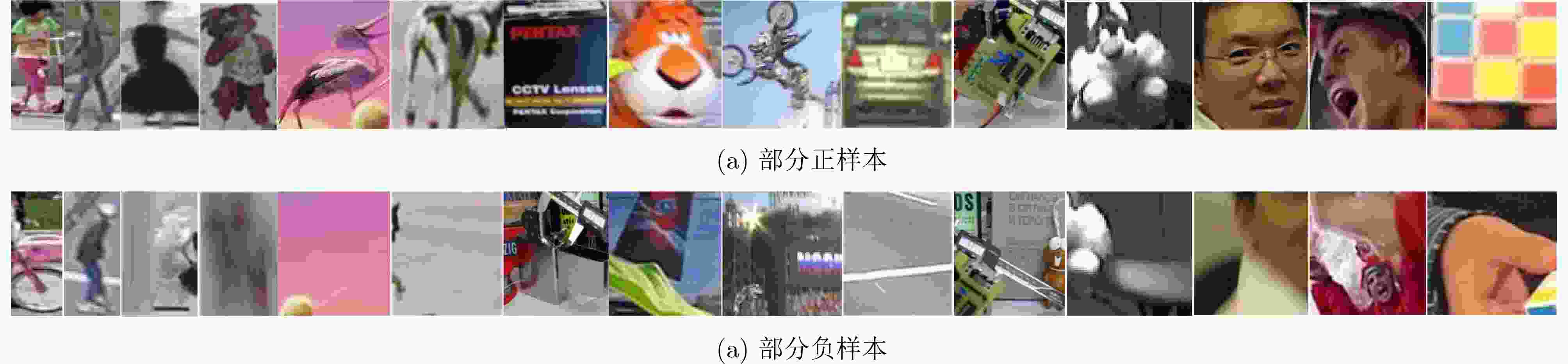

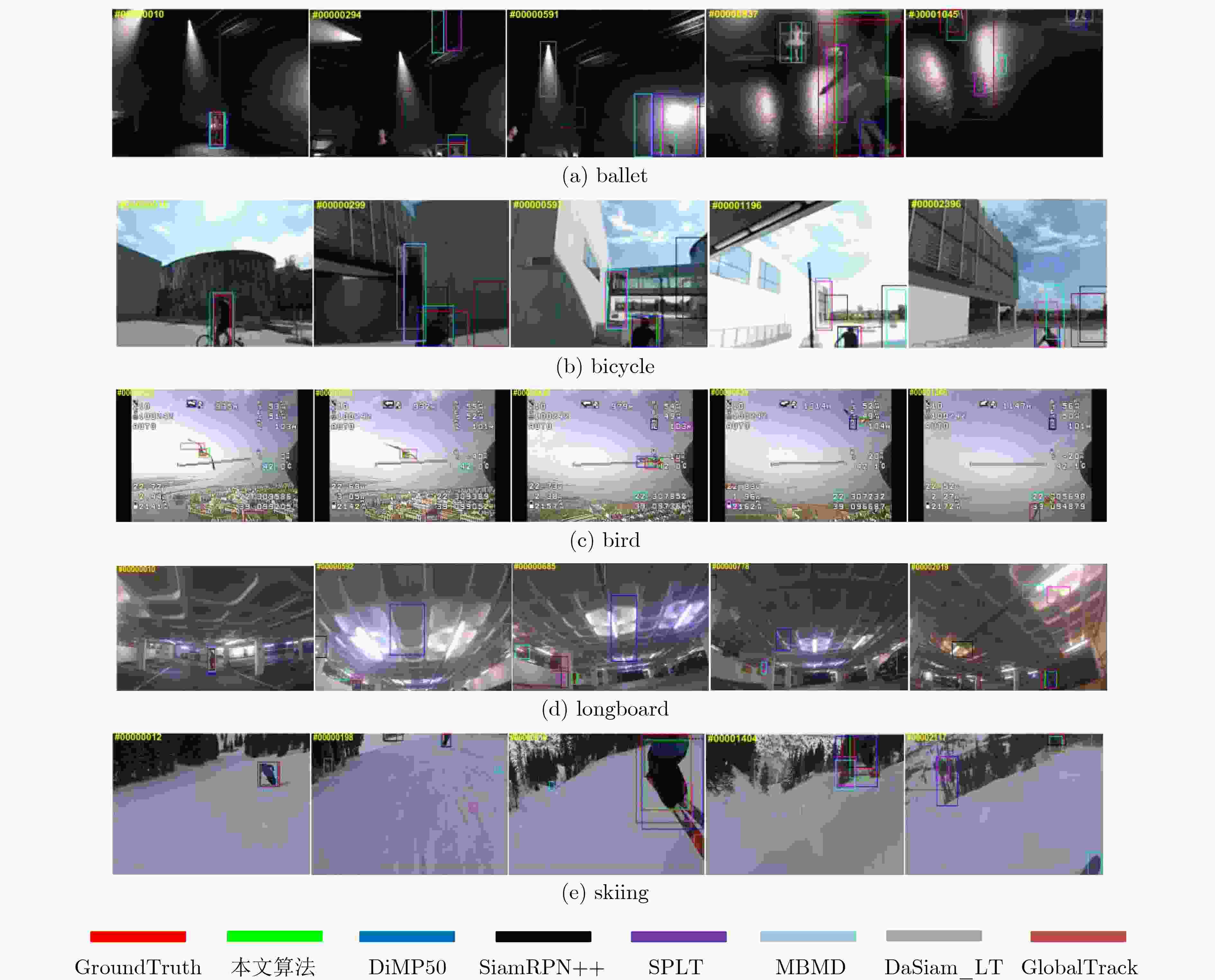

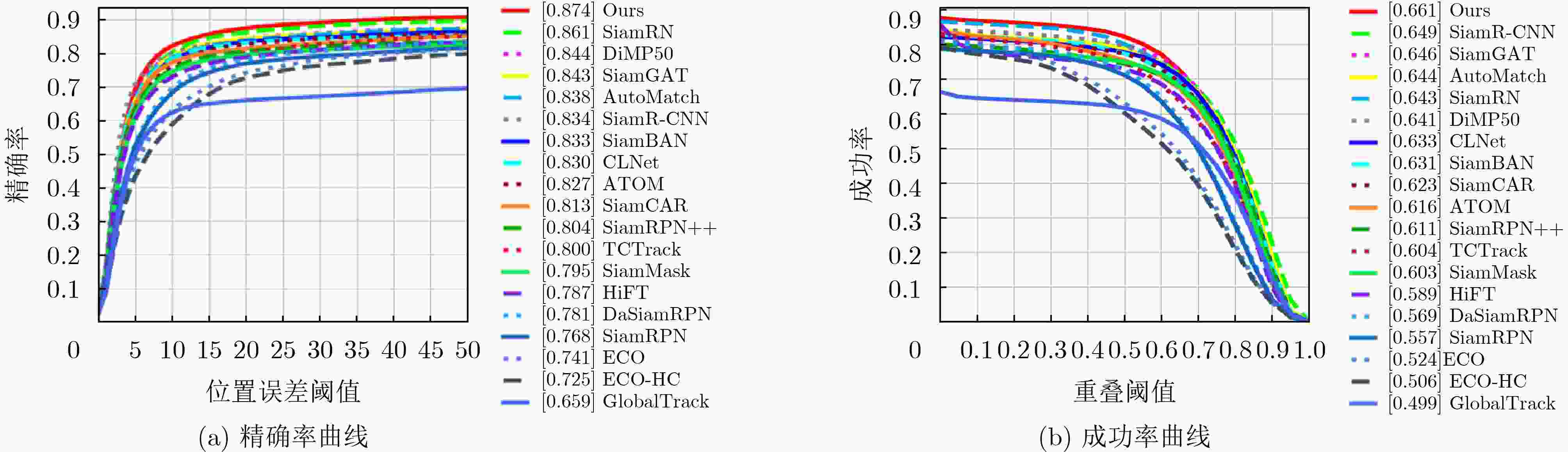

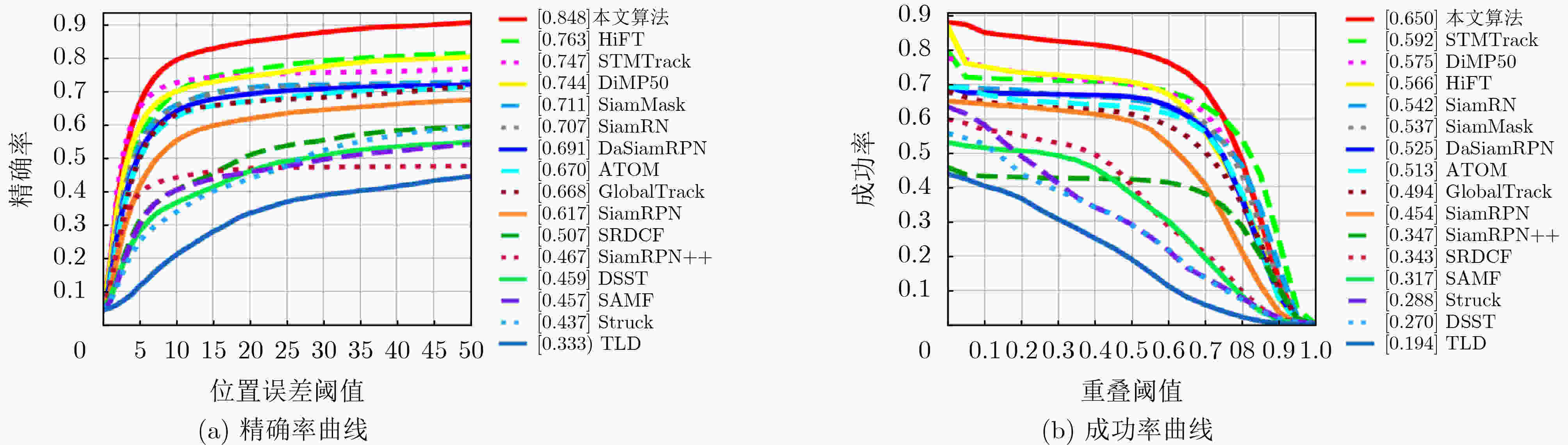

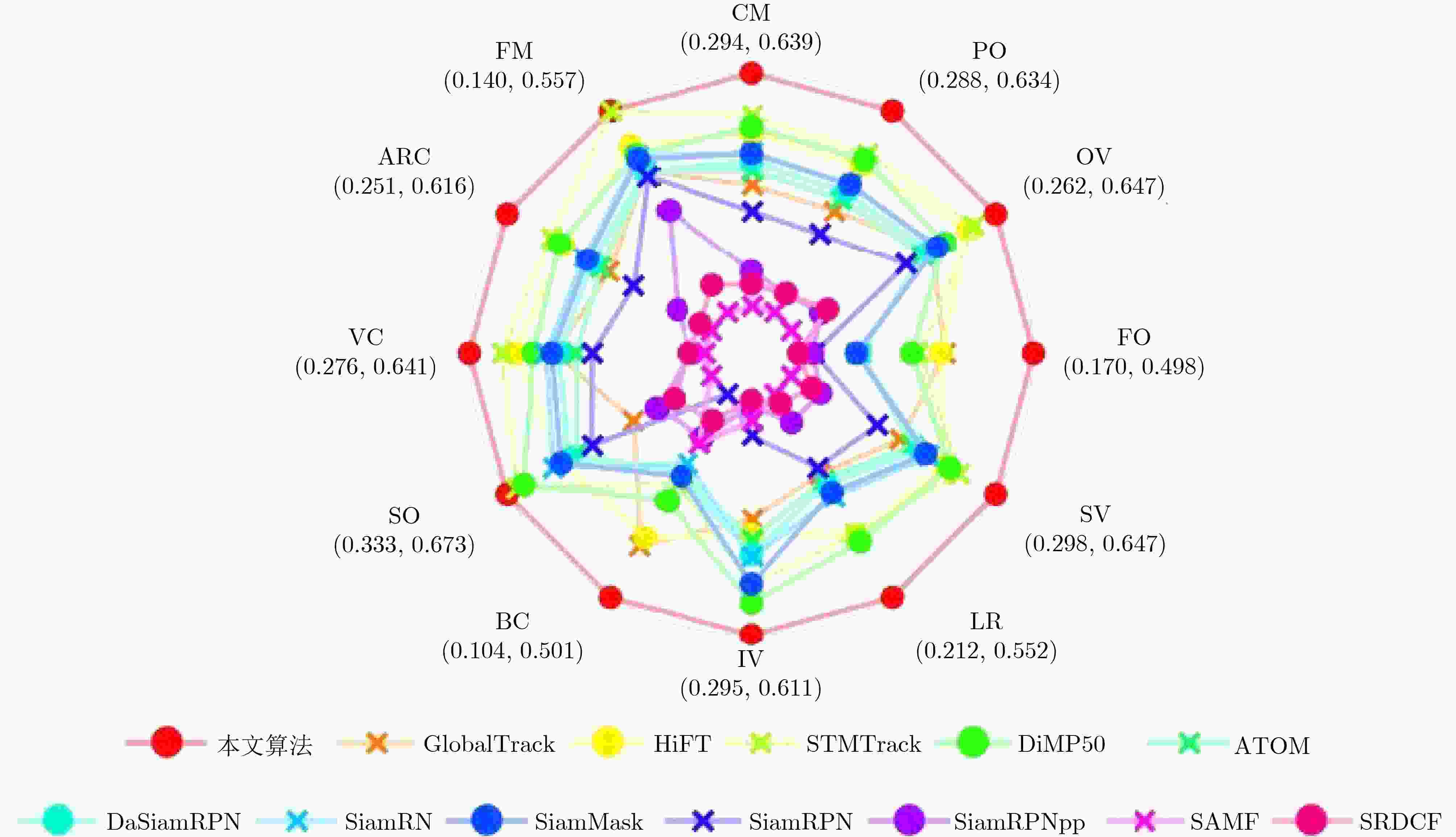

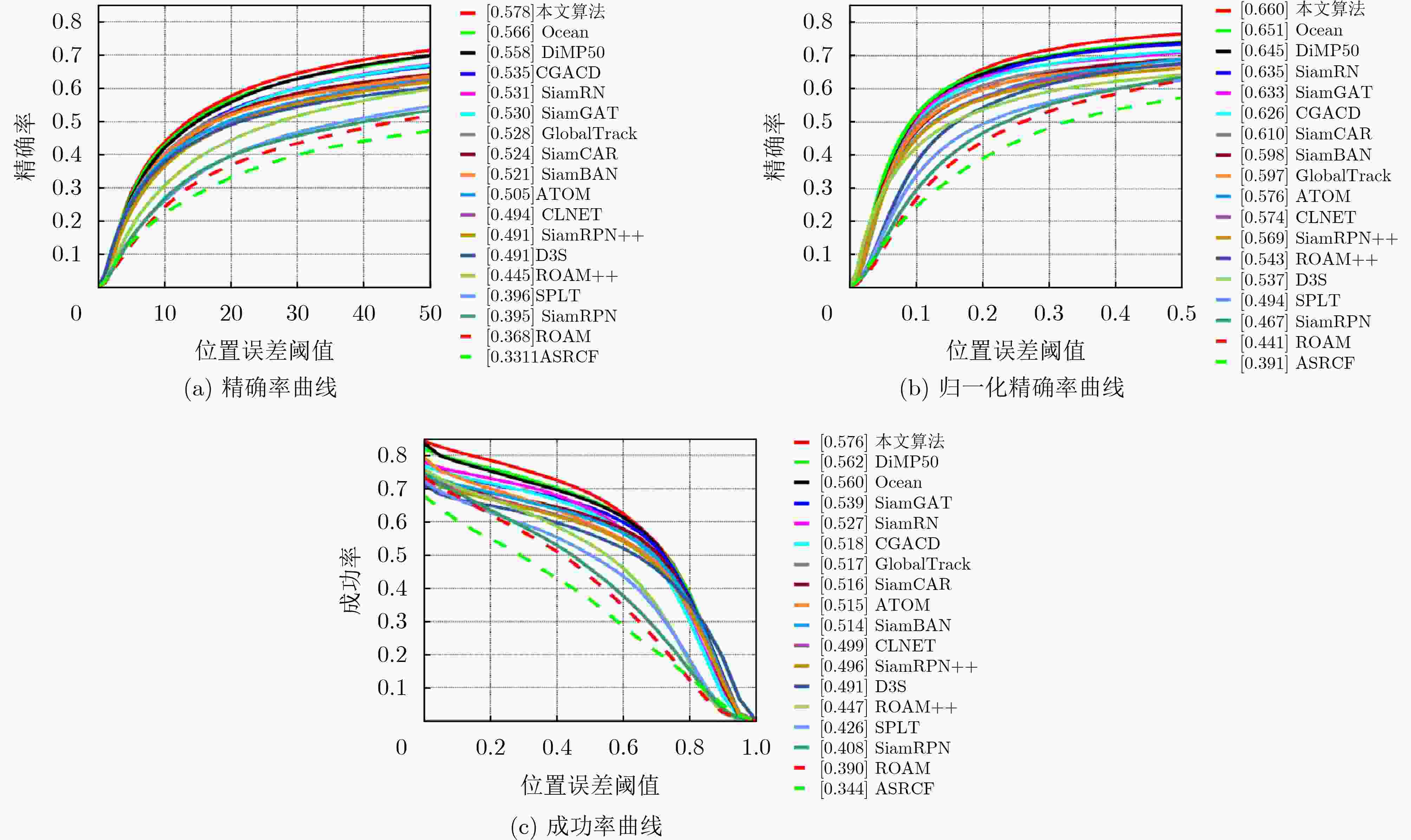

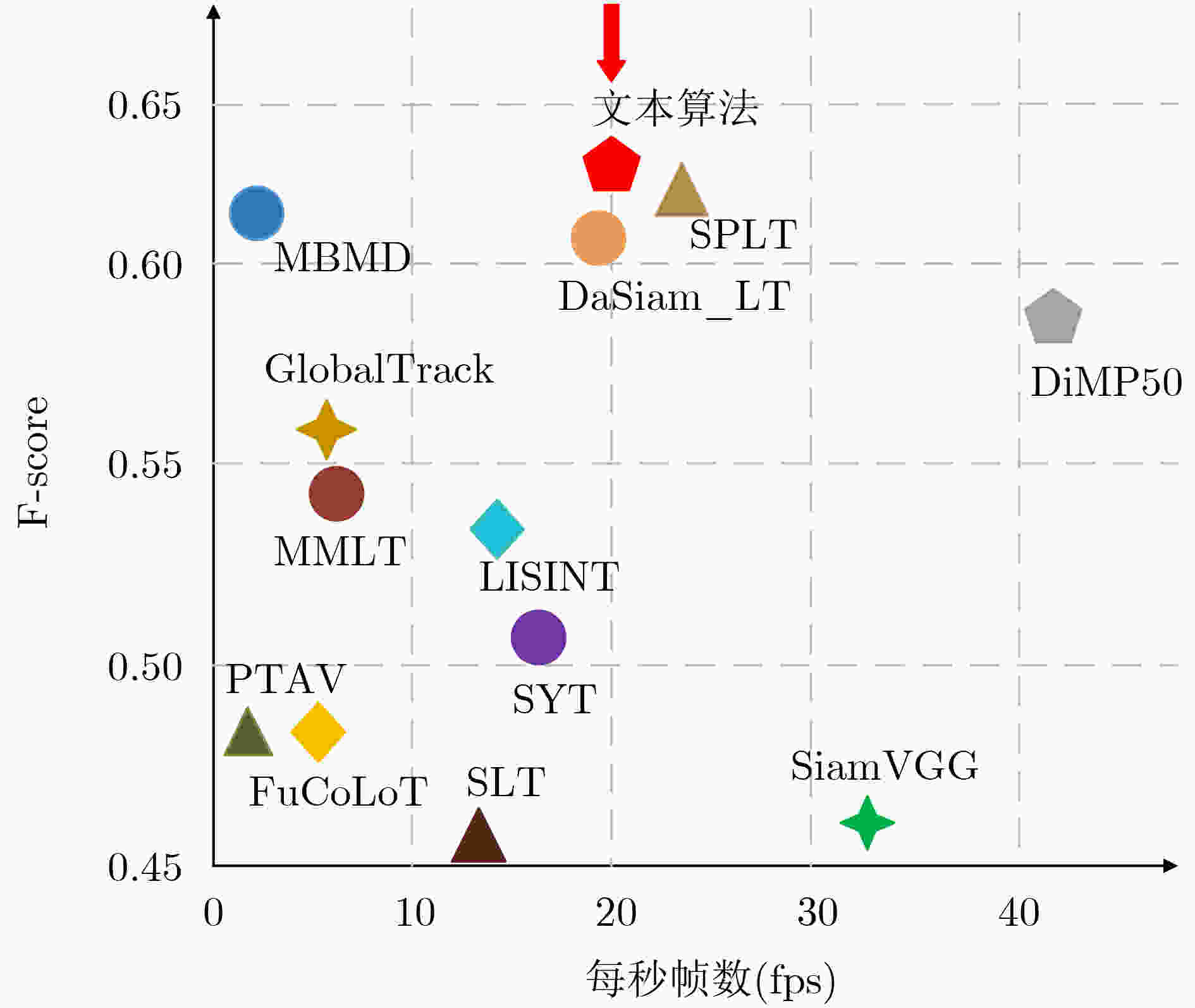

Abstract: In long-term visual tracking, most methods for deciding whether the target has been lost rely on manually chosen thresholds. Optimal thresholds are usually hard to select, which weakens the generalization ability of long-term tracking algorithms. This paper proposes a target drift Discriminative Network (DNet) that requires no manually selected threshold. The network adopts a Siamese structure and uses a static template and a dynamic template jointly to decide whether the tracking result has been lost; the dynamic template improves the algorithm's ability to adapt to changes in target appearance. A sample-rich dataset is built to train the proposed network. To verify its effectiveness, DNet is combined with a base tracker and a re-detection module to form a complete long-term tracking algorithm, which is tested on classical visual tracking datasets including UAV20L, LaSOT, VOT2018-LT and VOT2020-LT. Experimental results show that, compared with the base tracker, tracking accuracy and success rate on UAV20L improve by 10.4% and 7.5%, respectively.


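Table 1 Tracking precision on UAV20L for different confidence thresholds (best result in bold)

| Threshold | 0.70 | 0.75 | 0.80 | 0.85 | 0.90 |
| --- | --- | --- | --- | --- | --- |
| Precision | 0.829 | **0.848** | 0.842 | 0.841 | 0.836 |

Table 2 Division of the training data

| | Positive samples | Negative samples |
| --- | --- | --- |
| Training set | 45352 | 53836 |
| Validation set | 11338 | 13458 |
| Total | 56690 | 67294 |

Algorithm 1 Target drift discriminative network based on a dual-template Siamese structure in long-term visual tracking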

输入:图像序列:I1,I2,···,In;初始目标位置:P0=(x0, y0, w, h) 输出:预估目标位置:Pn=( x0, y0, w, h)。 For i=2,3,···,n do: 步骤1 跟踪目标 根据上一帧中心位置裁剪出搜索区域Search Region; 提取基准模板和搜索区域的特征; 将经过模型预测器的模板特征与搜索区域特征进行互相关Corr操

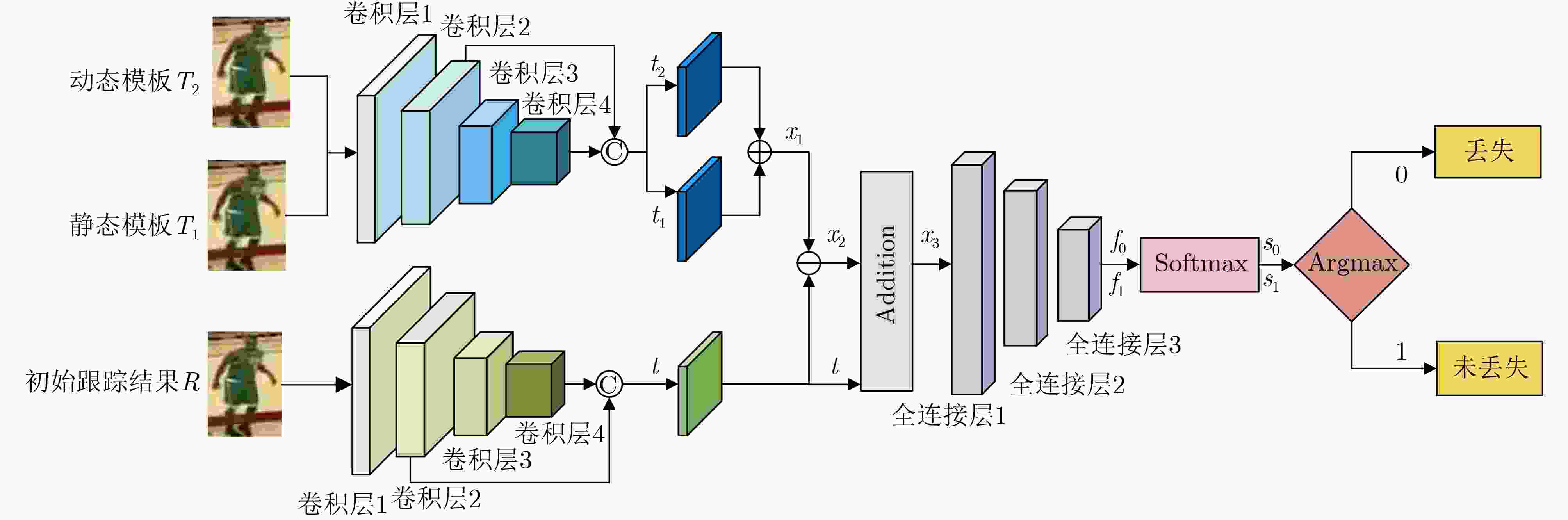

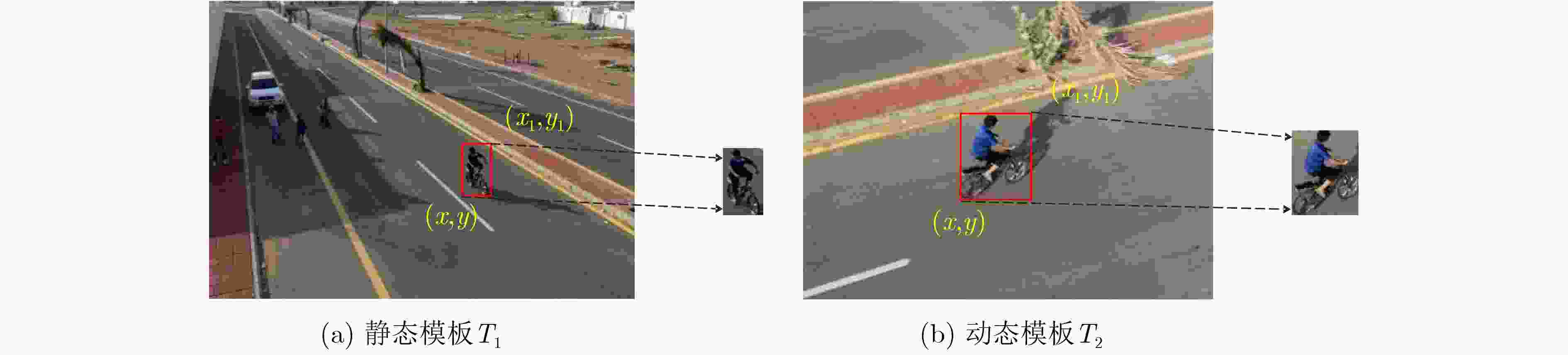

作,得到结果响应图,即最高响应点位置是被跟踪目标的位置。步骤2基于双模板Siamese结构的目标漂移判定网络DNet 输入初始跟踪结果R、静态模板T1与动态模板T2; 经过4层卷积(Conv1~Conv4),并将浅层特征与深层特征相结

合,最终提取到特征t,t1,t2;对双模板特征t1和t2进行融合之后,得到特征x1,并与初始跟踪

结果t进行特征相减,得到特征x2;特征x2与初始跟踪结果t进行Addtion操作,得到最终特征x3; 将特征x3送入3层全连接(FC1~FC3)中降参数; 随后使用Softmax函数与Argmax函数得到最终结果0或1,即目

标丢失或未丢失;若判断目标未丢失,同时基础跟踪器中的置信度分数Fmax大于阈

值$ \sigma $,则更新动态模板T2。步骤3 重检测模块 如果判定网络判断目标丢失同时置信度分数 Fmax小于0.3,则启

动重检测模块;将当前帧的整幅图像送入GlobalTrack算法中; 最后得到目标框,即为被跟踪目标。 表 3 在不同数据集下不同判定方法的阈值选取

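| | UAV123 | UAV20L | LaSOT | VOT2018-LT | VOT2020-LT |
| --- | --- | --- | --- | --- | --- |
| PSR | 5 | 9 | 5 | 7 | 8 |
| APCE | 16 | 20 | 19 | 19 | 20 |
| DNet | – | – | – | – | – |

Table 4 Ablation results on VOT2018-LT (best results in bold)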

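| DiMP50 / MDNet / PSR / APCE&Fmax / DNet&Fmax / GlobalTrack | F-score | FPS |
| --- | --- | --- |
| √ √ √ | 0.614 | 6.5 |
| √ √ √ | 0.627 | 21 |
| √ √ √ | 0.629 | **22** |
| √ √ √ | **0.630** | 20 |

Table 5 Performance of different algorithms on VOT2018-LT (best and second-best results in bold and italics)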

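| | FuCoLoT | PTAVplus | DaSiam_LT | MBMD | DiMP50 | SPLT | GlobalTrack | TJLGS | ELGLT | Ours |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| F-score | 0.480 | 0.481 | 0.607 | 0.610 | 0.588 | 0.616 | 0.555 | 0.586 | **0.638** | *0.630* |
| Pr | 0.539 | 0.595 | 0.627 | 0.634 | 0.564 | 0.633 | 0.503 | *0.649* | **0.669** | 0.643 |
| Re | 0.432 | 0.404 | 0.588 | 0.588 | *0.614* | 0.600 | 0.528 | 0.535 | 0.610 | **0.618** |

Table 6 Performance evaluation on VOT2020-LT (best and second-best results in bold and italics)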

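| | ltMDNet | MBMD | mbdet | SPLT | DiMP50 | GlobalTrack | SiamRN | TJLGS | STMTrack | TACT | ELGLT | Ours |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| F-score | 0.574 | 0.575 | 0.567 | 0.565 | 0.567 | 0.520 | 0.444 | 0.515 | 0.550 | 0.569 | *0.590* | **0.599** |
| Pr | **0.649** | 0.623 | 0.609 | 0.565 | 0.606 | 0.529 | 0.575 | 0.628 | 0.611 | 0.578 | *0.637* | 0.609 |
| Re | 0.514 | 0.534 | 0.530 | 0.544 | 0.533 | 0.512 | 0.361 | 0.437 | 0.500 | *0.561* | 0.550 | **0.590** |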
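The F-score, Pr and Re values reported for VOT2018-LT and VOT2020-LT can be cross-checked against each other. The sketch below assumes the standard VOT long-term definition, in which the F-score is the harmonic mean of tracking precision (Pr) and recall (Re); the function name `f_score` is illustrative and not taken from the paper.

```python
def f_score(prec, rec):
    """Harmonic mean of precision and recall (assumed VOT-LT definition)."""
    if prec + rec == 0:
        return 0.0
    return 2 * prec * rec / (prec + rec)


# (Pr, Re, reported F-score) of the proposed tracker, copied from the
# VOT2018-LT and VOT2020-LT tables.
reported = {
    "VOT2018-LT": (0.643, 0.618, 0.630),
    "VOT2020-LT": (0.609, 0.590, 0.599),
}

for dataset, (prec, rec, f_reported) in reported.items():
    f_computed = f_score(prec, rec)
    print(f"{dataset}: computed {f_computed:.3f}, reported {f_reported:.3f}")
```

Under this assumption the recomputed values agree with the reported F-scores to three decimal places for both datasets.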

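The per-frame decision rule of Steps 2 and 3 of the algorithm can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `Action`, `dnet_label` and `frame_action` are hypothetical, the two-element `logits` list stands in for the output of FC3, and the value of $\sigma$ is left as a parameter because the text does not state it.

```python
import math
from enum import Enum


class Action(Enum):
    KEEP = "keep"                  # keep the base tracker result, no update
    UPDATE_TEMPLATE = "update_t2"  # refresh the dynamic template T2
    REDETECT = "redetect"          # run global re-detection on the full frame


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dnet_label(logits):
    """Argmax of the softmax output: 0 = target lost, 1 = target not lost."""
    probs = softmax(logits)
    return max(range(len(probs)), key=probs.__getitem__)


def frame_action(logits, f_max, sigma, redetect_thresh=0.3):
    """Combine the DNet label with the base tracker confidence F_max.

    sigma is the template-update threshold (value assumed by the caller);
    redetect_thresh = 0.3 follows Step 3 of the algorithm.
    """
    label = dnet_label(logits)
    if label == 1 and f_max > sigma:
        return Action.UPDATE_TEMPLATE
    if label == 0 and f_max < redetect_thresh:
        return Action.REDETECT
    return Action.KEEP


# Illustrative call; sigma = 0.75 is only an example value.
print(frame_action([0.2, 1.5], f_max=0.9, sigma=0.75))
```

Frames on which DNet and the confidence score disagree (for example, label 0 with a high $F_{\max}$) fall through to `KEEP` in this sketch, since the algorithm only specifies actions for the two agreeing cases.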
